Abstract

Accurate gene annotation is essential for deciphering how genomic sequences map to their functional roles. However, current methods struggle to model complex patterns of gene transmission, such as vertical inheritance and horizontal gene transfer. Here we introduce ANNEVO, a mixture-of-experts-based genomic language model that directly models distal sequence dependencies and joint evolutionary relationships from diverse genomes, enabling precise ab initio gene annotation. Through extensive benchmarking on 566 phylogenetically diverse species, we demonstrate that ANNEVO substantially outperforms existing ab initio methods and achieves performance comparable to that of state-of-the-art annotation pipelines. Furthermore, because ANNEVO does not depend on external evidence, it delivers annotations that are more complete than the reference annotations for a broad range of species and corrects errors within them. These advances will improve genome sequence interpretation and provide a framework capable of integrating evolutionary insights.

Data availability

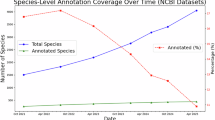

The sources of the RNA-seq data are listed in Supplementary Table 9. The RefSeq genomes and annotations are listed in Supplementary Tables 23–27 and are available from NCBI’s FTP site at https://ftp.ncbi.nlm.nih.gov/genomes/refseq/. The Ensembl genomes and annotations are available from Ensembl release 112 at https://ftp.ensembl.org/pub/release-112/, Ensembl Fungi release 59 at https://ftp.ensemblgenomes.ebi.ac.uk/pub/fungi/release-59/ and Ensembl Plants release 59 at https://ftp.ensemblgenomes.ebi.ac.uk/pub/plants/release-59/.

Code availability

ANNEVO is available via GitHub at https://github.com/xjtu-omics/ANNEVO. The repository is free for non-commercial use by academic, government and nonprofit/not-for-profit institutions.

References

Yandell, M. & Ence, D. A beginner’s guide to eukaryotic genome annotation. Nat. Rev. Genet. 13, 329–342 (2012).

Lewin, H. A. et al. The Earth BioGenome Project 2020: starting the clock. Proc. Natl Acad. Sci. USA 119, e2115635118 (2022).

Jumper, J. et al. Highly accurate protein structure prediction with AlphaFold. Nature 596, 583–589 (2021).

Stanke, M. et al. AUGUSTUS: ab initio prediction of alternative transcripts. Nucleic Acids Res. 34, W435–W439 (2006).

Korf, I. Gene finding in novel genomes. BMC Bioinf. 5, 59 (2004).

Lukashin, A. V. & Borodovsky, M. GeneMark.hmm: new solutions for gene finding. Nucleic Acids Res. 26, 1107–1115 (1998).

Majoros, W. H., Pertea, M. & Salzberg, S. L. TigrScan and GlimmerHMM: two open source ab initio eukaryotic gene-finders. Bioinformatics 20, 2878–2879 (2004).

Burge, C. & Karlin, S. Prediction of complete gene structures in human genomic DNA. J. Mol. Biol. 268, 78–94 (1997).

Thibaud-Nissen, F., Souvorov, A., Murphy, T., DiCuccio, M. & Kitts, P. Eukaryotic genome annotation pipeline. The NCBI Handbook Vol. 2 (National Center for Biotechnology Information, 2013).

Holt, C. & Yandell, M. MAKER2: an annotation pipeline and genome-database management tool for second-generation genome projects. BMC Bioinf. 12, 1–14 (2011).

Gabriel, L. et al. BRAKER3: fully automated genome annotation using RNA-seq and protein evidence with GeneMark-ETP, AUGUSTUS, and TSEBRA. Genome Res. 34, 769–777 (2024).

Brůna, T., Lomsadze, A. & Borodovsky, M. GeneMark-ETP significantly improves the accuracy of automatic annotation of large eukaryotic genomes. Genome Res. 34, 757–768 (2024).

Brůna, T. et al. Galba: genome annotation with miniprot and AUGUSTUS. BMC Bioinf. 24, 327 (2023).

Aken, B. L. et al. The Ensembl gene annotation system. Database 2016, baw093 (2016).

Morgulis, A., Gertz, E. M., Schäffer, A. A. & Agarwala, R. WindowMasker: window-based masker for sequenced genomes. Bioinformatics 22, 134–141 (2006).

Chen, N. Using RepeatMasker to identify repetitive elements in genomic sequences. Curr. Protoc. Bioinformatics 5, 4.10.1–4.10.14 (2004).

Holst, F. et al. Helixer: ab initio prediction of primary eukaryotic gene models combining deep learning and a hidden Markov model. Nat. Methods https://doi.org/10.1038/s41592-025-02939-1 (2025).

Stiehler, F. et al. Helixer: cross-species gene annotation of large eukaryotic genomes using deep learning. Bioinformatics 36, 5291–5298 (2021).

Gabriel, L., Becker, F., Hoff, K. J. & Stanke, M. Tiberius: end-to-end deep learning with an HMM for gene prediction. Bioinformatics 40, btae685 (2024).

Hochreiter, S. & Schmidhuber, J. Long short-term memory. Neural Comput. 9, 1735–1780 (1997).

Sætre, G. P. & Saether, S. A. Ecology and genetics of speciation in Ficedula flycatchers. Mol. Ecol. 19, 1091–1106 (2010).

Parkin, I. A. et al. Transcriptome and methylome profiling reveals relics of genome dominance in the mesopolyploid Brassica oleracea. Genome Biol. 15, R77 (2014).

Chen, L., DeVries, A. L. & Cheng, C.-H. C. Evolution of antifreeze glycoprotein gene from a trypsinogen gene in Antarctic notothenioid fish. Proc. Natl Acad. Sci. USA 94, 3811–3816 (1997).

Vosseberg, J. et al. The emerging view on the origin and early evolution of eukaryotic cells. Nature 633, 295–305 (2024).

Zhou, Y. et al. Gene fusion as an important mechanism to generate new genes in the genus Oryza. Genome Biol. 23, 130 (2022).

Bang, M.-L. et al. The complete gene sequence of titin, expression of an unusual ≈700-kDa titin isoform, and its interaction with obscurin identify a novel Z-line to I-band linking system. Circ. Res. 89, 1065–1072 (2001).

Vaswani, A. et al. Attention is all you need. In 31st Conference on Neural Information Processing Systems (NIPS 2017) https://proceedings.neurips.cc/paper_files/paper/2017/file/3f5ee243547dee91fbd053c1c4a845aa-Paper.pdf (2017).

Viterbi, A. Error bounds for convolutional codes and an asymptotically optimum decoding algorithm. IEEE Trans. Inf. Theory 13, 260–269 (1967).

Jaganathan, K. et al. Predicting splicing from primary sequence with deep learning. Cell 176, 535–548.e24 (2019).

Zoph, B. et al. ST-MoE: designing stable and transferable sparse expert models. Preprint at https://arxiv.org/abs/2202.08906 (2022).

Dalla-Torre, H. et al. Nucleotide Transformer: building and evaluating robust foundation models for human genomics. Nat. Methods 22, 287–297 (2025).

Brixi, G. et al. Genome modeling and design across all domains of life with Evo 2. Preprint at bioRxiv https://doi.org/10.1101/2025.02.18.638918 (2025).

Nguyen, E. et al. Sequence modeling and design from molecular to genome scale with Evo. Science 386, eado9336 (2024).

Harrison, P. W. et al. Ensembl 2024. Nucleic Acids Res. 52, D891–D899 (2024).

Wang, S. et al. De novo and somatic structural variant discovery with SVision-pro. Nat. Biotechnol. 43, 181–185 (2025).

Lin, J. et al. SVision: a deep learning approach to resolve complex structural variants. Nat. Methods 19, 1230–1233 (2022).

de Klerk, E. & ’t Hoen, P. A. C. Alternative mRNA transcription, processing, and translation: insights from RNA sequencing. Trends Genet. 31, 128–139 (2015).

Xia, Z. et al. Dynamic analyses of alternative polyadenylation from RNA-seq reveal a 3′-UTR landscape across seven tumour types. Nat. Commun. 5, 5274 (2014).

Zhang, R. X. et al. A high-resolution single-molecule sequencing-based Arabidopsis transcriptome using novel methods of Iso-seq analysis. Genome Biol. 23, 149 (2022).

Seppey, M., Manni, M. & Zdobnov, E. M. BUSCO: assessing genome assembly and annotation completeness. In Gene Prediction: Methods and Protocols (ed. Kollmar, M.) 227–245 (Springer, 2019).

Lin, T. Y., Goyal, P., Girshick, R., He, K. M. & Dollár, P. Focal loss for dense object detection. In Proc. IEEE International Conference on Computer Vision (eds Ikeuchi, K. et al.) 2999–3007 (IEEE, 2017).

Li, X. Y. et al. Dice loss for data-imbalanced NLP tasks. In Proc. 58th Annual Meeting of the Association for Computational Linguistics (eds Jurafsky, D. et al.) 465–476 (Association for Computational Linguistics, 2020).

He, K., Zhang, X., Ren, S. & Sun, J. Deep residual learning for image recognition. In Proc. IEEE Conference on Computer Vision and Pattern Recognition (eds Bajcsy, R. et al.) 770–778 (IEEE, 2016).

Fedus, W., Zoph, B. & Shazeer, N. Switch transformers: scaling to trillion parameter models with simple and efficient sparsity. J. Mach. Learn. Res. 23, 1–39 (2022).

Acknowledgements

We thank the Vertebrate Genomes Project and Darwin Tree of Life for helpful comments. K.Y. is supported by National Key R&D Program of China (grant no. 2022YFC3400300) and National Natural Science Foundation of China (grant nos. 32125009 and 32430017). S.W. is supported by National Natural Science Foundation of China (grant no. 323B2015). X.Y. is supported by the National Natural Science Foundation of China (grant nos. 32422019 and 62172325), the Natural Science Foundation of Shaanxi Province (grant no. 2024JC-JCQN-28) and the Fundamental Research Funds for the Central Universities (grant no. xzy012024088). P.J. is supported by the National Natural Science Foundation of China (grant no. 32400509). J.L. is supported by the National Natural Science Foundation of China (grant no. 62302386). D.M. is supported by the National Natural Science Foundation of China (grant no. 12426105) and the Major Key Project of Pengcheng Laboratory (grant no. PCL2024A06).

Author information

Contributions

K.Y. designed and supervised the research. P.Z. developed the ANNEVO algorithm and performed the performance evaluation. T.X. and S.W. contributed to the data processing. X.Y. and B.W. contributed to AUGUSTUS training and prediction. P.J., P.S. and Y.Z. contributed to the RNA-seq analysis. J.L. contributed to the supplementary analysis of ANNEVO. D.M. contributed to the model ablation and fine-tuning. Z.N. contributed to the evaluation metrics. P.Z., S.J.B., Z.N. and K.Y. wrote the paper with input from all other authors. All authors read and approved the final manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Methods thanks Michael Hiller and the other, anonymous, reviewer(s) for their contribution to the peer review of this work. Primary Handling Editor: Lin Tang, in collaboration with the Nature Methods team.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Extended data

Extended Data Fig. 1 Detailed model architecture of ANNEVO’s neural network component.

a, Distal Information Modeling Module. This module extracts local sequence patterns using five consecutive ConvBlocks and learns long-range dependencies through positional encoding and Transformer encoder layers (C = 64, H = 8, D = 768). b, Joint Evolutionary Modeling Module. This module consists of eight sub-lineage networks (EvoExperts) and a relationship computation network. The sub-lineage networks capture diverse evolutionary relationships, whereas the relationship computation network models the affinity between the input sequence and each EvoExpert (C = 64, D = 768). c, Resolution Restorer Module. This module acts as the inverse of the ConvBlocks: it transforms the 320-channel feature vectors (expanded from the original 64 channels by the ConvBlocks) back to nucleotide-level resolution, reversing the channel expansion and restoring the spatial detail needed for gene annotation. d, Detailed architecture of the network blocks. The ConvBlocks progressively expand the convolutional channels from C to 5C, so that after five ConvBlocks the features reach 5C = 320 channels. Each ConvBlock merges information from two adjacent positions, so that after five blocks every 32 nucleotides (2^5) are embedded into a single feature vector. The encoder uses the classical six-layer Transformer encoder structure to minimize parameter tuning, with H = 8 attention heads and a hidden-layer dimension of D = 768. Each EvoExpert consists of two simple linear layers, designed to preserve the distinct characteristics of different sub-clades, that map the feature vector from dimension 320 to 768 and then back to 320. The relationship computation network uses a single linear layer to map the feature vector from dimension 320 to 8, corresponding to the number of expert networks.
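To make the tensor shapes in this caption concrete, the following is a minimal NumPy sketch of the ConvBlock → EvoExpert mixture → Resolution Restorer path. It is not ANNEVO's code. The weights are random stand-ins, each ConvBlock is modeled as a kernel-2, stride-2 convolution with ReLU, and the channel schedule C, 2C, ..., 5C is an assumed linear ramp. The gating is a dense softmax over sequence-averaged features, whereas ANNEVO may route differently. The Transformer encoder is omitted.

```python
import numpy as np

C, D, N_EXPERTS, N_BLOCKS = 64, 768, 8, 5  # values quoted in the caption
rng = np.random.default_rng(0)


def linear(d_in, d_out):
    """Random weights standing in for trained parameters (hypothetical)."""
    return rng.normal(0.0, 1.0 / np.sqrt(d_in), size=(d_in, d_out)), np.zeros(d_out)


def relu(x):
    return np.maximum(x, 0.0)


def conv_block(x, params):
    """Kernel-2, stride-2 convolution: merge each pair of adjacent positions."""
    w, b = params
    length, c_in = x.shape
    return relu(x.reshape(length // 2, 2 * c_in) @ w + b)


def up_block(x, params):
    """Inverse step: expand every position back into two (transposed conv)."""
    w, b = params
    return relu(x @ w + b).reshape(2 * x.shape[0], w.shape[1] // 2)


def softmax(z):
    e = np.exp(z - z.max())
    return e / e.sum()


# Assumed channel schedule: 4 (one-hot) -> C, 2C, 3C, 4C, 5C = 320.
widths = [4] + [C * (i + 1) for i in range(N_BLOCKS)]
down = [linear(2 * widths[i], widths[i + 1]) for i in range(N_BLOCKS)]
# Restorer mirrors the ConvBlocks: 320 -> 256 -> 192 -> 128 -> 64 -> 64 channels.
up = [linear(widths[i + 1], 2 * max(widths[i], C)) for i in reversed(range(N_BLOCKS))]
# Eight EvoExperts (320 -> 768 -> 320) and the relationship network (320 -> 8).
experts = [(linear(5 * C, D), linear(D, 5 * C)) for _ in range(N_EXPERTS)]
gate = linear(5 * C, N_EXPERTS)


def evo_moe(h):
    """Soft mixture of EvoExperts weighted by sequence-level affinities."""
    w_g, b_g = gate
    affinity = softmax(h.mean(axis=0) @ w_g + b_g)  # one weight per expert
    out = np.zeros_like(h)
    for k, ((w1, b1), (w2, b2)) in enumerate(experts):
        out += affinity[k] * (relu(h @ w1 + b1) @ w2 + b2)
    return out, affinity


def forward(seq):
    """Sequence length must be a multiple of 32 in this sketch."""
    x = np.eye(4)[["ACGT".index(base) for base in seq]]  # (L, 4) one-hot
    for params in down:
        x = conv_block(x, params)  # length halves, channels grow
    tokens = x  # (L/32, 320); the Transformer encoder would act here (omitted)
    h, affinity = evo_moe(tokens)
    for params in up:
        h = up_block(h, params)  # length doubles, channels shrink
    return tokens, affinity, h


tokens, affinity, restored = forward("ACGT" * 256)
print(tokens.shape, affinity.shape, restored.shape)  # (32, 320) (8,) (1024, 64)
```

The shape trace shows the main design point. Five halvings turn a 1,024-nt window into 32 tokens, so the attention and expert layers run on a sequence 32 times shorter than the input before the restorer returns to per-nucleotide features.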

Extended Data Fig. 2 Overview of the gene structure decoding component in ANNEVO.

a, Predefined primary gene structure states, defined on the basis of the typical structure of eukaryotic genes. Each arrow represents a possible state transition, and the adjacent nucleotides specify the sequence composition required for that transition. Gene structure decoding uses the Viterbi algorithm, leveraging the prediction probabilities from the deep learning model to determine the most likely sequence of states. b, Intron state groups. The intron states account for three primary splicing patterns (GC-AG, GT-AG and AT-AC), which are incorporated into the decoding process and considered during gene structure prediction. Importantly, an exit from an intron state to a CDS state does not return to the original CDS phase; instead, it transitions to the next CDS phase. For example, if the model enters an intron state from CDS0, it will exit to CDS1.
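The following is a simplified Viterbi decoder in the spirit of panels a and b. It is a sketch under stated assumptions, not ANNEVO's decoder. The network is assumed to emit three per-nucleotide classes (intergenic, CDS, intron), and transitions are unweighted. Start and stop codon checks are omitted, and only the plus strand is handled. Each intron state remembers the CDS phase it was entered from and its splicing pattern, so that it can exit only to the next phase after the matching acceptor dinucleotide.

```python
import numpy as np

# Hypothetical output classes of the network for each nucleotide.
IG, CDS, INTRON = 0, 1, 2
# Donor/acceptor dinucleotides for the three splicing patterns in panel b.
SPLICE = {"GT-AG": ("GT", "AG"), "GC-AG": ("GC", "AG"), "AT-AC": ("AT", "AC")}
# Intron states remember the phase they were entered from and their pattern.
STATES = ([("IG",)] + [("CDS", k) for k in range(3)]
          + [("INTRON", k, p) for k in range(3) for p in SPLICE])
LABEL = {s: IG if s[0] == "IG" else CDS if s[0] == "CDS" else INTRON for s in STATES}


def allowed(prev, cur, seq, t):
    """Whether moving from state `prev` (at t-1) to `cur` (at t) is legal."""
    if prev == cur and prev[0] != "CDS":
        return True  # stay in intergenic or in the same intron
    if prev == ("IG",):
        return cur == ("CDS", 0)  # gene start (start-codon check omitted)
    if prev[0] == "CDS":
        if cur == ("IG",):
            return prev[1] == 2  # gene ends only after a complete codon
        if cur[0] == "CDS":
            return cur[1] == (prev[1] + 1) % 3  # next codon position
        # Enter an intron: keep the phase, require the pattern's donor site.
        return cur[1] == prev[1] and seq[t:t + 2] == SPLICE[cur[2]][0]
    # prev is an intron: exit to the NEXT CDS phase after its acceptor site.
    return (cur[0] == "CDS" and cur[1] == (prev[1] + 1) % 3
            and seq[t - 2:t] == SPLICE[prev[2]][1])


def viterbi(seq, probs):
    """Most probable legal state path given per-base class probabilities (L, 3)."""
    n, m = len(seq), len(STATES)
    log_p = np.log(np.clip(probs, 1e-12, 1.0))
    score = np.full((n, m), -np.inf)
    back = np.zeros((n, m), dtype=int)
    score[0, 0] = log_p[0, IG]  # parses start in the intergenic state
    for t in range(1, n):
        for j, cur in enumerate(STATES):
            for i, prev in enumerate(STATES):
                if np.isfinite(score[t - 1, i]) and allowed(prev, cur, seq, t):
                    cand = score[t - 1, i] + log_p[t, LABEL[cur]]
                    if cand > score[t, j]:
                        score[t, j], back[t, j] = cand, i
    if not np.isfinite(score[-1, 0]):
        raise ValueError("no legal parse ending in the intergenic state")
    path = [0]  # parses also end in the intergenic state
    for t in range(n - 1, 0, -1):
        path.append(back[t, path[-1]])
    return [STATES[i] for i in reversed(path)]


# Toy example: 4-nt exon, GT...AG intron, 5-nt exon (9 coding bases in total).
seq = "CCC" + "ATGG" + "GTCCCCAG" + "CCTAA" + "CCC"
labels = [IG] * 3 + [CDS] * 4 + [INTRON] * 8 + [CDS] * 5 + [IG] * 3
probs = np.full((len(seq), 3), 0.05)
probs[np.arange(len(seq)), labels] = 0.9
path = viterbi(seq, probs)
print(path[6], path[7], path[15])  # ('CDS', 0) ('INTRON', 0, 'GT-AG') ('CDS', 1)
```

Because the intron state carries the entry phase, the decoder enforces a reading frame that stays continuous across the intron. The gene can end only when the total coding length is a multiple of three.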

Extended Data Fig. 3 Nucleotide-level performance comparison with evidence-assisted annotation pipelines across 12 model species.

ANNEVO achieved the highest mean F1 score (0.92), driven by its superior recall (0.922), indicating more complete identification of coding regions. BRAKER3 showed slightly lower precision than ANNEVO (0.906 vs. 0.919) but substantially lower recall (0.805 vs. 0.922). ANNEVO’s 7% absolute improvement in F1 over BRAKER3 reflects a better balance between precision and recall, and ANNEVO surpasses the evidence-dependent methods despite relying solely on genomic sequence as input.
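As a quick consistency check on these numbers, nucleotide-level metrics can be computed from per-base CDS masks, and F1 is the harmonic mean of precision and recall. This is a minimal sketch; the exact counting rules used in the paper are described in its Supplementary Information. The caption reports values averaged over species, so applying F1 to the averaged precision and recall only approximates the mean F1.

```python
def f1(precision, recall):
    """Harmonic mean of precision and recall."""
    total = precision + recall
    return 2 * precision * recall / total if total else 0.0


def nucleotide_prf(reference, predicted):
    """Precision, recall and F1 over per-base CDS masks (True = coding)."""
    pairs = list(zip(reference, predicted))
    tp = sum(1 for r, p in pairs if r and p)
    fp = sum(1 for r, p in pairs if p and not r)
    fn = sum(1 for r, p in pairs if r and not p)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall, f1(precision, recall)


# Plugging in the mean precision and recall quoted in the caption:
print(round(f1(0.919, 0.922), 3), round(f1(0.906, 0.805), 3))  # 0.92 0.853
```

The difference of about 0.068 is consistent with the roughly 7% absolute gain stated above. It also shows that the gap comes mainly from recall, because the two precisions differ by only about 0.013.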

Extended Data Fig. 4 Gene-level performance comparison with evidence-assisted annotation pipelines across 12 model species.

ANNEVO achieved the best performance in most species. Consistent with its nucleotide-level performance, ANNEVO recovered the most complete gene structures (highest recall). Across all 12 model species, ANNEVO achieves a 4% absolute improvement in F1 score over BRAKER3 and an 11% absolute improvement over the deep learning baseline Helixer.
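Gene-level scores are stricter than nucleotide-level ones because a single wrong boundary invalidates the whole gene model. The sketch below assumes an exact-match criterion, under which a prediction counts only if its sequence, strand and full set of CDS intervals equal those of a reference gene. This criterion is an illustrative assumption, and the paper's precise definition is given in its Supplementary Information. The gene models are hypothetical toy data.

```python
from collections import Counter


def gene_key(gene):
    """Normalize (seqid, strand, CDS intervals) into a hashable structure."""
    seqid, strand, cds = gene
    return seqid, strand, tuple(sorted(cds))


def gene_level_prf(reference, predicted):
    """Gene-level precision, recall and F1 with exact CDS-structure matching."""
    ref = Counter(gene_key(g) for g in reference)
    pred = Counter(gene_key(g) for g in predicted)
    tp = sum((ref & pred).values())  # each reference gene can be matched once
    precision = tp / sum(pred.values()) if pred else 0.0
    recall = tp / sum(ref.values()) if ref else 0.0
    f1 = 2 * precision * recall / (precision + recall) if tp else 0.0
    return precision, recall, f1


# Hypothetical toy gene models; coordinates are 0-based, half-open.
reference = [("chr1", "+", [(100, 200), (300, 400)]), ("chr1", "-", [(1000, 1100)])]
predicted = [
    ("chr1", "+", [(300, 400), (100, 200)]),  # exact match (order is irrelevant)
    ("chr1", "-", [(1000, 1090)]),            # one boundary off: not counted
    ("chr2", "+", [(5, 50)]),                 # no reference counterpart
]
print(gene_level_prf(reference, predicted))
```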

Extended Data Fig. 5 Benchmarking against evidence-assisted annotation pipelines and deep learning methods.

a, Average performance across six vertebrate model species. This panel restricts the comparison to vertebrates, in line with Tiberius’s stated scope. ANNEVO consistently and substantially outperforms all other methods in this comparison. Tiberius shows notably lower average performance, primarily because of a sharp decline in its performance on the Vertebrate_other clade (detailed in Extended Data Figs. 3 and 4). b, Performance comparison between ANNEVO and Tiberius on all 43 test mammalian species. ANNEVO performs better on most test mammalian species, with NT(CDS)-F1, gene-F1 and BUSCO scores that are on average 5.9%, 1.0% and 3.5% higher than those of Tiberius, respectively. The boxplot elements are defined as described in the Fig. 2b legend. c, Comparison of prediction tendencies between ANNEVO and Tiberius on all 43 test mammalian species. ANNEVO tends to recover more gene models, whereas Tiberius predicts more conservatively. The boxplot elements are defined as described in the Fig. 2b legend. d, Runtime comparison of deep learning-based gene annotation methods in GPU and CPU-only environments, with BRAKER3 as the baseline. ANNEVO is substantially faster than all other methods in every setting. Because of its extreme resource demands, Tiberius could not be executed on a GPU with 32 GB of memory; GPU-based evaluations were therefore conducted on four vertebrate model species, whereas CPU-only evaluations also included mammalian model species.
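Two kinds of summary appear in this caption. The first is an average per-species difference between paired methods (panel b), and the second is a runtime expressed relative to a baseline method (panel d). The sketch below shows both computations. It assumes the panel b averages are absolute differences over the species scored by both methods. All species names, scores and runtimes in it are hypothetical toy values, not results from the paper.

```python
def mean_paired_difference(scores_a, scores_b):
    """Mean of per-species differences (a - b) over species scored by both."""
    shared = sorted(set(scores_a) & set(scores_b))
    if not shared:
        raise ValueError("no species in common")
    return sum(scores_a[s] - scores_b[s] for s in shared) / len(shared)


def relative_runtime(runtimes, baseline="BRAKER3"):
    """Express each method's runtime as a multiple of the baseline's runtime."""
    base = runtimes[baseline]
    return {method: t / base for method, t in runtimes.items()}


# Toy values for illustration only (not results from the paper).
gene_f1_a = {"species_1": 0.90, "species_2": 0.80, "species_3": 0.70}
gene_f1_b = {"species_1": 0.85, "species_2": 0.80, "species_3": 0.72}
print(round(mean_paired_difference(gene_f1_a, gene_f1_b), 3))  # 0.01
print(relative_runtime({"BRAKER3": 120.0, "method_x": 30.0}))
```

Pairing by species prevents differences in genome difficulty from masking the method effect. This matters because per-species scores vary far more than the gap between the two methods.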

Extended Data Fig. 6 Examples of ANNEVO correcting erroneous gene annotations in Ensembl.

a, Correction of a missed gene. Ensembl failed to annotate a conserved BUSCO gene in this region; ANNEVO recovered this gene, fully supported by RNA-seq evidence. b, Correction of a premature stop codon. An erroneous splice site in the Ensembl annotation introduced a premature stop codon, resulting in the loss of four downstream exons; ANNEVO recovered the complete gene model, fully supported by RNA-seq evidence. c, Correction of an incorrect start codon. Ensembl missed an upstream exon, leading to an incorrect initiation site; ANNEVO recovered the complete gene model, fully supported by RNA-seq evidence.
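Panel b works as follows: shifting a splice site by a base or two changes the reading frame of every downstream exon, and this can expose an in-frame stop codon. The minimal sketch below splices exons and scans for a stop codon before the final codon. It assumes plus-strand genes, 0-based half-open coordinates and a hypothetical toy locus. It is not the procedure used to produce the figure.

```python
STOP_CODONS = {"TAA", "TAG", "TGA"}


def spliced_cds(genome, exons):
    """Join CDS exons (0-based, half-open, plus strand only) in genomic order."""
    return "".join(genome[start:end] for start, end in sorted(exons))


def first_premature_stop(cds):
    """Index of the first in-frame stop codon before the final codon, else None."""
    codons = [cds[i:i + 3] for i in range(0, len(cds) - 2, 3)]
    for index, codon in enumerate(codons[:-1]):
        if codon in STOP_CODONS:
            return index
    return None


# Hypothetical toy locus: exon 1, a GT...AG intron, then exon 2.
genome = "ATGAAA" + "GTAAGTTTTCAG" + "CTAGGGTAA"
correct = [(0, 6), (18, 27)]  # acceptor directly after the intron's AG
shifted = [(0, 6), (19, 27)]  # acceptor misplaced by one base
print(first_premature_stop(spliced_cds(genome, correct)))  # None
print(first_premature_stop(spliced_cds(genome, shifted)))  # 2 (TAG)
```

In the correct model the TAG inside exon 2 lies out of frame (CTA GGG), so it is harmless. A one-base shift of the acceptor brings it into frame and truncates the protein.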

Extended Data Fig. 7 Analysis of ANNEVO’s performance.

a, Ablation analysis of ANNEVO’s performance. Performance decreases when either the MoE module or the resolution restorer module is removed from ANNEVO, with the MoE module contributing most to overall accuracy. b, Ablation analysis of ANNEVO’s efficiency. Removing ANNEVO’s distal information modeling module, even when it is replaced with a pure CNN-based architecture, results in a 2.5-fold increase in training cost and an 8.8-fold decrease in training speed. c, Effects of different fine-tuning strategies on performance, with the no-MoE version as the baseline. Partial fine-tuning of specific modules led to consistent performance improvements in both model organisms and species with previously lower BUSCO scores. d, Performance of ANNEVO when predictions are matched only against the longest transcript versus against any transcript isoform. Matching against any isoform yields a modest improvement, suggesting that some ANNEVO predictions correspond to alternative splice variants; nevertheless, the predictions remain largely consistent with the longest transcript structures. e, Analysis of ANNEVO’s false-positive predictions. Most false positives were identified as fragmented mRNAs or known non-coding transcripts, suggesting that these predictions may still be biologically relevant rather than merely spurious.
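Panel d compares two matching targets. To illustrate how that choice changes the count of matched predictions, the sketch below assumes an exact CDS-structure match. It picks the "longest" isoform by total CDS length, which is an assumption. For brevity it ignores sequence IDs and strands, and its gene IDs and intervals are hypothetical toy data.

```python
def cds_length(isoform):
    """Total CDS length of an isoform given as (start, end) intervals."""
    return sum(end - start for start, end in isoform)


def count_matches(predictions, reference_genes, mode="any"):
    """Count predicted CDS structures that exactly match a reference isoform.

    reference_genes maps a gene ID to its isoforms; mode="longest" keeps only
    the isoform with the longest CDS per gene, mode="any" keeps them all."""
    targets = set()
    for isoforms in reference_genes.values():
        chosen = [max(isoforms, key=cds_length)] if mode == "longest" else isoforms
        targets.update(tuple(sorted(iso)) for iso in chosen)
    return sum(tuple(sorted(p)) in targets for p in predictions)


# Hypothetical toy data: gene_a has two isoforms differing in their second exon.
reference_genes = {
    "gene_a": [[(0, 100), (200, 300)], [(0, 100), (250, 300)]],
    "gene_b": [[(1000, 1300)]],
}
predictions = [[(0, 100), (250, 300)], [(1000, 1300)]]
print(count_matches(predictions, reference_genes, "longest"))  # 1
print(count_matches(predictions, reference_genes, "any"))  # 2
```

The prediction for gene_a reproduces a shorter annotated isoform. It counts as correct only under the "any" criterion, which is the kind of alternative-isoform match that accounts for the modest gain reported in panel d.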

Supplementary information

Supplementary Information

Supplementary Methods 1–3, Notes 1–17, Figs. 1–6 and Refs. 1–17.

Supplementary Data 1

ANNEVO’s accurate prediction of 12 genes exceeding 400 kb in length.

Supplementary Data 2

Additional BUSCO genes fully supported by RNA-seq.

Supplementary Data 3

Additional genes near BUSCO genes fully supported by RNA-seq.

Supplementary Tables 1–36

Detailed results and supplementary results.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Zhang, P., Xu, T., Wang, S. et al. Highly accurate ab initio gene annotation with ANNEVO. Nat Methods (2026). https://doi.org/10.1038/s41592-026-03036-7

DOI: https://doi.org/10.1038/s41592-026-03036-7