Abstract

Engineering design optimization problems present significant challenges due to the complexity of objective functions, which often involve both continuous and discrete design variables, along with multiple constraints. The Grey Wolf Optimizer (GWO) and Particle Swarm Optimization (PSO) are well-known heuristic algorithms with efficient search ability and reasonable execution time. They are often used to solve complex optimization problems, but they still suffer from premature convergence and limited global search efficiency. To overcome these limitations and enhance solution quality, this study proposes a novel Hybrid Grey Wolf-Particle Swarm Optimization (HGWPSO) algorithm. HGWPSO integrates the exploration ability of GWO with the rapid convergence and exploitation efficiency of PSO. The algorithm’s performance is first validated using CEC_2022 benchmark functions and then applied to eight complex engineering design problems: pressure vessel design, compression spring design, three-bar truss design, gear train design, cantilever beam design, welded beam design, transmission line parameter estimation, and reactive power planning. The improvement in the average best optimal value varies across cases. In Case 1, the improvement is 71.61%, while Cases 2 and 4 each obtain a 99% improvement. Case 3 shows an enhancement of 53.46% and Case 5 reaches 71.92%, whereas Case 6 also obtains 99%. In Case 7, the improvement depends on the bundle configuration, with two-bundle conductors showing 76.91%, three-bundle conductors 43.94%, and four-bundle conductors 46.57%. Finally, in Case 8, the improvement is 1.02%. The results demonstrate that HGWPSO achieves better performance than other methods in terms of convergence rate, cost function minimization, and constraint handling. This study highlights the effectiveness of HGWPSO as a tool for solving complex engineering design problems.


Introduction

Optimization problems involve finding the best solution within specific constraints. As technology advances, these problems have become more complex, often involving multiple constraints, mixed variable types (continuous and discrete), and high-dimensional search spaces. Traditional optimization methods, such as gradient descent and Newton’s method, face limitations that depend on the type of optimization problem. For example, multi-constraint problems require trade-offs between conflicting requirements, and mixed-variable optimization adds complexity because different variable types need specific handling. Gradient-based methods struggle with highly multimodal functions, while Newton’s method is inefficient in high-dimensional problems, and in some cases both may fail to provide the best solutions1.

Considering the increasing complexity of optimization problems, researchers have extensively analyzed and developed different optimization methods to address multi-constraint, multi-variable, and high-dimensional search space problems2. As a result, different optimization methods have been proposed, leading to significant advancements. Among these, swarm-based optimization techniques have attracted significant attention due to their ability to search complex spaces efficiently and to reduce both slow convergence and the risk of premature convergence to local optima3,4. These algorithms mimic collective behavior found in nature, with individuals sharing information to coordinate the group toward the optimization goal. Inspired by natural phenomena and biological evolution, researchers have developed simple, easy-to-implement optimization algorithms to address complex problems5. They include GWO6, PSO7, MFO8, WOA9, SCA10, ALO11, COA12, and GJO13. GWO and PSO have gained considerable interest among researchers due to their simple structure, robust adaptability, and few control parameters. These algorithms have been effectively utilized in various complex optimization problems, including optimal power flow14, economic dispatch problems15, feature selection16, and power transmission lines17,18. However, GWO suffers from premature convergence, especially in complex and high-dimensional environments, where subsequent iterations can generate less-than-ideal solutions due to a lack of diversity. Similarly, PSO can easily become trapped in local optima, particularly on highly multimodal functions, because it explores the search space inefficiently. Furthermore, PSO depends on the tuning of several parameters, including the inertia weight and acceleration coefficients.
To overcome these limitations, researchers focus on developing new optimization algorithms or enhancing existing methods. One common approach to improvement is hybridization, in which two or more algorithms are combined to exploit their strengths while minimizing their individual drawbacks. A hybrid algorithm is characterized by its extensive applicability and robust performance, enabling it to effectively solve a wide range of complex optimization problems19. Accordingly, different techniques have been developed to improve the performance of GWO and PSO and to address their limitations. For GWO, improvements have been introduced through binary GWO20, parallelized GWO21, hybrid GWODE22, hybrid GAGWO23, and GWO with FOX24. Similarly, PSO has been improved through the combinations PSODE25, PSOGA26, and PSOACO27, which improve the balance between exploration and exploitation. Furthermore, to obtain better efficiency, GWO and PSO have been further hybridized with other algorithms, giving HMFOPSO28, HPSOALO29, HGWOWOA30, and HGWOSCA31. These hybrid algorithms combine the strengths of different techniques to improve convergence speed and global search capability.

As society and technology continue to progress, the number of optimization problems that need addressing is growing, along with their complexity: many are multifactorial, large-scale, and hard to solve. Despite significant advancements in optimization techniques, existing algorithms still struggle with slow computation and inadequate performance on such problems32,33. In response to these challenges, HGWPSO is proposed to address the limitations of existing algorithms by integrating GWO and PSO. The motivation behind HGWPSO is to balance exploration and exploitation efficiently. GWO has a strong exploration mechanism, which ensures effective coverage of the search space and helps avoid premature convergence. However, its exploitation capability is weak, so it converges slowly during the later stages of optimization. PSO, on the other hand, has better exploitation capability and refines its solution quickly once a promising region of the search space is identified. However, PSO is prone to becoming trapped in local optima because of its limited exploration ability. By combining the exploration capability of GWO with the exploitation capability of PSO, HGWPSO uses GWO to maintain diversity during the search and PSO to enhance convergence speed and solution quality. This combination results in an efficient optimization algorithm able to solve complex engineering problems and makes the proposed method a suitable choice for real-world optimization problems. The fundamental motivations and novel contributions of HGWPSO are as follows.

-

Improved exploration and exploitation: The proposed algorithm combines the exploratory properties of GWO with the enhanced convergence ability of PSO to provide an effective search process. This combination allows the algorithm to explore a large search space efficiently and to prevent premature convergence. Unlike most hybrid techniques, HGWPSO uses an adaptive parameter regulation strategy that allows the algorithm to change its behavior dynamically based on the complexity of the problem. The adaptive weight mechanism dynamically modifies the impact of GWO and PSO, improving the balance between exploration and exploitation, which allows HGWPSO to adapt to complex problems.

-

Improved constraint handling: HGWPSO utilizes dynamic penalty functions to manage constraints efficiently. These penalty functions modify the fitness values of candidate solutions that violate problem constraints. The penalty is imposed during the search and scaled according to the level of constraint violation, which improves the search for feasible solutions. This approach enables the algorithm to maintain diversity within the search space while ensuring convergence towards feasible and optimal solutions across different problems.

-

Validation on CEC_2022 benchmark functions and eight complex engineering problems: The efficiency of HGWPSO is initially verified on the CEC_2022 functions, which provide a range of complex and high-dimensional test functions. These benchmark functions verify the algorithm’s performance in terms of convergence. Additionally, HGWPSO is further verified on eight real-world engineering design problems. These problems have different constraints and high-dimensional solution spaces, providing an extensive evaluation of the proposed algorithm across different problems.

-

Algorithm performance: The performance of HGWPSO is compared with other competitive hybrid algorithms, where HGWPSO obtained top rankings, demonstrating its superior performance.

Additionally, Fig. 1 shows the graphical abstract demonstrating the fundamental concepts and methodology of the proposed work.

Graphical abstract of the proposed work.

Related work

In engineering, optimization techniques serve as versatile and robust frameworks34 to address a wide range of real-world problems in different domains, such as data science, industrial engineering, civil engineering, and mechanical design. These developments have significantly improved linear programming, quadratic programming, and dynamic programming, enabling effective solutions for complex problems in various fields, including engineering and data sciences35,36.

In37, the author proposed a modified QDE algorithm, introducing new strategies to enhance search efficiency and maintain population diversity. Comparative tests on unimodal and multimodal functions showed improved optimization, scalability, and stability over other algorithms. In38, WOASCALF is introduced as a hybrid algorithm combining the WOA and the SCA with Levy flight to solve benchmark functions and inverse kinematics problems. In39, the TO-PSO algorithm enhances traditional PSO through QRS for improved population initialization, resulting in better convergence and diversity on unimodal and multimodal functions and outperforming conventional algorithms across 15 benchmark problems. The WSOA algorithm in40 combines WOA and SOA to enhance computational accuracy and address premature convergence by utilizing WOA’s contraction mechanism, SOA’s spiral behavior, and a Levy flight strategy, demonstrating superior performance on 25 benchmark functions compared to seven metaheuristic algorithms. In41, MHCSA, a hybrid of CSA and PSO, improves memory representation and balances exploration and exploitation, achieving higher accuracy and stability in tests on 73 benchmark functions and seven engineering design problems. The MBFPA hybrid metaheuristic in42 combines MVO and FPA to enhance exploration and exploitation for faster convergence, confirming its superiority compared to other algorithms through evaluations on benchmark functions and classic engineering problems such as three-bar truss design, welded beam design, etc. Finally, the hybrid COASaDE Optimizer proposed by43 integrates COA with self-adaptive DE to effectively address complex optimization and engineering design challenges including pressure vessel design, speed reducer design, etc., with experimental evaluations on CEC2022 and CEC2017 benchmark functions. Despite their good performance, these optimization algorithms have limitations.
EMMSIQDE struggles with complexity in complex problems, WSOA has limited exploitation ability, and IBSSA faces challenges in high-dimensional scenarios. MHCSA also needs further validation across different optimization tasks.

Furthermore, the author of44 presents the IBSSA algorithm, which enhances the original sparrow search algorithm using dynamic population initialization and staged inertia weight guidance. This improvement leads to increased convergence accuracy on benchmark functions and engineering design problems, including welded beam design, compression spring design, etc. In45, another author presents a chaotic gravitational search algorithm specifically designed for solving mechanical engineering (welded beam, compression spring, and pressure vessel) design problems. In46, FSGWO is introduced to improve GWO performance in multimodal optimization problems by using the binary joint normal distribution for adaptive control parameter adjustments and incorporating fuzzy mutation and crossover operators. The effectiveness of FSGWO is validated through testing on 30 IEEE CEC2014 benchmark functions and five engineering application problems. In47, LSO, a novel metaheuristic algorithm inspired by light dispersion in the rain, is designed for continuous optimization problems. Its effectiveness is validated through experiments comparing LSO with established metaheuristics on CEC 2005, and several CEC competition benchmarks (CEC2014, CEC2017, CEC2020, and CEC2022) along with tension/compression spring design optimization problem, welded beam design problem, and pressure vessel design problem. In48, the SMA-GM algorithm is proposed to enhance diversity and local search using Gaussian mutation and an updated oscillatory parameter, improving performance on various benchmark tests, including unconstrained, constrained, and CEC2022 functions. The DGSWOA is proposed by49, which enhances the traditional WOA by improving global search efficiency and convergence rate. 
It uses a sine–tent–cosine map for better population initialization, a gaining-sharing strategy to avoid local optima, and dynamic opposition-based learning to increase solution diversity, and it is validated through experiments on optimization benchmarks and engineering applications. The IGWO algorithm aims to improve the convergence speed, solution accuracy, and ability to escape local minima of the traditional GWO, with evaluations performed on 23 benchmark test problems, CEC2014 test problems, and three‑bar truss and automobile side impact design problems50. A RIME Optimizer using random reselection and the Powell mechanism, along with a modified SMA, is proposed for engineering design problems51,52. Moreover, in53 and54, the authors propose an improved COATI algorithm, which is a hybrid of MBO and genetic operators, to enhance performance in engineering design problems. These algorithms demonstrate better performance in solving engineering problems; however, they have certain limitations. For instance, FSGWO requires verification for multi-population approaches, including solution exchange and search direction design. LSO could benefit from hybridization with other algorithms, and SMA-GM’s applicability to real-world problems remains to be verified. DGSWOA needs performance improvements for large-scale, high-dimensional optimization.

Advancements in machine learning and optimization algorithms have led an increasing number of researchers to explore the application of optimization algorithms for solving various real-world problems. According to the NFL theorem55, there is no single optimization algorithm that can consistently outperform all others across all problem types. While several methods developed by experts can enhance the optimization effectiveness of GWO and PSO, these algorithms still tend to get trapped in local optima and exhibit inadequate convergence accuracy when faced with more complex optimization challenges. Despite these improvements, there is still room for enhancing global search capability and convergence speed. To address the challenges associated with optimization problems, this paper presents the HGWPSO. The proposed HGWPSO aims to enhance the uniformity in the distribution of the initial population, which is crucial for improving the algorithm’s convergence speed and overall performance. The hybridization combines the strengths of GWO and PSO, with GWO imitating the social hierarchy and hunting strategies of grey wolves for exploration and exploitation, while PSO draws inspiration from the social behaviors of flocking birds and schooling fish, emphasizing collaboration and information sharing among particles. By integrating these two algorithms, HGWPSO utilizes the adaptive capabilities of PSO to ensure a more diverse initial population. This diversity is essential for exploring the solution space more thoroughly, ultimately leading to better optimization results. Additionally, the hybrid approach allows for improved convergence characteristics, as it can more effectively balance exploration and exploitation. To evaluate the performance of the HGWPSO, a comparative analysis is conducted using the CEC_2022 benchmark functions.

Motivation and incitement

The motivation for combining GWO with PSO is based on their complementary optimization skills. GWO is known to have better exploratory capabilities, as it uses the social structure and hunting behavior of grey wolves to search across the solution space56; on the other hand, its convergence can be slow and it can fall into local optima. GWO’s hierarchical leadership structure enhances global search efficiency, ensuring better coverage of the solution space in early iterations. PSO performs better in exploitation by guiding particles based on personal and global best locations, which leads to rapid convergence but carries the risk of premature convergence. The swarm cooperation in PSO enhances local optimization, making it effective in refining solutions in promising regions. HGWPSO obtains a balanced search mechanism by combining GWO’s exploration with PSO’s exploitation, improving solution accuracy and convergence speed. The adaptive parameter mechanism dynamically controls the balance between GWO’s global exploration and PSO’s local optimization, making the algorithm adaptable to the problem complexity and the stage of the optimization process. This combination is useful in solving engineering problems with complex objectives: it provides a diversified search in the early stages and effectively refines results in the later stages. GWO-PSO has been selected over other hybrids since PSO has already proved its effectiveness in solving complex problems and is capable of complementing GWO’s exploratory behavior, leading to a robust optimization framework for real-world engineering applications. Despite the increasing popularity of metaheuristic algorithms in optimization, it remains difficult to obtain a well-balanced trade-off between exploration and exploitation, especially for complex engineering problems.
Existing optimization techniques focus on specific domains but lack a comprehensive evaluation across different engineering problems. In this research work, different complex optimization problems have been analyzed, such as the design of welded beam, pressure vessel, three-bar truss, cantilever beam, speed reducer, and transmission line parameter estimation. However, existing literature lacks a comprehensive comparison of hybrid metaheuristic frameworks on such diverse problem sets. Particularly, the use of a hybrid GWO-PSO for different complex problems is not sufficiently explored. This work bridges the gap by exploring the adaptability of the hybrid model across various optimization challenges, demonstrating its robustness, efficiency, and superior performance compared to other algorithms.

Key innovations of the proposed work

The key innovations of this research work are as follows.

-

Adaptive inertia weight: HGWPSO uses an adaptive inertia weight update strategy, such as a linear update, to balance exploration and exploitation at different optimization stages.

-

Hybrid update strategies: The algorithm combines GWO’s hierarchical alpha (α), beta (β), and delta (δ) leadership structure with PSO’s personal and global best update mechanism to improve convergence behavior.

-

Comparison with other hybrid algorithms: HGWPSO is compared with other hybrid algorithms such as HMFOPSO, HPSOALO, HGWOWOA, and HGWOSCA to demonstrate improved convergence speed, solution accuracy, and stability on complex optimization problems.
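As an illustration of the first two items, the sketch below shows a linearly decreasing inertia weight together with the standard PSO velocity and position update it feeds into. It is a minimal sketch under stated assumptions, not the update rule of HGWPSO itself: the function names, the bounds `w_max = 0.9` and `w_min = 0.4`, and the coefficients `c1 = c2 = 2.0` are common textbook defaults chosen here for illustration, and how the α, β, and δ leaders enter the update is not specified in this section.

```python
import random


def linear_inertia_weight(t, t_max, w_max=0.9, w_min=0.4):
    """Decrease the inertia weight linearly from w_max (t = 0) to w_min (t = t_max).

    w_max and w_min are assumed defaults, not values taken from the paper.
    """
    return w_max - (w_max - w_min) * t / t_max


def pso_step(x, v, pbest, gbest, w, c1=2.0, c2=2.0, rng=random):
    """Apply one standard PSO update to a single particle.

    x, v, pbest and gbest are lists of floats of equal length.
    Returns the new position and the new velocity.
    """
    new_x, new_v = [], []
    for xi, vi, pi, gi in zip(x, v, pbest, gbest):
        r1, r2 = rng.random(), rng.random()
        # Inertia term + cognitive pull (personal best) + social pull (global best).
        vi_new = w * vi + c1 * r1 * (pi - xi) + c2 * r2 * (gi - xi)
        new_v.append(vi_new)
        new_x.append(xi + vi_new)
    return new_x, new_v
```

A large weight early in the run keeps particles moving across the search space, while a small weight late in the run lets the attraction terms dominate and refines the current best region.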

Structure of the paper

This paper is structured as follows: Section “Mathematical modeling of complex engineering design problem” presents the mathematical modeling, problem formulation, and constraints. Section “Proposed method” introduces the HGWPSO technique for optimization. Section “Experimental Result and analysis” discusses simulation results, while Section “Practical implications” explores practical implications. Finally, Section “conclusion, discussion, and future work” concludes the study and outlines future research directions.

Mathematical modeling of complex engineering design problem

Problem description

In this research work, the performance of the HGWPSO algorithm is evaluated on complex engineering design problems, including pressure vessel design, compression spring design, three-bar truss design, gear train design, cantilever beam design, and welded beam design. Effective handling of constraints is crucial for achieving feasible solutions, and various methods are used for this purpose, such as penalty functions, specialized operators, better computations, and hybrid techniques. The penalty function method is favored for its straightforward approach, converting constrained problems into unconstrained ones by applying penalties57. Let \(x\) denote the solution vector and \(f(x)\) the fitness function. The terms \({g}_{i}\left(x\right)\) and \({h}_{i}\left(x\right)\) denote the inequality and equality constraints, respectively, and \(x_{j}^{min}\) and \(x_{j}^{max}\) are the lower and upper limits of the \(j\)th design variable.

A penalty function modifies the objective function by adding terms that penalize constraint violations. The resulting transformed function is:

For inequality constraints, if \(g_{i} \left( x \right) \le 0\), no penalty is added; however, if \(g_{i} \left( x \right) > 0\), a penalty of \(\max\left( {0,g_{i} \left( x \right)} \right)\) is applied. For equality constraints, any deviation from zero (\(h_{i} \left( x \right) \ne 0\)) is penalized according to its magnitude \(\left| h_{i} \left( x \right) \right|\). The constrained problem above can be transformed into an unconstrained function \(F(x)\) using the penalty function method, as given below.

Assume that \(\lambda\) is the penalty factor for the inequality constraints, and \(\sigma\) is the tolerance value of the equality constraints.
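The transformation described above can be sketched in a few lines. This is one common static-penalty form, written under assumptions: a single factor `lam` (for \(\lambda\)) is applied to both constraint types, the violation enters linearly as \(\max(0, g_i(x))\), and an equality constraint counts as violated only when \(|h_i(x)|\) exceeds the tolerance `sigma` (for \(\sigma\)). The function name and default values are hypothetical, and the exact penalty used by HGWPSO may differ.

```python
def penalized_fitness(f, x, ineq=(), eq=(), lam=1e6, sigma=1e-4):
    """Return F(x) = f(x) + lam * (total constraint violation).

    f    : objective function taking the solution vector x
    ineq : callables g_i, feasible when g_i(x) <= 0
    eq   : callables h_i, feasible when |h_i(x)| <= sigma
    lam and sigma are illustrative defaults, not values from the paper.
    """
    violation = 0.0
    for g in ineq:
        violation += max(0.0, g(x))                # only positive g_i(x) is penalized
    for h in eq:
        violation += max(0.0, abs(h(x)) - sigma)   # deviation beyond the tolerance
    return f(x) + lam * violation
```

For a feasible candidate the violation is zero and \(F(x) = f(x)\), so the ranking of feasible solutions is unchanged; infeasible candidates are pushed back in the ranking in proportion to how far they violate the constraints.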

Optimizing pressure vessel design using HGWPSO

The pressure vessel design problem58 is a classical problem in mechanical and industrial engineering that seeks to minimize the overall production cost of the vessel while ensuring that its structural strength and operational safety are not compromised. Pressure vessels are used in many areas, such as the chemical, petrochemical, and power generation sectors, where fluids are stored and processed under high pressure. The design process optimizes four parameters: the shell thickness (Ts), the thickness of the enclosing head (Th), the inner shell radius (R), and the container length (L). These parameters have to be chosen carefully so that the cost targets are met without compromising the required levels of performance and safety. The mathematical model is presented as follows:

The mathematical model for the above problem using HGWPSO aims to minimize the total cost of the vessel while satisfying constraints related to material thickness, volume capacity, and maximum length. The optimization process involves adjusting the design variables Ts, Th, R, and L within their defined ranges to find the optimal solution that meets these criteria.
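For orientation, the sketch below encodes the formulation of this benchmark that is most widely used in the literature, with the design variables Ts, Th, R, and L named as above. The coefficients and the four inequality constraints (written in the form \(g_i \le 0\)) are the commonly cited ones and are an assumption here; they are not copied from the model of this paper, which may differ in detail. The function names are hypothetical.

```python
import math


def pressure_vessel_cost(ts, th, r, length):
    """Material, forming and welding cost (commonly cited benchmark form)."""
    return (0.6224 * ts * r * length
            + 1.7781 * th * r ** 2
            + 3.1661 * ts ** 2 * length
            + 19.84 * ts ** 2 * r)


def pressure_vessel_constraints(ts, th, r, length):
    """Return [g1, g2, g3, g4]; a design is feasible when every g_i <= 0."""
    return [
        -ts + 0.0193 * r,                          # minimum shell thickness
        -th + 0.00954 * r,                         # minimum head thickness
        -math.pi * r ** 2 * length
        - (4.0 / 3.0) * math.pi * r ** 3
        + 1_296_000.0,                             # minimum enclosed volume
        length - 240.0,                            # maximum length
    ]
```

These two functions can be passed directly to a penalty wrapper so that an unconstrained optimizer can search over (Ts, Th, R, L).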

Evaluating compression spring design using HGWPSO

The tension/compression spring design problem59,60,61 is another optimization problem from mechanical engineering, in which the spring’s geometric parameters are chosen so that its weight is minimized. The difficulty is to ensure that the spring not only meets its specifications but also satisfies design requirements on deflection, vibration frequency, and shear stress. This problem is critical for reliability, for example, in automobile suspension systems, aerospace, and industrial equipment, where the spring is required to bear variable forces without failure. With recent developments in computational tools and optimization methods, it is now possible to solve the tension/compression spring design problem more effectively and to provide lightweight solutions while satisfying the shear stress, vibration, and deflection limits for the spring. The design variables are the wire diameter (d), the mean coil diameter (D), and the number of active coils (N). The mathematical model is as follows.

In this problem, the mathematical model for evaluating compression spring design using HGWPSO is used to minimize the cost of the spring while satisfying constraints related to stress, deflection, spring index, and geometric relationships. The optimization process involves adjusting the design variables d, D, and N within their defined ranges to find the optimal solution.
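A minimal sketch of the commonly cited form of this benchmark is given below, using the variable names d, D, and N from the text. The objective and the four constraints (deflection, shear stress, surge frequency, and outer diameter, each written as \(g_i \le 0\)) follow the version usually quoted in the literature and are assumed here; the numerical constants are not taken from this paper.

```python
def spring_weight(d, D, N):
    """Spring weight to be minimized: (N + 2) * D * d^2."""
    return (N + 2.0) * D * d ** 2


def spring_constraints(d, D, N):
    """Return [g1, g2, g3, g4]; a design is feasible when every g_i <= 0."""
    return [
        1.0 - (D ** 3 * N) / (71785.0 * d ** 4),               # minimum deflection
        (4.0 * D ** 2 - d * D) / (12566.0 * (D * d ** 3 - d ** 4))
        + 1.0 / (5108.0 * d ** 2) - 1.0,                       # shear stress
        1.0 - 140.45 * d / (D ** 2 * N),                       # surge frequency
        (D + d) / 1.5 - 1.0,                                   # outer diameter
    ]
```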

Examining three-bar truss structure design using HGWPSO

The three-bar truss design problem62 is a structural design problem from civil and structural engineering that seeks a balance between the efficiency of the structure and the material it uses. The objective is to design the truss so that the overall weight of the structure is minimal while accounting for other significant features, such as load distribution and load interactions under dynamic loads. This design problem is important in applications that require light and strong members, for example, the design of bridges, towers, and load-bearing frames. The mathematical model for this problem is presented as follows.

The optimization of the three-bar truss structure design using the HGWPSO involves minimizing the cost function with design variables. The problem is subject to three stress-related constraints and bounded within the range.
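The usual benchmark form of this problem has two cross-sectional areas as design variables and three stress constraints, which matches the description above. The sketch below assumes that form, with bar length 100, load 2, and allowable stress 2 in the customary benchmark units; these constants, the variable names `x1` and `x2`, and the function names are assumptions rather than values quoted from this paper.

```python
import math


def truss_weight(x1, x2, length=100.0):
    """Structural weight (volume) of the three-bar truss: (2*sqrt(2)*x1 + x2) * length."""
    return (2.0 * math.sqrt(2.0) * x1 + x2) * length


def truss_constraints(x1, x2, load=2.0, stress_limit=2.0):
    """Return the three stress constraints [g1, g2, g3], feasible when g_i <= 0."""
    s2 = math.sqrt(2.0)
    denom = s2 * x1 ** 2 + 2.0 * x1 * x2
    return [
        (s2 * x1 + x2) / denom * load - stress_limit,
        x2 / denom * load - stress_limit,
        1.0 / (s2 * x2 + x1) * load - stress_limit,
    ]
```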

Exploring gear train design using HGWPSO

The design of gear systems63 is a well-structured optimization problem in mechanical and industrial engineering, in which the objective is to minimize the transmission ratio cost subject to the performance and efficiency specifications of the gear transmission system. The purpose of this optimization problem is to determine the minimum cost for the appropriate gear transmission ratio, which gives the best transmission efficiency between the input shaft and the output shaft. The transmission ratio affects speed, torque, noise, wear, and so on. To achieve this objective, the values of four integer design variables, g₁, g₂, g₃, g₄, representing the number of teeth on four gears, must be determined. The gear ratio is directly affected by the number of teeth on the gears: a higher number of teeth implies a bigger gear and therefore higher torque, while the opposite is true for a gear with fewer teeth. The objective function is defined as follows.

The gear train design problem utilizing HGWPSO aims to find optimal values for the gear parameters g₁, g₂, g₃, and g₄ that minimize the objective function while adhering to the given constraints. This optimization process can lead to improved performance and efficiency of the gear train system.
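Because the four design variables are integers, a continuous optimizer needs a rounding step before the objective is evaluated. The sketch below assumes the commonly cited form of this benchmark, in which the squared deviation of the gear ratio from the target 1/6.931 is minimized with tooth counts between 12 and 60. The target ratio, the bounds, the assignment of g₁ to g₄ to numerator and denominator, and the helper names are assumptions for illustration.

```python
def to_teeth(values, lo=12, hi=60):
    """Round continuous candidate values to integer tooth counts within [lo, hi]."""
    return [min(hi, max(lo, int(round(v)))) for v in values]


def gear_ratio_error(g1, g2, g3, g4, target=1.0 / 6.931):
    """Squared deviation of the gear ratio (g2 * g3) / (g1 * g4) from the target."""
    return (target - (g3 * g2) / (g1 * g4)) ** 2
```

A candidate produced by the optimizer would be passed through `to_teeth` first, for example `gear_ratio_error(*to_teeth(candidate))`.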

Analyzing cantilever beam design using HGWPSO

In this cantilever beam design problem64, there are five nodes, and each node is represented by a hollow square cross-section of uniform thickness. The variable \(x_{i}\) denotes the cross-sectional breadth of the \(i\)th node and lies in the range [0.01, 100]. The objective of this problem is to reduce the weight of the cantilever beam.

The cantilever beam design problem utilizing HGWPSO focuses on optimizing the dimensions h1, h2, h3, h4, and h5 to minimize the cost associated with material usage while adhering to structural integrity constraints. The optimization process aims to produce a design that is both cost-effective and structurally sound.
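The sketch below assumes the form of this benchmark usually given in the literature: a weight proportional to the sum of the five breadths and a single constraint \(g \le 0\). The five breadths are passed as one sequence, whether they are written \(x_i\) or h1 to h5. The coefficients are the commonly cited ones and are not taken from this paper.

```python
def cantilever_weight(x):
    """Beam weight for the five section breadths x = [x1, ..., x5]."""
    return 0.0624 * sum(x)


def cantilever_constraint(x):
    """Single structural constraint; the design is feasible when the value is <= 0."""
    coeffs = (61.0, 37.0, 19.0, 7.0, 1.0)
    return sum(c / xi ** 3 for c, xi in zip(coeffs, x)) - 1.0
```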

Analyzing welded beam design using HGWPSO

The welded beam design problem65 is an important structural optimization problem in mechanical and structural engineering. The design variables for the welded joint are the bar thickness (b), attachment length (l), bar height (t), and weld height (h). The objective is to minimize the fabrication cost of the welded beam, and the mathematical model is presented as follows.

where

The welded beam design problem using HGWPSO focuses on optimizing the height, length, thickness, and breadth of the beam to minimize material costs while satisfying several engineering constraints related to stress, deflection, and overall strength. The hybrid optimization algorithm is employed to efficiently search for optimal values that meet all design specifications.
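As a partial illustration, the sketch below gives the fabrication cost and two of the response quantities (bending stress and end deflection) in the form usually quoted for this benchmark, using the variable names h, l, t, and b from the text. The load of 6000 lb, the overhang length of 14 in, and Young's modulus of 30e6 psi are the customary benchmark constants and are assumptions here, as are the function names. The shear stress and buckling load constraints are omitted for brevity.

```python
def welded_beam_cost(h, l, t, b):
    """Fabrication cost: weld material plus bar material (commonly cited form)."""
    return 1.10471 * h ** 2 * l + 0.04811 * t * b * (14.0 + l)


def bending_stress(t, b, load=6000.0, length=14.0):
    """Bending stress in the bar, 6 * P * L / (b * t^2)."""
    return 6.0 * load * length / (b * t ** 2)


def end_deflection(t, b, load=6000.0, length=14.0, youngs=30e6):
    """Deflection at the free end, 4 * P * L^3 / (E * t^3 * b)."""
    return 4.0 * load * length ** 3 / (youngs * t ** 3 * b)
```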

Analyzing transmission line parameter problem

Line capacitance is an important parameter of long transmission lines, as it greatly influences voltage variations. This problem aims to determine and compare the line capacitance for various bundle conductor arrangements66,67. The capacitance of an individual conductor can be mathematically represented as:

Assume that

-

\(\epsilon\) is the permittivity of the medium between the conductors, and D is the spacing.

-

\(R_{eq}\) is the equivalent radius of the bundle, which can be calculated from the geometry of the conductors in the bundle, \(r\) is the conductor radius, and \(N_{c}\) is the number of conductors in the bundle

-

\(d_{{min}},\;d_{{max}} ,\;r_{{min}} ,r_{{max}} ,\;\epsilon _{{min}} \;{\text{and}}\;\epsilon _{{max}}\) are the lower and upper limits

$$C = \frac{2\pi \epsilon}{\ln\left( \frac{D}{R_{eq}} \right)} \qquad (30)$$

The equivalent radius of different bundle conductors (two, three, and four) can be expressed as
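For reference, the sketch below evaluates Eq. (30) together with the textbook equivalent-radius expressions for symmetric bundles: \(\sqrt{r\,d}\) for two conductors, \(\sqrt[3]{r\,d^{2}}\) for three, and \(1.091\sqrt[4]{r\,d^{3}}\) for four. Here `d` denotes the spacing between sub-conductors within a bundle, which is an assumed symbol, and the function names are hypothetical. These are the standard power-system textbook forms and may differ in notation from the expressions of this paper.

```python
import math


def equivalent_radius(r, d, n_c):
    """Equivalent radius of a symmetric bundle for capacitance calculations.

    r   : radius of one sub-conductor
    d   : spacing between adjacent sub-conductors (assumed symbol)
    n_c : number of conductors in the bundle (2, 3 or 4)
    """
    if n_c == 2:
        return math.sqrt(r * d)
    if n_c == 3:
        return (r * d ** 2) ** (1.0 / 3.0)
    if n_c == 4:
        return 1.091 * (r * d ** 3) ** 0.25
    raise ValueError("only bundles of 2, 3 or 4 conductors are covered")


def line_capacitance(eps, D, r_eq):
    """Capacitance per unit length from Eq. (30): 2*pi*eps / ln(D / R_eq)."""
    return 2.0 * math.pi * eps / math.log(D / r_eq)
```

With r and d fixed, adding conductors to the bundle increases the equivalent radius, which lowers \(\ln(D/R_{eq})\) and therefore raises the capacitance.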

Analyzing reactive power planning problem

The rapid growth of the power sector has significantly increased the electricity demand, pushing transmission lines to operate near or even beyond their thermal limits. This situation necessitates efficient reactive power planning (RPP) to reduce transmission congestion, minimize active power losses, and maintain the voltage profile within prescribed limits. Effective RPP plays a crucial role in enhancing the overall stability, reliability, and efficiency of the power network. The primary objective of this study is to minimize total transmission losses, which is a key factor in optimizing power system performance. Transmission losses lead to inefficient power delivery and increased operational costs68. Therefore, their reduction directly contributes to improved economic and technical performance of the grid. The total transmission loss is mathematically represented by Eq. (34).

where

-

\(G_{mn}\) is the conductance of the transmission line.

-

\(V_{m}\) and \(V_{n}\) are the voltage magnitudes at buses \(m\) and \(n\).

-

\(\delta_{m}\) and \(\delta_{n}\) are the voltage phase angles at buses \(m\) and \(n\).
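The loss objective can be sketched in Python as follows. The sketch assumes the commonly used form \(P_{loss} = \sum G_{mn} \left[ V_{m}^{2} + V_{n}^{2} - 2V_{m} V_{n} \cos (\delta_{m} - \delta_{n}) \right]\) summed over all lines, which is consistent with the symbols defined above but is not copied from Eq. (34); the function name and the data layout are hypothetical.

```python
import math


def transmission_loss(lines, V, delta):
    """Total active power loss over all lines (assumed standard form).

    lines : iterable of (m, n, G_mn) tuples, one per transmission line
    V     : voltage magnitude at each bus (per unit)
    delta : voltage phase angle at each bus (radians)
    """
    total = 0.0
    for m, n, g_mn in lines:
        total += g_mn * (
            V[m] ** 2
            + V[n] ** 2
            - 2.0 * V[m] * V[n] * math.cos(delta[m] - delta[n])
        )
    return total
```

With identical voltage magnitudes and angles at both ends of every line, the expression evaluates to zero, which is a quick sanity check for an implementation.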

In this research work, the load flow equations for real and reactive power are selected as equality constraints, represented by Eq. (35) and Eq. (36), while the inequality constraints are given by Eq. (37). It is essential to ensure that transmission loss minimization complies with all equality and inequality constraints69.

where

-

\(P_{Gm}\) and \(P_{Dm}\) are the real power generated and real power demand at bus \(m\)

-

\(V_{m}\) and \(V_{n}\) are the voltage magnitudes at buses \(m\) and \(n\)

-

\(\delta_{mn}\) is the phase angle difference between buses \(m\) and \(n\)

-

\(B_{mn}\) is the susceptance element and \(N_{b}\) is the total number of buses

$$Q_{Gm} - Q_{Dm} - V_{m} \sum\limits_{n = 1}^{N_{b}} V_{n} \left[ G_{mn} \cos \left( \delta_{mn} \right) + B_{mn} \sin \left( \delta_{mn} \right) \right] = 0$$(36)

$$\left. \begin{array}{c} V_{gm}^{\min } \le V_{g} \le V_{gm}^{\max } \\ Q_{Gm}^{\min } \le Q_{G} \le Q_{Gm}^{\max } \\ Q_{Cm}^{\min } \le Q_{C} \le Q_{Cm}^{\max } \\ T_{m}^{\min } \le T_{m} \le T_{m}^{\max } \end{array} \right\}$$(37)

where

-

\(Q_{Gm}\) and \(Q_{Dm}\) are the reactive power generated and the reactive power demand at bus \(m\).

-

\(T_{m}^{\min }\) is the minimum value of the transformer tap position.

-

\(T_{m}^{\max }\) is the maximum value of the transformer tap position.

Proposed method

The GWO algorithm is inspired by the social hierarchy and hunting behaviors of grey wolves6 and is designed to handle optimization problems. In GWO, wolves are assigned roles such as α, β, and δ, which shape their decision-making during the search for optimal solutions. This algorithm utilizes a population of potential solutions that iteratively refines itself through strategies that mimic wolf hunting behaviors, combining exploration and exploitation. The following mathematical models show the interactions between wolves and their prey, guiding the algorithm toward optimal or near-optimal solutions.

where

-

\(\vec{Y}\) shows the position of the wolf, and t is the iteration number

-

The vector \(\overrightarrow {{Y_{{\text{p}}} }}\) indicates the position of the prey,

-

\(\vec{T}\) is the distance, \(a\) is the control parameter, \(\vec{S}\) and \(\vec{B}\) are the coefficients
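The position update described by these symbols can be sketched in Python as follows. The sketch assumes the standard GWO formulation, in which \(\vec{S} = 2a\,r_{1} - a\), \(\vec{B} = 2r_{2}\), \(\vec{T} = |\vec{B}\,\vec{Y}_{p} - \vec{Y}|\), and the new position is the average of the three positions suggested by the α, β, and δ wolves. The mapping of S and B onto these roles and the function name are assumptions, not taken from the equations of this study.

```python
import random


def gwo_step(wolves, leaders, a, rng=random):
    """One GWO position update for every wolf (assumed standard form).

    wolves  : list of positions, each a list of floats
    leaders : positions of the alpha, beta, and delta wolves
    a       : control parameter, usually decreased from 2 to 0 over the run
    """
    new_positions = []
    for y in wolves:
        new_y = []
        for j, y_j in enumerate(y):
            guided = 0.0
            for leader in leaders:
                s = 2.0 * a * rng.random() - a  # coefficient S (assumed form)
                b = 2.0 * rng.random()          # coefficient B (assumed form)
                t = abs(b * leader[j] - y_j)    # distance T to this leader
                guided += leader[j] - s * t
            new_y.append(guided / len(leaders))  # average over the leaders
        new_positions.append(new_y)
    return new_positions
```

When a reaches zero, S vanishes and each wolf moves to the mean of the leader positions, which corresponds to the pure exploitation end of the search.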

The flowchart for the GWO is presented in Fig. 2. In addition, the pseudocode in Algorithm 1 shows the initialization of parameters, including the number of wolves and maximum iterations.

Flowchart for GWO.

Pseudo-code for the GWO

PSO is inspired by the social behavior of flocking birds and schooling fish7. It uses a swarm of particles, each representing a potential solution, to explore the search space. By iteratively updating positions and velocities based on individual and collective best-known positions, PSO balances exploration and exploitation. Its simplicity, ease of implementation, and minimal parameter tuning make it suitable for solving complex optimization problems.

where

-

\(g_{i}^{t}\) represents the velocity and \(x_{i}^{t}\) is the position

-

\(pbest_{i}^{t}\) indicates the personal best solution and \(gbest_{i}^{t}\) is the global best solution.

-

\(m_{1}\) and \(m_{2}\) are random vectors within the range [0, 1], \(k_{1}\) and \(k_{2}\) are constant values, and ω is the inertia weight
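A minimal Python sketch of the velocity and position update for a single particle is given below. It assumes the standard PSO form \(g_{i}^{t + 1} = \omega g_{i}^{t} + k_{1} m_{1} (pbest_{i}^{t} - x_{i}^{t}) + k_{2} m_{2} (gbest^{t} - x_{i}^{t})\) followed by \(x_{i}^{t + 1} = x_{i}^{t} + g_{i}^{t + 1}\); the default values of `k1` and `k2` and the function name are illustrative assumptions.

```python
import random


def pso_step(x, g, pbest, gbest, omega, k1=2.0, k2=2.0, rng=random):
    """Update one particle (assumed standard PSO form).

    x, g   : current position and velocity of the particle
    pbest  : best position found so far by this particle
    gbest  : best position found so far by the swarm
    omega  : inertia weight
    k1, k2 : acceleration constants (default values are assumptions)
    """
    new_x, new_g = [], []
    for j in range(len(x)):
        m1, m2 = rng.random(), rng.random()  # random factors in [0, 1)
        v = (
            omega * g[j]
            + k1 * m1 * (pbest[j] - x[j])
            + k2 * m2 * (gbest[j] - x[j])
        )
        new_g.append(v)
        new_x.append(x[j] + v)
    return new_x, new_g
```

A particle that already sits on both its personal best and the global best is moved only by its inertia term, which is why the inertia weight controls how long the swarm keeps exploring.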

The flowchart for PSO is shown in Fig. 3, while the pseudocode in Algorithm 2 shows the initialization of parameters, including the number of generations and the number of particle swarms.

Flowchart for PSO.

Pseudo-code for the PSO

In GWO, the parameters V and B are updated using random values r1 and r2, whereas in PSO the inertia weight ω is decreased linearly as \(\omega = \omega_{\max } - \frac{t}{T}\left( {\omega_{\max } - \omega_{\min } } \right)\). GWO and PSO are widely used optimization algorithms. GWO, inspired by the social hierarchy and hunting strategies of grey wolves, is strong in exploration but often struggles with effective exploitation, which can lead to premature convergence on local optima. PSO, which mimics the social behavior of flocking birds, is strong in exploitation but can suffer from a loss of diversity and premature convergence. To address these limitations, a novel hybrid algorithm is proposed70. This hybrid algorithm combines the exploration capabilities of GWO with the exploitation strengths of PSO, resulting in improved performance across various optimization problems. HGWPSO aims to enhance the balance between exploration and exploitation, improve convergence rates, and achieve superior solution quality. By leveraging the strengths of both algorithms, HGWPSO offers a promising solution to the challenges faced by GWO and PSO, making it a better algorithm for complex optimization problems.
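The linear inertia weight schedule can be written as a short Python helper. The default bounds of 0.9 and 0.4 are common choices in the PSO literature and are assumptions here, as is the function name.

```python
def inertia_weight(t, t_max, w_max=0.9, w_min=0.4):
    """Linearly decreasing inertia weight: w_max - (t / t_max) * (w_max - w_min)."""
    if not 0 <= t <= t_max:
        raise ValueError("t must lie between 0 and t_max")
    return w_max - (t / t_max) * (w_max - w_min)
```

The weight starts at `w_max` for t = 0, which favors exploration, and reaches `w_min` at t = T, which favors exploitation.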

The following velocity and update equations are obtained after hybridization.

According to Fig. 4, the HGWPSO algorithm begins by initializing the parameters for GWO and PSO, including the number of agents and key coefficients. Each agent is evaluated on the objective function, followed by an iterative process that updates positions and velocities until convergence. The algorithm ultimately outputs the best solution found, representing the optimal parameters for the given optimization problem. The pseudocode in Algorithm 3 shows the initialization of parameters, including the number of agents and the maximum number of iterations, and establishes the key parameters a, B, ω, and Y. The algorithm evaluates each agent’s performance using the established equations and iteratively updates positions and velocities until the maximum iteration count is reached. The flowchart for HGWPSO is presented in Fig. 5.

Design process steps.

Flowchart for HGWPSO.

Pseudo-code for the proposed algorithm

Moreover, the computational complexity of HGWPSO is analyzed in terms of its fundamental operations per iteration. The total cost depends on the number of function evaluations and on the operations required for updating positions and velocities. Assume that N is the population size, D is the number of dimensions, T is the number of iterations, and f(x) is the cost of one objective function evaluation. During each iteration, HGWPSO performs three computational steps. First, in the GWO phase, the search is guided by the three leading wolves (α, β, δ), and updating the positions of all agents takes O(N × D) operations. Second, the PSO phase updates the position and velocity of all particles, which takes another O(N × D) operations. Third, every candidate solution in the population is assessed by the objective function, which requires O(N × f(x)) operations. Since function evaluation is typically the most time-consuming step, its cost depends largely on the nature of the problem being solved. Combining these operations, the total computational complexity per iteration is O(N × D + N × f(x)). For T iterations, the overall complexity of HGWPSO is O(T × (N × D + N × f(x))), which reduces to O(T × N × D) when a single function evaluation costs O(D).

In constrained optimization scenarios, the dynamic penalty function offers an adaptive method of regulating the trade-off between exploration and exploitation. The adaptive change of the penalty factor λ, as mentioned in Eq. (4), ensures that the optimization process can explore infeasible areas at the beginning and then increasingly emphasize feasible solutions as the search progresses.
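The idea of a penalty factor that grows during the search can be illustrated with the Python sketch below. Eq. (4) is not reproduced in this section, so the geometric schedule for λ, the squared-violation penalty, and all names and default values are hypothetical choices that only show the general mechanism.

```python
def penalty_factor(t, t_max, lam_min=1.0, lam_max=1e6):
    """Hypothetical schedule: lambda grows geometrically from lam_min to lam_max."""
    return lam_min * (lam_max / lam_min) ** (t / t_max)


def penalized_cost(f, constraints, x, t, t_max):
    """Objective plus lambda(t) times the squared violation of each g_i(x) <= 0."""
    violation = sum(max(0.0, g(x)) ** 2 for g in constraints)
    return f(x) + penalty_factor(t, t_max) * violation


# Illustrative problem: minimize x^2 subject to x >= 1, written as 1 - x <= 0.
def objective(x):
    return x[0] ** 2


def lower_bound(x):
    return 1.0 - x[0]


early = penalized_cost(objective, [lower_bound], [0.5], t=0, t_max=500)
late = penalized_cost(objective, [lower_bound], [0.5], t=500, t_max=500)
```

In this sketch the same infeasible point is penalized only mildly at the first iteration and heavily at the last one, while feasible points always keep their original objective value.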

Experimental result and analysis

In this section, the performance of the proposed algorithm is verified on the CEC_2022 benchmark functions, and HGWPSO is then applied to engineering design problems. Table 1 summarizes the 12 CEC_2022 benchmark functions, including their ranges and optimal values.

The performance of HGWPSO is compared against different well-known population-based optimization algorithms. These algorithms are widely recognized for their effectiveness in optimization problems. Each algorithm is set with the same population size and maximum number of iterations, and the main control parameters follow the original algorithm specifications, as presented in Table 2. The experiments are conducted on a 7th Gen Intel(R) Core(TM) i7-1125G4 @ 2.5 GHz processor with 12.00 GB of RAM, running Windows 10, using the MATLAB R2019a platform.

Validation of HGWPSO with CEC_2022 benchmark functions

This section analyzes CEC_2022 test functions to further assess the performance of the HGWPSO algorithm. The CEC_2022 functions simulate complex global optimization problems, featuring 12 test functions divided into unimodal, basic, hybrid, and composite types. Table 3 provides a comprehensive analysis, including metrics such as best, worst, mean, SD, rank, and computational performance over 30 independent runs. The results highlight the superior performance of HGWPSO across these functions. It demonstrates strong exploration and exploitation capabilities, effectively addressing various optimization challenges. Compared to other algorithms, HGWPSO shows enhanced optimization accuracy, solution stability, and adaptability, leading to more competitive outcomes. According to Table 3, HGWPSO ranked 1st across most CEC_2022 functions, with HGWOSCA and HGWOWOA securing 2nd and 3rd positions in F2_CEC_2022 functions, followed by WOA, MFO, SCA, ALO, COA, and GJO. The SD values for HGWPSO remained within acceptable limits, indicating greater stability and a more focused search approach compared to GWO and PSO. Additionally, Table 3 also shows the computational times for HMFOPSO, HPSOALO, HGWOWOA, HGWOSCA, WOA, MFO, SCA, ALO, COA, and GJO. Particularly, GWO had higher computational times, while HGWPSO achieved lower times compared to both GWO and PSO, demonstrating its ability to reduce computational overhead and improve efficiency. This is attributed to its balanced exploration and exploitation, combining GWO’s diverse search capabilities with PSO’s efficiency, which enhances convergence speed and solution quality. As a hybrid algorithm, HGWPSO integrates GWO’s social hierarchy with PSO’s social learning, resulting in improved performance across various optimization problems. The obtained results confirm that HGWPSO is the most effective optimizer for functions such as F1_CEC_2022, F5_CEC_2022, F10_CEC_2022, and F12_CEC_2022, consistently outperforming other algorithms.

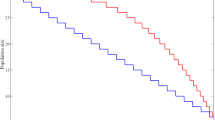

To evaluate the convergence behavior of HGWPSO, convergence curves are plotted over a maximum of 500 iterations on the CEC_2022 benchmark functions, as shown in Fig. 6a. All 12 functions from the CEC_2022 benchmark are selected: F1_CEC_2022 is a unimodal function; F2_CEC_2022, F3_CEC_2022, F4_CEC_2022, and F5_CEC_2022 are basic functions; F6_CEC_2022, F7_CEC_2022, and F8_CEC_2022 are hybrid functions; and F9_CEC_2022, F10_CEC_2022, F11_CEC_2022, and F12_CEC_2022 are composite functions. The horizontal axis represents the number of iterations, and the vertical axis shows the average difference between the algorithm’s best value and the theoretical optimal value. According to Fig. 6a, HGWPSO demonstrates better convergence than HGWOMFO, HPSOALO, HGWOWOA, HGWOSCA, GWO, PSO, MFO, WOA, SCA, ALO, COA, and GJO. While its performance is comparable to that of the other algorithms in the initial iterations, it accelerates as the iterations progress, especially on F1_CEC_2022, F5_CEC_2022, and F10_CEC_2022. At 500 iterations, HGWPSO maintains the fastest convergence rate, showing the importance of combining improved GWO and PSO. Additionally, HGWPSO consistently achieves more accurate solutions across the CEC_2022 functions, confirming its effectiveness as a hybrid approach. HGWPSO reduces the risk of getting trapped in local minima by initially focusing on broad exploration and gradually shifting towards exploitation, so that search diversity is reduced only in the later stages. This enhances convergence speed towards optimal solutions while balancing exploration and exploitation throughout the optimization process. Figure 6a further demonstrates HGWPSO’s strong performance in both early global search and later local search, maintaining stability across the process for all CEC_2022 functions.

To better demonstrate performance and stability, a box plot analysis is conducted. These box plots summarize key data points, including the maximum, upper quartile, median, lower quartile, minimum, and outliers. Figure 6b shows the box plots for each algorithm across all CEC_2022 functions. In these plots, “+” represents outliers, “−” marks the median, and the box edges denote the upper and lower quartiles, with additional indicators for the maximum and minimum values. Fig. 6b confirms that the height of HGWPSO’s boxes is generally smaller than that of the other algorithms, suggesting better stability. This indicates that HGWPSO provides robust optimization performance and stability across different test functions.

Convergence behavior and box plot representation of algorithm performance.

Figure 7 shows the rankings of the optimization methods across the 12 benchmark functions (F1_CEC_2022 to F12_CEC_2022), highlighting their comparative efficiency in addressing optimization challenges. The proposed technique consistently obtained the top place across all functions, which demonstrates its ability to handle complex problems efficiently. Other competitive algorithms, such as HGWOWOA, HGWOSCA, and HPSOALO, obtained average ranks in a few functions. Traditional algorithms such as WOA, SCA, and ALO also obtained average ranks in a few functions but are not as reliable across the entire set of problems. WOA and ALO perform better on certain problems but remain below HGWPSO. PSO and GWO are two basic optimization algorithms, yet they lag behind the hybrid approach on some complicated problems. MFO and COA show generally average performance: MFO performed worse in most functions and would require some enhancement of its search mechanism, while COA performs well in a few cases but has difficulty matching the more dynamic behavior of hybrid models, which places it near the bottom of the ranking. This comprehensive analysis confirms that the proposed method performs better than traditional algorithms in terms of convergence speed and solution quality. In addition, Table 4 compares the rankings of the algorithms across the CEC_2022 functions. The rankings represent how efficient an algorithm is, with lower values indicating better performance. The proposed method achieved a rank near 1 across all the test functions, which clearly shows that it is better than algorithms such as HMFOPSO, HPSOALO, HGWOWOA, and GWO.

The chi-squared statistic values for each test function are all greater than the critical value of 21.026, which corresponds to 12 degrees of freedom at the 0.05 significance level. In addition, the p-values for all test cases fall below 0.05; hence, the observed ranking differences are statistically significant and unlikely to be due to chance. The obtained results show that HGWPSO substantially outperforms the other optimization algorithms, whereas algorithms such as GJO, COA, and MFO generally rank low, indicating less competitive performance.
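The test that produces these chi-squared values is not named in the text. Assuming it is a Friedman-type rank test over the 13 compared algorithms (which gives 12 degrees of freedom), the statistic can be computed from per-run results as in the Python sketch below; the function names and the data layout are hypothetical.

```python
def average_ranks(row):
    """Rank the values in one row (1 = smallest), averaging the ranks of ties."""
    order = sorted(range(len(row)), key=lambda j: row[j])
    ranks = [0.0] * len(row)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and row[order[j + 1]] == row[order[i]]:
            j += 1
        shared = (i + j) / 2.0 + 1.0  # mean of positions i..j, 1-based
        for pos in range(i, j + 1):
            ranks[order[pos]] = shared
        i = j + 1
    return ranks


def friedman_statistic(results):
    """Friedman chi-squared statistic without tie correction.

    results[i][j] is the value reached by algorithm j in run i (lower is better).
    """
    n, k = len(results), len(results[0])
    rank_sums = [0.0] * k
    for row in results:
        for j, rank in enumerate(average_ranks(row)):
            rank_sums[j] += rank
    total = sum(s * s for s in rank_sums)
    return 12.0 / (n * k * (k + 1)) * total - 3.0 * n * (k + 1)
```

Under this assumption, a statistic above 21.026 for 13 algorithms rejects the hypothesis that all algorithms rank equally at the 0.05 level.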

Ranking of algorithm for CEC_2022 benchmark functions.

Application of HGWPSO in complex engineering problem

Case 01: Optimizing pressure vessel design using HGWPSO

Table 5 presents a comparative analysis of HGWPSO against HMFOPSO, HPSOALO, HGWOWOA, HGWOSCA, PSO, GWO, MFO, WOA, SCA, ALO, COA, and GJO for the pressure vessel design problem. The results show that HGWPSO consistently outperforms the other algorithms, achieving the best values for the cost function of the pressure vessel design problem. Additionally, HGWPSO provides lower mean and SD values, demonstrating its stability and robustness. Although GJO also performs well, particularly for certain parameters of the pressure vessel design problem, such as the height of the bar, HGWPSO remains the top-performing algorithm overall. ALO, in contrast, shows higher values for the cost function, indicating less optimal performance. Figure 8a illustrates the comparative analysis of HGWPSO and the compared algorithms over 500 iterations. Initially, HGWPSO exhibits some variability in the early iterations due to sensitivity to initialization. However, it quickly stabilizes, maintaining lower fitness values throughout. This behavior can be attributed to the combination of GWO’s exploration capabilities and PSO’s rapid convergence, which creates a balanced approach that effectively navigates the solution space. This synergy improves performance, especially in complex optimization scenarios. Additionally, the convergence curves for MFO, WOA, SCA, ALO, COA, and GJO also show minimum cost function values. An in-depth analysis of the best solutions is further visualized through box plots in Fig. 8b. The horizontal axis represents the algorithms being compared, while the vertical axis shows the best cost function values achieved by each. The results confirm that HGWPSO has a better capability to solve the pressure vessel design problem.

Convergence behavior and box plot representation of algorithm performance.

Case 02: evaluating string/compression spring design using HGWPSO

Table 6 presents the optimal performance results for minimizing the spring weight in the string/compression spring problem. An analysis of the mean, SD, and median confirms that HGWPSO achieves lower values for these statistical measures, indicating superior performance. In contrast, the best values obtained by MFO and COA are relatively high, suggesting suboptimal results. This is due to the tendency of their search agents to get trapped in local optima, which reduces the exploitation efficiency of the algorithms. On the other hand, the statistical measures for HMFOPSO, HPSOALO, HGWOWOA, HGWOSCA, GWO, PSO, WOA, SCA, ALO, and GJO show lower values, reflecting strong intensification capabilities. Additionally, GJO provides competitive optimal values for several design variables. HGWPSO, in particular, stands out due to its effective combination of GWO’s exploration abilities and PSO’s rapid convergence, leading to stable, high-quality solutions. This combination allows HGWPSO to navigate complex search spaces without becoming stuck in local minima while efficiently exploiting promising regions to reach the global minimum. Furthermore, PSO’s behavior quickly guides particles away from local minima, helping HGWPSO achieve better parameter values than the other algorithms. Figure 9a shows the minimum cost function values for the string/compression spring problem, in which the convergence graph demonstrates the efficient performance of HMFOPSO, HPSOALO, HGWOWOA, HGWOSCA, PSO, SCA, and GJO. However, GWO, MFO, WOA, ALO, and COA show higher cost function values, indicating less optimal performance. Overall, HGWPSO demonstrates superior performance in solving the string/compression spring problem. The robustness of HGWPSO is further analyzed by the box plots in Fig. 9b, where the algorithm exhibits lower and more compact boxes, reflecting consistent and reliable results.

Convergence behavior and box plot representation of algorithm performance.

Case 03: examining three-bar truss structure design using HGWPSO

Figure 10a shows the minimum cost function values for the three-bar truss problem. The convergence graph highlights the effective performance of HGWPSO, along with HMFOPSO, HPSOALO, HGWOWOA, HGWOSCA, PSO, SCA, and GJO, while GWO, MFO, WOA, ALO, and COA show higher cost function values, suggesting less efficient optimization. Overall, HGWPSO demonstrates a clear advantage in solving the three-bar truss problem, consistently delivering more accurate solutions than the other algorithms. The robustness of HGWPSO in managing constrained optimization tasks is further supported by the box plots in Fig. 10b, which show lower and more compact distributions, indicating stability and reliability in its performance. The optimal value for the minimum cost function is presented in Table 7.

Convergence behavior and box plot representation of algorithm performance.

Case 04: exploring gear train design using HGWPSO

Table 8 demonstrates the analysis of the gear train design problem, which focuses on minimizing the gear ratio across four parameters: the number of teeth on the gears (g₁, g₂, g₃, g₄). These parameters are discrete, and the constraints are limited to the permissible ranges for the variables. The obtained results using HGWPSO, as shown in Table 8, indicate that this algorithm outperforms other methods in optimizing the gear train design problem. These findings demonstrate HGWPSO’s ability to effectively solve problems with discrete parameters, delivering highly competitive results. Figure 11a shows the convergence curve of HGWPSO over 500 iterations, comparing its performance with HMFOPSO, HPSOALO, HGWOWOA, HGWOSCA, PSO, GWO, MFO, WOA, SCA, ALO, COA, and GJO. To further validate the effectiveness of HGWPSO, Fig. 11b presents box plots, where the horizontal axis represents the algorithms, and the vertical axis shows the best cost function values achieved. The results confirm that HGWPSO has a superior capability in solving the gear train design problem.

Convergence behavior and box plot representation of algorithm performance.

Case 05: analyzing cantilever beam design using HGWPSO

Table 9 presents the optimization results obtained using HGWPSO and the other competitive algorithms. A comparison of these results demonstrates that HGWPSO performs better in addressing the cantilever beam design problem. The optimized outcomes show not only an improvement in mean values but also a lower SD, highlighting the consistency and reliability of the results. Additionally, HGWPSO shows better results across both the best and worst performance metrics. Furthermore, Figs. 12a and 12b give a visual representation of these results, confirming the advantages of HGWPSO and providing strong evidence for its effectiveness in optimizing cantilever beam design within practical engineering problems.

Convergence behavior and box plot representation of algorithm performance.

Case 06: analyzing welded beam design using HGWPSO

Table 10 presents a comparative analysis of various intelligent techniques for the welded beam design problem. The results indicate that HGWPSO achieves the best cost function values, as well as the lowest mean and SD compared to the other algorithms. The performance of HGWPSO, particularly over HMFOPSO, HPSOALO, HGWOWOA, HGWOSCA, PSO, GWO, MFO, WOA, SCA, ALO, COA, and GJO, can be attributed to the effective combination of GWO’s exploration and PSO’s exploitation. This hybridization enables a thorough search of the solution space, preventing the algorithm from becoming trapped in local minima and thereby enhancing exploration capabilities. Among the compared algorithms, HGWPSO consistently provides optimal results for the cost function in the welded beam design problem, as evidenced by its low average and SD values. Additionally, Fig. 13a shows the best values obtained in 500 iterations. The convergence curves for HGWOSCA, SCA, ALO, COA, and GJO are positioned at the lower part of the graph, indicating minimal cost function values across consecutive runs. However, the convergence curves for MFO and WOA appear in the middle, demonstrating higher cost function values and less effectiveness in addressing the problem’s complexity compared to HGWPSO. Moreover, the box plots in Fig. 13b show that HGWPSO achieves lower minimum and maximum cost function values for the welded beam design problem.

Convergence behavior and box plot representation of algorithm performance.

Case 07: analyzing transmission line parameter problem

The comparative analysis of the transmission line parameter problem considering two, three, and four bundle conductors is presented in Table 11. The obtained results show that HGWPSO achieves the best values, as well as the lowest mean, compared to the other algorithms. The performance of HGWPSO is compared with competitive algorithms such as HMFOPSO, HPSOALO, HGWOWOA, HGWOSCA, PSO, GWO, MFO, WOA, SCA, ALO, COA, and GJO. The hybridization of GWO and PSO enables a thorough search of the solution space, preventing the algorithm from becoming trapped in local minima and thereby enhancing exploration capabilities. Compared to the other algorithms, HGWPSO achieved better optimal results for the transmission line parameter problem, as demonstrated by its low average and SD values. Additionally, Figs. 14a, c, e show the convergence curves for the different bundle conductors. Moreover, the box plots in Figs. 14b, d, f show that HGWPSO achieved lower minimum and maximum cost function values than the other algorithms. While the other algorithms exhibit best values that are relatively close to each other, the box plots further highlight the range of minimum and maximum values, emphasizing HGWPSO’s robust and consistent performance.

Convergence behavior and box plot representation of algorithm performance.

Case 08: analyzing reactive power planning problem

Table 12 presents a comparative analysis of the reactive power planning problem in the IEEE 30-bus system. The results indicate that HGWPSO achieves the best values, along with the lowest mean, compared to other algorithms. The performance of HGWPSO is evaluated against competitive algorithms such as HMFOPSO, HPSOALO, HGWOWOA, HGWOSCA, PSO, GWO, MFO, WOA, SCA, ALO, COA, and GJO. The hybridization of GWO and PSO facilitates a comprehensive search of the solution space, preventing the algorithm from getting trapped in local minima and thereby improving its exploration capabilities. Compared to other algorithms, HGWPSO yields superior optimal results for the reactive power planning problem, as demonstrated by its lower mean and SD values. Additionally, Fig. 15a illustrates the convergence curves of active power loss. Furthermore, Fig. 15b presents box plots, where the horizontal axis represents the algorithms, and the vertical axis indicates the active power loss achieved. While the best values obtained by other algorithms are relatively close to each other, the box plots further emphasize the range of minimum and maximum values, highlighting HGWPSO’s robust and consistent performance.

Convergence behavior and box plot representation of algorithm performance.

According to Table 5, HGWPSO achieves the best performance with a value of 5.9864E+03, outperforming all other methods. The best value for HGWOWOA is 7.8948E+03, and for GJO it is 6.2578E+03, while GWO and PSO obtained higher values of 1.0115E+05 and 3.4289E+04, respectively. Other algorithms, such as HMFOPSO (1.8084E+04), HPSOALO (1.6454E+04), and MFO (1.7667E+04), have average results but are less efficient than HGWPSO. Table 6 reports a best value of 0.0132 for HGWPSO, which is lower than those of the others, including GJO (0.0134) and HGWOSCA (0.0145), while algorithms such as HMFOPSO (1.6915E+09) and PSO (9.9971E+08) have much higher values, which means they are less optimal. Table 7 shows that HGWPSO has the best value of 263.9044 compared to others, such as GWO at 272.9803 and HPSOALO at 264.8705. HMFOPSO and PSO obtained values of 1602.1627 and 1555.8422, respectively, which means less optimal performance. Table 8 shows that HGWPSO performs the best, with a best value of 1.0847E-10 and a worst value of 0.73226, which means excellent optimization with minimal variation compared to HMFOPSO (best: 0.049028, worst: 8.2495) and PSO (best: 0.026329, worst: 3.1529), whose worst values are much higher, meaning less stability and efficiency. Table 9 shows that HGWPSO performs well, with a best value of 1.3425, a worst value of 5.1042, and a mean of 2.598, indicating a good balance between performance and stability. HMFOPSO shows a significantly higher worst value (1.3936E+10) and mean (4.6452E+09), reflecting less stable and consistent performance. Table 10 shows that HGWPSO achieves the best value of 1.5344, whereas HPSOALO obtained 2.5746 and HGWOWOA obtained 1.8747. Additionally, as observed from Table 11, HGWPSO achieved the best values across the different bundle configurations. Specifically, it obtained an optimal value of 17.6182 for two-bundle conductors, 9.5877 for three-bundle conductors, and 3.5102 for four-bundle conductors. Finally, according to Table 12, the proposed method outperforms the others by achieving the best optimal value.

Performance evaluation of HGWPSO

In this section, the efficiency of HGWPSO is evaluated through extensive verification on two problem domains: the CEC_2022 benchmark functions and real-world engineering optimization problems. The main objective is to analyze the algorithm’s ability to balance exploration and exploitation with better accuracy, stability, and computational efficiency than other optimization algorithms. The obtained results confirm the better performance of HGWPSO in terms of convergence rate and solution quality.

Comparative performance analysis of HGWPSO on CEC_2022 benchmark functions

To verify the efficiency of HGWPSO, the proposed method is first applied to the CEC_2022 benchmark functions, which include unimodal, basic, hybrid, and composite functions. The fundamental performance indicators, such as best, worst, mean, SD, and rank, along with the computational time and convergence behavior, are included. The obtained results show that HGWPSO performs better than other optimization algorithms such as HGWOSCA, HGWOWOA, GWO, PSO, MFO, WOA, SCA, ALO, COA, and GJO. In the majority of the functions, HGWPSO obtained the best mean and lowest SD, demonstrating better accuracy and consistency over multiple independent runs. Moreover, HGWPSO demonstrates the best convergence rates, suggesting its efficiency in finding optimal solutions. While traditional methods such as GWO and PSO suffered from poor convergence and high computational cost, HGWPSO balanced exploration and exploitation effectively. The convergence curves show that HGWPSO consistently surpasses the other methods across 500 iterations.

HGWPSO demonstrates better performance in optimizing the CEC_2022 benchmark functions. For example, in F1_CEC_2022, HGWPSO converges rapidly and minimizes the objective function more successfully than the other algorithms. This indicates its strong exploitation capability, which allows it to converge rapidly within a promising region of the search space. In F6_CEC_2022, HGWPSO maintains its global search ability, balancing exploration and exploitation efficiently. Similarly, in other benchmark functions, HGWPSO demonstrates superior adaptability under dynamic environments in which function characteristics change significantly. It performs well in F9_CEC_2022 and F10_CEC_2022, in which solution quality and search efficiency are very important. Additionally, in F12_CEC_2022, where local and global search must be well balanced, it provides better solution quality. To further confirm the reliability of HGWPSO, a box plot analysis is conducted, in which shorter boxes indicate higher robustness and lower variability in performance. The obtained results show that HGWPSO is a reliable and better optimizer for handling complex problems, with better stability, computational efficiency, and optimization accuracy.

Comparative performance analysis of HGWPSO on complex engineering problems

In addition to benchmark verification, HGWPSO is further validated on complex engineering problems. The objective of these problems is to minimize cost subject to different design constraints. Their complexity comes from nonlinear constraints and mixed design variables, which make optimization challenging. The results demonstrate that HGWPSO achieves cost-effective, high-quality solutions, outperforming HMFOPSO, HPSOALO, HGWOWOA, HGWOSCA, PSO, GWO, MFO, WOA, SCA, ALO, COA, and GJO. The proposed algorithm efficiently optimizes various engineering design problems while ensuring constraint satisfaction. In pressure vessel design, HGWPSO optimizes thickness, volume, and cost while meeting the stress, material strength, and internal pressure constraints, yielding minimum overall weight and cost. In compression spring design, HGWPSO handles constraints on deflection limits, material strength, and maximum stress to make the design cost-effective and efficient. Similarly, in the three-bar truss design problem, the algorithm optimizes the geometry and material distribution for minimum weight subject to constraints on stress, displacement, and stability, so that the structure can sustain the intended loads. Furthermore, in gear train design, HGWPSO optimizes gear ratios subject to constraints on torque transmission. In cantilever beam design, the algorithm minimizes material usage while meeting deflection limits under the applied loads. In welded beam design, HGWPSO optimizes the beam dimensions and weld sizes under the condition that the design satisfies the shear stress and bending stress constraints. When applied to transmission line parameter estimation, HGWPSO accurately estimates inductance and capacitance, which is crucial for power system efficiency. In reactive power planning, the algorithm is better than the others in terms of solution quality. HGWPSO's convergence curve shows its efficiency, as it remains stable in the later iterations compared with other methods. Box plot analysis confirms HGWPSO's minimal performance variation, reinforcing its stability in practical applications.
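
To make the notion of minimizing cost under design constraints concrete, the sketch below evaluates the pressure vessel problem in the form commonly used in the metaheuristics literature, with a simple static penalty. This is an assumption-laden illustration: the coefficients follow the widely cited formulation and may differ from the exact variant used in this study (for example in how discrete thickness values are handled), and the function names, the penalty weight, and the two test points are hypothetical.

```python
import math


def pressure_vessel_cost(x):
    """Material, forming, and welding cost in the commonly cited formulation."""
    ts, th, r, length = x  # shell thickness, head thickness, radius, length
    return (0.6224 * ts * r * length
            + 1.7781 * th * r ** 2
            + 3.1661 * ts ** 2 * length
            + 19.84 * ts ** 2 * r)


def pressure_vessel_constraints(x):
    """Constraint values g_i(x); a design is feasible when every g_i <= 0."""
    ts, th, r, length = x
    return [
        -ts + 0.0193 * r,                       # minimum shell thickness
        -th + 0.00954 * r,                      # minimum head thickness
        -math.pi * r ** 2 * length
        - (4.0 / 3.0) * math.pi * r ** 3
        + 1_296_000.0,                          # minimum enclosed volume
        length - 240.0,                         # maximum length
    ]


def penalized_cost(x, penalty=1e6):
    """Static quadratic penalty (assumed scheme, not the paper's)."""
    violation = sum(max(0.0, g) ** 2 for g in pressure_vessel_constraints(x))
    return pressure_vessel_cost(x) + penalty * violation


feasible_design = (1.0, 1.0, 45.0, 150.0)    # satisfies all four constraints
infeasible_design = (1.0, 1.0, 40.0, 200.0)  # violates the volume constraint
```

For the feasible design the penalized cost equals the raw cost, while the infeasible design is pushed far above its raw cost, which is what steers a population-based optimizer back toward the feasible region.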

The obtained results confirm that HGWPSO consistently obtains better solutions while satisfying the constraints, making it suitable for real-world engineering applications. Moreover, HGWPSO achieved the minimum optimal value, demonstrating that it consistently reaches the optimum across multiple independent runs.

Practical implications

The obtained results have important implications for the practical development of optimization algorithms. They show that HGWPSO is effective on complex problems, reaching optimum solutions with better computational efficiency and converging to the best optimum values more consistently than the other optimization methods. For example, in pressure vessel design, HGWPSO achieves a 71.61% improvement. In compression spring design and gear train design, the improvement is 99%, indicating the ability of the algorithm to handle constraints. For three-bar truss design, the average performance of HGWPSO improves by 53.46%, offering better performance and reliability for mechanical design problems. For cantilever beam design, the improvement is 71.92%, and for welded beam design it is 99%. When applied to transmission line parameter estimation, the improvement depends on the bundle configuration: 76.91% with two-bundle conductors, 43.94% with three-bundle conductors, and 46.57% with four-bundle conductors. These improvements matter for optimizing power system parameters and transmission efficiency. Finally, in reactive power planning, HGWPSO provides an improvement of 1.02%, which reflects its stability and effectiveness. In addition, HGWPSO performs better on the CEC_2022 benchmark functions, is computationally more efficient, and achieves better solutions with lower computational times, as confirmed in Table 3. Furthermore, by reducing computing costs and improving solution quality, the proposed method can support data-driven decision-making, enabling decision-makers to implement more successful strategies based on optimized results.
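
The percentages above are relative improvements in the average best value of a minimization problem. The exact formula is not restated in this section, so the sketch below assumes the usual relative-difference definition; the function name and the sample numbers are hypothetical and are not results from the paper.

```python
def improvement_percent(reference, proposed):
    """Relative improvement of `proposed` over `reference` (minimization).

    Assumed definition: (reference - proposed) / |reference| * 100.
    """
    if reference == 0:
        raise ValueError("reference value must be non-zero")
    return (reference - proposed) / abs(reference) * 100.0


# Hypothetical average best values, chosen only to illustrate the arithmetic.
moderate_gain = improvement_percent(reference=2.0, proposed=0.5)
large_gain = improvement_percent(reference=1e-8, proposed=1e-10)
```

Under this definition, an improvement close to 99% means the proposed method's average value is roughly two orders of magnitude smaller than the reference, which is typical for objectives whose optimum lies near zero.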

Conclusion, discussion, and future work

This section presents the discussion and conclusions and provides suggestions for future research work.

Discussion

The HGWPSO algorithm demonstrates significant strengths in its rapid convergence and ability to handle complex optimization problems, as shown by its performance on a range of CEC_2022 benchmark functions, from F1_CEC_2022 to F4_CEC_2022 and F9_CEC_2022 to F10_CEC_2022 (Fig. 6). In functions F1_CEC_2022 to F4_CEC_2022, which vary in complexity and dimensionality, HGWPSO exhibits rapid convergence toward near-optimal solutions, outperforming other algorithms in terms of both accuracy and efficiency. The algorithm performs particularly well in F1_CEC_2022 and F2_CEC_2022, where it stabilizes early in the optimization process, demonstrating its ability to explore the search space effectively and prevent premature convergence. When tested on more nonlinear and constrained functions, such as F3_CEC_2022 and F4_CEC_2022, HGWPSO strikes a strong balance between exploration and exploitation, allowing it to avoid local optima and navigate toward global solutions efficiently. Moreover, the algorithm performs strongly on the complex, multi-modal functions F9_CEC_2022 and F10_CEC_2022, which are known to be challenging. HGWPSO handles these functions with better accuracy and a stable convergence rate. The better convergence and low performance fluctuations on these functions confirm the performance of HGWPSO in terms of computational efficiency and solution quality.

Conclusion

This research presents HGWPSO, a hybrid optimization algorithm that effectively combines the strengths of GWO and PSO to solve complex engineering design problems. HGWPSO ensures solution diversity, accelerates convergence speed, prevents premature convergence, and maintains a strong balance between exploration and exploitation. The main findings of this research work are explained as follows.

- Extensive performance evaluation using the CEC_2022 benchmark functions and comparative analysis against established optimization algorithms confirm the better efficiency of HGWPSO in solution quality and convergence speed. Box plot analysis further validates its efficiency.

- The proposed algorithm obtained the 1st rank in the majority of engineering design problems, including pressure vessel design, compression spring design, three-bar truss design, gear train design, cantilever beam design, welded beam design, transmission line parameters with different bundle conductors, and reactive power planning problems.

- HGWPSO consistently achieves optimal or near-optimal solutions in all test cases: 5.9864E+03 in Case 1, 5.9864E+03 in Case 2, 263.9044 in Case 3, 1.0847E-10 in Case 4, and 1.3425 and 1.5344 in Case 5 and Case 6, respectively. In Case 7, the proposed method obtained optimal values of 17.6182, 9.5877, and 3.5102 for two, three, and four bundle conductors, respectively. Finally, it obtained 0.0680 in Case 8. These results confirm the robustness and versatility of HGWPSO across complex engineering problems.

- The obtained results confirm that HGWPSO prevents premature convergence while maintaining a balance between exploration and exploitation, obtaining better accuracy and convergence.
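
As a rough illustration of how GWO-style leader guidance and a PSO-style velocity update can be combined, the sketch below implements one common hybridization pattern. It is not the authors' HGWPSO update rule: the way the three leader-guided candidates feed the velocity, the inertia weight, the acceleration factor of 0.5, and all names are assumptions made for this example.

```python
import random


def sphere(x):
    """Simple test objective with its minimum of 0 at the origin."""
    return sum(v * v for v in x)


def hybrid_gwo_pso(objective, dim, bounds, wolves=20, iterations=100, seed=0):
    """Illustrative GWO/PSO hybrid; requires wolves >= 3."""
    rng = random.Random(seed)
    lo, hi = bounds
    pos = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(wolves)]
    vel = [[0.0] * dim for _ in range(wolves)]
    leaders = []   # up to three (score, position) pairs, best first
    history = []   # best score known at the start of each iteration
    for t in range(iterations):
        # Keep the three best points seen so far as alpha, beta, and delta.
        scored = leaders + [(objective(p), p[:]) for p in pos]
        scored.sort(key=lambda pair: pair[0])
        leaders = scored[:3]
        history.append(leaders[0][0])
        a = 2.0 * (1.0 - t / iterations)   # GWO control parameter, 2 -> 0
        w = 0.5 + rng.random() / 2.0       # inertia weight (assumed form)
        for i in range(wolves):
            for d in range(dim):
                pull = 0.0
                for _, leader in leaders:
                    A = 2.0 * a * rng.random() - a
                    C = 2.0 * rng.random()
                    dist = abs(C * leader[d] - pos[i][d])
                    guide = leader[d] - A * dist   # GWO candidate position
                    pull += 0.5 * rng.random() * (guide - pos[i][d])
                vel[i][d] = w * (vel[i][d] + pull)  # PSO-style velocity update
                pos[i][d] = min(hi, max(lo, pos[i][d] + vel[i][d]))
    return leaders[0], history


best, history = hybrid_gwo_pso(sphere, dim=5, bounds=(-10.0, 10.0))
```

Because the leaders are retained across iterations, the recorded best value never gets worse, while the shrinking parameter `a` gradually shifts the search from exploration toward exploitation.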

Future work

Future research could explore several directions to further improve HGWPSO, including the hybridization of GWO and PSO with other advanced optimization and deep learning techniques to further enhance performance. Additionally, integrating adaptive penalty functions could improve constraint handling by effectively penalizing constraint violations within the fitness function. Further studies may also investigate the impact of different evaluation functions on HGWPSO's convergence behavior and local exploitation capabilities. Expanding the application of HGWPSO to multi-objective and real-world engineering problems can provide deeper insights into its effectiveness and adaptability.
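
One simple form that an adaptive penalty could take is sketched below, purely as an illustration of the idea and not as a proposal from the paper: the penalty coefficient grows while the current best solution is infeasible and relaxes once it becomes feasible. The function names and the growth and shrink factors are hypothetical.

```python
def total_violation(constraint_values):
    """Sum of violations for constraints written as g_i(x) <= 0."""
    return sum(max(0.0, g) for g in constraint_values)


def penalized_fitness(objective_value, constraint_values, coefficient):
    """Objective plus a penalty proportional to the total violation."""
    return objective_value + coefficient * total_violation(constraint_values)


def adapt_coefficient(coefficient, best_is_feasible, grow=2.0, shrink=0.5):
    """Tighten the penalty while the best solution is infeasible, else relax it."""
    return coefficient * (shrink if best_is_feasible else grow)


# Hypothetical sequence: the best solution is infeasible twice, then feasible.
coefficient = 10.0
for feasible in (False, False, True):
    coefficient = adapt_coefficient(coefficient, feasible)
```

In an optimizer loop, `adapt_coefficient` would be called once per iteration after the population is evaluated, so that the pressure toward feasibility follows the state of the search instead of being fixed in advance.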

Data availability

The data will be available from the first author, Muhammad Suhail Shaikh, upon reasonable request.

Abbreviations

- GWO: Grey wolf optimization
- PSO: Particle swarm optimization
- MFO: Moth flame optimization
- WOA: Whale optimization algorithm
- SCA: Sine cosine algorithm
- ALO: Ant lion optimizer
- COA: Crayfish optimization algorithm
- GJO: Golden jackal optimization
- GWODE: Grey wolf optimization-differential evolution
- GAGWO: Genetic algorithm-grey wolf optimization
- PSODE: Particle swarm optimization-differential evolution
- PSOGA: Particle swarm optimization-genetic algorithm
- PSOACO: Particle swarm optimization-ant colony optimization
- HMFOPSO: Hybrid moth flame optimization-particle swarm optimization
- HPSOALO: Hybrid particle swarm optimization-ant lion optimizer
- HGWOWOA: Hybrid grey wolf optimization-whale optimization algorithm
- HGWOSCA: Hybrid grey wolf optimization-sine cosine algorithm
- QDE: Quantum differential evolution
- WOASCALF: Whale optimization algorithm based on sine cosine algorithm and levy flight
- TO-PSO: Enhanced particle swarm optimization
- QRS: Quasi-random sequence
- WSOA: Whale optimization with seagull algorithm
- SOA: Seagull algorithm
- MHCSA: Memory-based hybrid crow search algorithm
- MBFPA: Hybrid metaheuristic algorithm and butterfly optimization algorithm
- COASaDE: Crayfish optimization algorithm and self-adaptive differential evolution
- IBSSA: Improved beetle antennae search-based sparrow search algorithm
- FSGWO: Fuzzy strategy grey wolf optimizer
- LSO: Light spectrum optimizer
- SMA-GM: Slime mould algorithm based on a Gaussian mutation
- DGSWOA: Dynamic gain-sharing whale optimization algorithm
- IGWO: Improved grey wolf optimization
- RIME: RIME optimization algorithm
- SMA: Slime mould algorithm
- CAOTI: Caoti optimization algorithm
- MBO: Monarch butterfly optimization
- NFL: No free lunch theorem
- RPP: Reactive power planning
- SD: Standard deviation
- Nc2, Nc3, and Nc4: Two bundle, three bundle and four bundle conductor capacitance

References

Ibrahim, R. A., Abd Elaziz, M. & Lu, S. Chaotic opposition-based grey-wolf optimization algorithm based on differential evolution and disruption operator for global optimization. Expert Syst. Appl. 108, 1–27 (2018).

Shaikh, M. S., Hua, C., Jatoi, M. A., Ansari, M. M. & Qader, A. A. Application of grey wolf optimisation algorithm in parameter calculation of overhead transmission line system. IET Sci. Meas. Technol. 15(2), 218–231 (2021).

Nadimi-Shahraki, M. H., Zamani, H., Asghari Varzaneh, Z. & Mirjalili, S. A systematic review of the whale optimization algorithm: Theoretical foundation, improvements, and hybridizations. Arch. Comput. Methods Eng. 30(7), 4113–4159 (2023).

Fausto, F., Reyna-Orta, A., Cuevas, E., Andrade, Á. G. & Perez-Cisneros, M. From ants to whales: Metaheuristics for all tastes. Artif. Intell. Rev. 53, 753–810 (2020).

Hashim, F. A., Houssein, E. H., Mabrouk, M. S., Al-Atabany, W. & Mirjalili, S. Henry gas solubility optimization: A novel physics-based algorithm. Future Gener. Comput. Syst. 101, 646–667 (2019).

Mirjalili, S., Mirjalili, S. M. & Lewis, A. Grey wolf optimizer. Adv. Eng. Softw. 69, 46–61 (2014).

Kennedy, J. & Eberhart, R. Particle swarm optimization. In Proceedings of ICNN’95-international conference on neural networks Vol. 4 1942–1948 (IEEE, 1995).

Mirjalili, S. Moth-flame optimization algorithm: A novel nature-inspired heuristic paradigm. Knowl.-Based Syst. 89, 228–249 (2015).

Mirjalili, S. & Lewis, A. The whale optimization algorithm. Adv. Eng. Softw. 95, 51–67 (2016).

Mirjalili, S. SCA: A sine cosine algorithm for solving optimization problems. Knowl.-Based Syst. 96, 120–133 (2016).

Mirjalili, S. The ant lion optimizer. Adv. Eng. Softw. 83, 80–98 (2015).

Jia, H., Rao, H., Wen, C. & Mirjalili, S. Crayfish optimization algorithm. Artif. Intell. Rev. 56(Suppl 2), 1919–1979 (2023).

Chopra, N. & Ansari, M. M. Golden jackal optimization: A novel nature-inspired optimizer for engineering applications. Expert Syst. Appl. 198, 116924 (2022).

El-Fergany, A. A. & Hasanien, H. M. Single and multi-objective optimal power flow using grey wolf optimizer and differential evolution algorithms. Electr. Power Compon. Syst. 43(13), 1548–1559 (2015).