Abstract

Human activity recognition (HAR) is essential for applications such as healthcare monitoring, fitness tracking, and smart environments, yet deploying accurate and interpretable models on resource-constrained devices remains challenging. In this paper, we propose XTinyHAR, a lightweight, transformer-based unimodal framework trained via cross-modal knowledge distillation from a multimodal teacher. Our model incorporates temporal positional embeddings and attention rollout to enhance sequential feature extraction and interpretability. Evaluated on UTD-MHAD and MM-Fit, XTinyHAR achieves test accuracies of 98.71% and 98.55% with F1-scores that match these results and Cohen’s Kappa above 0.98. The model remains lightweight (2.45 MB) with fast inference (3.1 ms on CPU, 1.2 ms on GPU) and low computational cost (11.3M FLOPs). Extensive ablation studies confirm the contribution of each component, and subject-wise evaluations demonstrate strong generalization across users. These results highlight XTinyHAR’s potential as a high-performance, interpretable, and deployable solution for real-time HAR on edge devices. Our codes are available at: https://github.com/Ism-ail11/XTinyHAR

Similar content being viewed by others

Introduction

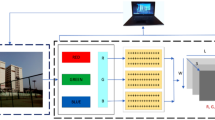

HAR1 has emerged as a critical research area in pervasive computing, with wide-ranging applications in healthcare monitoring, fitness tracking, assisted living, smart homes, and HCI2. The ability to accurately detect and classify human activities from sensor data has the potential to revolutionize real-world scenarios, particularly in environments where continuous, unobtrusive, and real-time behavior monitoring is essential (see Fig. 1). Traditionally, HAR systems have relied on handcrafted features or classical machine learning techniques applied to inertial sensor data3. While these methods offer lightweight deployment, they often fall short in capturing the temporal and spatial complexities of human motion, especially in uncontrolled environments4.

An illustration of HAR process using conventional approaches.

HAR leverages diverse sensing modalities to classify daily human behaviors. Common signal sources include inertial sensors (e.g., accelerometers and gyroscopes), video-based skeleton sequences, speech, and physiological signals such as EMG or ECG. Among these, inertial sensing has become dominant due to its low cost, ubiquity in smartphones and wearables, and robustness to lighting and occlusion.

Recent advances in deep learning, particularly CNNs and RNNs5, have significantly improved HAR performance by automatically extracting hierarchical features from raw sensor inputs6,7. More recently, Transformer architectures originally designed for NLP have shown great promise in time-series modeling due to their capacity to model long-range dependencies and global contextual relationships8,9. In HAR, transformer-based models have demonstrated superior recognition accuracy when applied to multimodal data sources such as inertial sensors, skeleton keypoints, or even audio-visual streams10. However, these architectures typically require high computational resources and extensive sensing infrastructure, limiting their practical deployment on mobile and edge devices with constrained processing power and battery life11.

Moreover, while multimodal HAR systems leveraging skeleton and inertial data provide rich and complementary information12, such setups are impractical for many real-world deployments due to cost, occlusion, lighting constraints, or the need for specialized hardware (e.g., depth cameras)13. In contrast, inertial measurement units (IMUs), embedded in ubiquitous devices such as smartphones and smartwatches, offer a scalable and cost-effective sensing modality14. The challenge, however, is how to distill the discriminative knowledge captured from multimodal data during training into lightweight models that operate solely on inertial inputs during inference without sacrificing accuracy or generalizability15.

Another critical aspect often overlooked in deep learning-based HAR is explainability16. As these systems are increasingly adopted in sensitive applications such as elderly care or physical rehabilitation, stakeholders must trust and understand the system’s decisions17. Current transformer-based HAR models provide little transparency into their inner workings18, making them difficult to interpret or debug in practice.

To address these limitations, this paper proposes XTinyHAR, a compact and interpretable transformer-based HAR framework that combines the benefits of multimodal training and unimodal deployment. Specifically, XTinyHAR employs a knowledge distillation approach, where a powerful teacher model trained on both skeleton and inertial data transfers its knowledge to a lightweight student model that uses only inertial signals. The student model, dubbed the Inertial transformer (IT), is designed to be highly efficient and edge-friendly while retaining temporal modeling capabilities through patch-based attention mechanisms. Furthermore, the framework integrates a comprehensive suite of explainability techniques to offer insights into the model’s reasoning process and validate the success of cross-modal knowledge transfer.

The key contributions of this work are as follows:

-

Single-modality HAR via wearable IMUs: We target the challenging problem of HAR using only inertial signals from wearable devices, avoiding reliance on expensive or impractical sensing modalities such as video or skeleton inputs.

-

Methodological innovations in transformer design: We introduce temporal position embeddings, attention expansion modules, and a multimodal-to-unimodal distillation strategy to enable accurate HAR using only IMU signals.

-

Lightweight and deployable model architecture: The proposed Inertial Transformer (IT) is compact (0.62M parameters), fast (1.2 ms latency), and suitable for edge deployment on resource-constrained devices.

-

Strong results on benchmark datasets: XTinyHAR achieves 98.71% on UTD-MHAD and 98.55% on MM-Fit datasets using only inertial data, closely matching the multimodal teacher while significantly reducing hardware requirements.

Related works

This section reviews recent advancements in HAR focusing on deep learning and Transformer-based approaches, highlighting their methodologies, datasets, and comparative performance to contextualize the motivation for our proposed model.

Khatun et al.19 proposed a model combining CNN, LSTM, and a self-attention mechanism for HAR using wearable sensor data. The methodology involved preprocessing raw sensor data from accelerometers, gyroscopes, and linear acceleration sensors, segmenting the data into 10-second windows, and employing a CNN-LSTM architecture enhanced with self-attention to capture spatial and temporal features. The model was evaluated on three datasets: the authors’ own H-Activity dataset (collected from 10 participants performing activities like sitting/standing, walking, jogging, and running), as well as the publicly available MHEALTH and UCI-HAR datasets. The results demonstrated high accuracy, achieving 99.93% on the H-Activity dataset, 98.76% on MHEALTH, and 93.11% on UCI-HAR. Khan et al.20 developed a hybrid deep learning approach combining CNN and LSTM to address the challenges of HAR. The methodology leverages CNN for spatial feature extraction and LSTM for temporal feature learning, aiming to improve recognition accuracy for real-world, long-term HAR tasks. The study utilized a newly collected dataset comprising 12 different physical activities performed by 20 participants, captured using a Kinect V2 sensor to extract 25 skeleton joints per frame. The proposed CNN-LSTM model achieved an accuracy of 90.89%, outperforming traditional machine learning and standalone deep learning models.

Surek et al.21 presented a deep learning-based approach for video-based HAR using ResNet and ViT enhanced with self-supervised learning techniques. The methodology involved preprocessing video frames from the HMDB51 dataset, which contains 6,849 videos across 51 action classes, and evaluating two architectures: a 3D ResNet-50 for spatiotemporal feature extraction and a 2D ViT combined with LSTM to capture temporal dependencies. The self-distillation technique DINO (self-DIstillation with NO labels) was employed to improve feature learning without labeled data. The results demonstrated strong performance, with the hybrid 2D ViT-LSTM model achieving 96.7% training accuracy and 41.0% test accuracy on HMDB51, outperforming other self-supervised methods. Joudaki et al.22 introduced a novel hybrid technique for HAR that combines a two-dimensional convolutional restricted Boltzmann machine (2D Conv-RBM) with a LSTM network, enhanced by an optimized frame selection mechanism. The methodology leverages the 2D Conv-RBM for efficient spatial feature extraction, such as edges and textures, while the LSTM captures temporal dependencies across video frames. A smart frame selection mechanism reduces computational costs by identifying and processing only the most informative frames. The model was evaluated on three benchmark datasets KTH, UCF Sports, and HMDB51 achieving accuracies of 97.3%, 94.8%, and 81.5%, respectively.

Thakur et al.23 designed a novel approach for HAR that integrates a RNN and a CNN with a hybrid Grey Wolf Optimizer–Whale Optimization Algorithm (GWO-WOA) for feature selection. The methodology leverages CNN for spatial feature extraction and RNN for temporal pattern recognition, while the GWO-WOA optimization enhances feature selection to improve model performance. The study utilized a publicly available dataset collected from smartphone sensors, including accelerometer, gyroscope, magnetometer, and GPS data, covering activities such as inactivity, walking, active movement, and driving. The proposed model achieved an accuracy of 95%. Khan et al.24 introduced an ensemble deep learning framework for transition-aware HAR, combining 1D-CNN and LSTM networks to improve the detection of both static and dynamic activities as well as postural transitions. The methodology leveraged the strengths of CNNs for spatial feature extraction and LSTMs for temporal dependencies, integrating them into a unified model to enhance classification accuracy. The authors evaluated their approach on two datasets: the HAPT dataset, which includes 12 activities (6 basic and 6 transitional), and the Human Activity dataset, featuring 5 dynamic and static activities. Their results demonstrated superior performance, achieving 97.84% accuracy on the HAPT dataset and 99.04% on the HA dataset, outperforming existing state-of-the-art methods in HAR.

Several studies have explored the use of transformer-based models for HAR. Saidani et al.25 proposed an efficient HAR system using hybrid features and a transformer model to improve accuracy and robustness in complex scenarios. The methodology involved extracting a diverse set of 273 features including MFCCs, mel-spectrograms, and chromagrams from sensor data, followed by data augmentation techniques like time stretching and Gaussian noise to enhance generalization. The transformer model was then employed to capture long-range dependencies in the sequential data. The system was evaluated on three public datasets (PAMAP2, UCI HAR, and WISDM), achieving impressive accuracies of 98.2%, 98.6%, and 97.3%, respectively. Sun et al.26 developed a DCTCSS model to address the challenge of HAR with limited labeled data from wearable sensors. The methodology combines deep CNNs with transformer architectures under the BYOL contrastive learning framework, leveraging random data augmentation to enhance feature representation. The model was evaluated on three public datasets UCI-HAR, Skoda, and Mhealth covering daily life, industrial, and health monitoring scenarios. Results demonstrated superior performance, achieving mean F1 scores of 95.64%, 88.39%, and 98.40% on the respective datasets using only 10% labeled data.

Liu et al.27 presented TransTM, a device-free HAR method using RFID signals, which leverages a Time-streaming Multiscale Transformer to process raw RSSI data without manual preprocessing. The model combines multi-head self-attention and multiscale convolutional blocks to capture both temporal and spatial features, enabling recognition of single-person activities and human-to-human interactions. Evaluated on a newly collected dataset of 1.44 million RFID samples, TransTM achieved a state-of-the-art average accuracy of 99.1%, outperforming CNN- and LSTM-based baselines by up to 8.0%. The method also demonstrated strong generalization on WiFi-based HAR tasks, with accuracies of 95.5%, 94.7%, and 93.3% on public datasets. Han et al.28 designed HAR-ViT, a novel HAR method based on ViT designed to address challenges in skeleton-based action recognition, such as over-smoothing in Graph CNs and capturing long-range dependencies between joints. The methodology integrates an enhanced Adaptive Graph Convolutional Layer (eAGCL) from 2s-AGCN into ViT to process spatio-temporal skeleton data, leveraging a redefined position encoder to handle non-sequenced information and a transformer encoder to compress sequence features efficiently. The model was evaluated on three benchmark datasets NTU RGB+D 60, NTU RGB+D 120, and Kinetics-Skeleton 400 demonstrating state-of-the-art performance with significant improvements in accuracy (e.g., 91.06% on NTU60-Xsub and 96.73% on NTU60-Xview) and reduced computational complexity compared to existing methods.

Al-qanees et al.29 proposed PCNN-Transformer, a hybrid CNNs and Transformer architectures for HAR and fall detection using wearable sensor data. The methodology leverages parallel CNN blocks and Transformer encoders to capture both local and global temporal features, enhanced by a residual mapping mechanism to reduce computational complexity. The model was evaluated on three public datasets SiSFall, UniMiB-SHAR, and MobiAct demonstrating high accuracy rates, such as 99.95% for binary fall detection on SiSFall and 98.68% on UniMiB-SHAR. Li et al.30 introduced the DMFT network for multi-modal HAR, addressing challenges such as data quality issues and computational complexity in real-world deployments. The methodology integrates a teacher-student framework, where a teacher model employs a MSTT and a Temporal Mid-Fusion module to extract and fuse salient features, while a lightweight student model is trained via knowledge distillation for efficient edge deployment. The authors evaluated DMFT on two public datasets, UTD-MHAD and MMAct, using subject-independent protocols, and demonstrated competitive performance, achieving 93.97% accuracy on UTD-MHAD and 83.29% F1-score on MMAct.

As summarized in Table 1, prior works on HAR often suffer from limitations including dependence on multimodal input (e.g., video, skeleton, or audio), large model size unsuitable for edge deployment, or a lack of interpretability. Most Transformer-based methods focus on accuracy improvements without addressing energy efficiency, latency, or real-world deployability. Furthermore, interpretability techniques like attention visualization or attributions are rarely integrated. In contrast, XTinyHAR addresses these gaps by combining a lightweight ViT backbone with cross-modal distillation, edge deployment profiling, and explainability tools–making it practical for ubiquitous, low-power HAR applications.

Proposed methodology

This section details the architecture, data processing pipeline, and training strategy of the proposed XTinyHAR model, including its multimodal teacher, lightweight student, and explainability components for efficient and interpretable HAR.

Data description

To assess the performance of the proposed XTinyHAR model, we conduct experiments on two publicly available and widely used HAR datasets: UTD-MHAD and MM-Fit. Both datasets provide synchronized inertial and skeleton data, which are essential for training a multimodal teacher model and transferring knowledge to an inertial-only student network through knowledge distillation.

The UTD-MHAD dataset 31 is a benchmark dataset designed for HAR tasks involving multiple sensing modalities. It includes data from 8 subjects performing 27 actions, such as walking, clapping, punching, kicking, and sit-to-stand transitions. Each subject repeats every activity four times, resulting in 861 labeled samples. The dataset includes two synchronized data modalities: skeleton data obtained from a Microsoft Kinect sensor, which captures 3D positions of 20 joints per frame, and inertial data collected from a wearable sensor mounted on the wrist, recording tri-axial accelerometer, gyroscope, and magnetometer signals at 50 Hz. In our setup, we train the teacher model using both skeleton and inertial modalities, while the student model is restricted to inertial input only. The clean acquisition setup and accurate synchronization between the modalities make UTD-MHAD a strong candidate for controlled evaluation of the proposed model and its distillation effectiveness.

The MM-Fit dataset 32 is a large-scale multimodal dataset created for fine-grained fitness activity recognition in unconstrained environments. It features recordings from more than 70 participants performing 12 distinct exercises, including squats, lunges, jumping jacks, shoulder presses, and more. These activities are captured using both visual and wearable sensing devices. Skeleton data is extracted from RGB videos using the OpenPose library, providing 2D joint coordinates over time. While this introduces more noise compared to depth-based sensors like Kinect, it offers a more realistic representation of visual input variability encountered in real-world scenarios. Concurrently, inertial data is collected via wearable IMUs placed on various body parts, such as the wrists, ankles, and torso, measuring both acceleration and angular velocity at high sampling rates. For our purposes, the teacher model is trained using both modalities, and the student model uses only the IMU data. The diversity in subjects, motion complexity, and environmental conditions in MM-Fit presents a challenging yet valuable benchmark for evaluating the robustness and generalizability of the unimodal student model.

Data pre-processing

A carefully designed pre-processing pipeline is essential for ensuring high-quality, aligned, and interpretable input to both the multimodal teacher and the unimodal student models. Since the XTinyHAR model involves both skeleton and inertial data streams with different sampling characteristics and statistical properties, a combination of traditional and tailored techniques is used to transform raw sequences into transformer-ready embeddings. This section provides an in-depth explanation of each component of the pre-processing pipeline, with a focus on how it contributes to learning effectiveness and model generalization.

(1) Sliding window segmentation33

All sensor sequences are segmented into fixed-length windows using a sliding window approach with partial overlap. Specifically, we use a window size W (e.g., 100 time steps) and a stride of W/2 to maintain overlap between adjacent segments. This method ensures that sufficient temporal context is captured in each input sample while increasing the number of training examples available. For HAR, overlapping windows are particularly beneficial in capturing action transitions (e.g., from sitting to standing) and handling subtle temporal boundary inconsistencies. Moreover, this segmentation helps prevent label leakage across training and test folds by treating segments as atomic units.

(2) Signal normalization34

Inertial sensor readings, especially accelerometer and gyroscope values, are sensitive to variations in device placement, orientation, and subject dynamics. To ensure that the model learns relative motion patterns rather than absolute signal magnitudes, we normalize each channel independently using z-score normalization: zero mean and unit variance. This step is crucial to prevent certain axes or modalities from dominating the learning process due to scale differences. For skeleton data, we re-center joint coordinates around a central reference joint (e.g., pelvis or spine base) and normalize based on limb length or skeletal bounding box size. This ensures spatial invariance to subject size and positioning.

(3) Modality alignment and resampling35

In real-world multimodal HAR datasets like MM-Fit, different modalities (e.g., IMUs and OpenPose-based skeletons) are often captured at different frame rates and are not inherently synchronized. Misalignment between these streams can mislead the teacher model during multimodal fusion, especially in attention-based architectures that rely on strict temporal correspondence. To address this, we resample both modalities to a common frequency (e.g., 50 Hz) using linear interpolation. Timestamps are used to match the nearest corresponding frame across streams. This alignment ensures that the skeleton and inertial features used in the same training sample represent the same action phase, which is vital for learning accurate cross-modal representations and enabling effective knowledge transfer during distillation.

(4) Dynamic patch construction for transformer input36

Transformers operate on discrete tokens; therefore, continuous time-series data must be converted into patch-based representations36. For inertial data, we divide each segmented window into \(N = \left\lfloor W/P \right\rfloor\) non-overlapping patches of size \(P \times C_{\text {iner}}\), which are flattened and linearly projected into a D-dimensional latent space. While prior HAR methods rely on fixed patch sizes, our approach introduces a dynamic patching mechanism, where the patch size P is adaptively selected based on the statistical variability of motion within each window.

Specifically, we compute the intra-window motion variance using the average per-axis standard deviation of the inertial channels:

where \({\bf x}_c \in \mathbb {R}^{W}\) denotes the signal of the c-th inertial axis (e.g., accelerometer X, Y, Z and gyroscope X, Y, Z) over the window of length W. The resulting scalar \(\mathscr {V}_w\) captures the overall motion intensity and is used to determine the appropriate patch size:

Here, \(P_{\text {min}}\) and \(P_{\text {max}}\) denote the lower and upper bounds of patch sizes (empirically set to 10 and 30), while \(\tau _{\text {high}}\) and \(\tau _{\text {low}}\) define motion variance thresholds to trigger fine-grained or coarse-grained patching. For intermediate cases, we apply linear interpolation to smoothly adjust P based on \(\mathscr {V}_w\). This dynamic adjustment enables fine temporal resolution for fast, dynamic actions (e.g., clapping, waving) and reduces redundancy for slow or static behaviors (e.g., standing, sitting), enhancing temporal sensitivity and computational efficiency without sacrificing performance. For skeleton sequences, patches are constructed by grouping joint positions over a temporal interval, preserving both spatial and motion continuity. This dynamic scheme allows the Transformer to operate efficiently without sacrificing representational granularity.

(5) Noise-robust augmentation and filtering37

Sensor noise is an inevitable component of real-world HAR data, especially from wearable IMUs. Rather than eliminating all noise, we introduce controlled noise-aware augmentation during training to improve model robustness. Gaussian noise with low variance is added to the inertial signals, simulating slight tremors, misplacement, or environmental interference. Additionally, a first-order low-pass Butterworth filter (with a cutoff frequency of 20 Hz) is applied to the accelerometer signals to remove high-frequency artifacts not relevant to human movement. For skeleton data, we apply a temporal moving average filter (e.g., over 3 frames) to smooth sudden keypoint jitter introduced by OpenPose or Kinect tracking errors.

(6) Final embedding and encoding38

After segmentation, normalization, patching, and alignment, each patch is projected to a fixed-size embedding space using a learnable linear transformation. To encode temporal order which is otherwise ignored in vanilla Transformers we add learnable positional embeddings to each token. A class token is prepended to each sequence, allowing the Transformer to aggregate global context across all patches. These encoded sequences are then passed to the transformer-based encoders of the multimodal teacher or the unimodal student. This consistent encoding scheme ensures compatibility across modalities and maintains the structural requirements of the Transformer architecture.

Introducing the proposed model

In this section, we present the architecture and components of our proposed model (see Fig. 2), which leverages knowledge distillation to train a lightweight Inertial Transformer model for HAR using only inertial data.

Overview of the proposed Model XTinyHAR. A lightweight inertial transformer (right) is trained via knowledge distillation from a multimodal spatio-temporal ConvTransformer (left), which integrates skeleton and inertial data. The student model learns to replicate the teacher’s predictions using only inertial input.

Inertial transformer (IT)

The IT serves as the compact and efficient student model in the XTinyHAR framework. Unlike conventional transformer architectures that utilize both encoder and decoder modules for tasks such as sequence-to-sequence translation39, IT employs only the encoder. This is a deliberate design choice, as activity recognition is a classification task where the goal is to map a time-series input to a discrete activity label, rather than generating output sequences. Thus, only the encoder is required to extract meaningful temporal and spatial patterns from inertial signals.

(1) ViT-inspired temporal modeling

The architecture of IT draws inspiration from the Vision Transformer (ViT)40, where a 2D image is first partitioned into fixed-size patches that are flattened and linearly embedded. In the context of inertial data, we treat the multichannel time-series input as a 2D structure with shape \((W, C_{\text {iner}})\), where W is the number of time steps in the window and \(C_{\text {iner}}\) is the number of inertial channels (e.g., accelerometer and gyroscope axes).

To mimic the ViT structure, the sequence is divided into \(N = \left\lfloor \frac{W}{P} \right\rfloor\) non-overlapping temporal patches, each spanning P time steps. Every patch \({\bf x}_p^i \in \mathbb {R}^{P \cdot C_{\text {iner}}}\) is flattened and projected into a D-dimensional embedding space using a learnable linear transformation:

This patch-wise embedding enables the model to focus on localized patterns within short intervals of the signal, while still being able to reason over long-term dependencies across patches via attention.

where: \({\bf x}_p^i \in \mathbb {R}^{P \cdot C_{\text {iner}}}\) is the \(i{\text {th}}\) temporal patch, flattened into a vector from P time steps and \(C_{\text {iner}}\) sensor channels. \({\bf W}_e \in \mathbb {R}^{D \times (P \cdot C_{\text {iner}})}\) is the learnable linear projection matrix that maps each patch to a D-dimensional embedding space. \({\bf b}_e \in \mathbb {R}^{D}\) is a learnable bias term added after projection. \({\bf z}_i^0 \in \mathbb {R}^{D}\) represents the embedded vector of the \(i{\text {th}}\) patch in the transformer input space. \(N = \left\lfloor \frac{W}{P} \right\rfloor\) is the number of patches obtained by segmenting the window of W time steps using a patch size of P. This formulation enables the transformer to process each temporal segment independently while preserving temporal alignment across attention layers.

(2) Class token and positional embedding41

Following the transformer formulation, a learnable class token \({\bf x}_{\text {class}}\) is appended to the beginning of the patch embedding sequence. This token serves as a global summary vector that, through the process of self-attention, aggregates information from all other tokens in the sequence. To preserve the order of patches crucial for time-series, we add a learnable one-dimensional positional embedding \({\bf E}_{\text {pos}} \in \mathbb {R}^{(N+1) \times D}\) to the input sequence:

In this formulation, \({\bf z}_0 \in \mathbb {R}^{(N+1) \times D}\) represents the initial input sequence to the transformer encoder, consisting of N patch embeddings and one class token, all projected to a common D-dimensional space. Each patch \({\bf x}_p^i\) is linearly transformed using the matrix \({\bf E} \in \mathbb {R}^{\frac{(W \cdot C_{\text {iner}})}{P} \times D}\), where W is the time window length, \(C_{\text {iner}}\) is the number of inertial channels, and P is the patch length in time steps. The positional embedding \({\bf E}_{\text {pos}} \in \mathbb {R}^{(N+1) \times D}\) is added element-wise to inject information about temporal order into the sequence, which is critical for modeling the sequential nature of human activity signals. This setup ensures that the model can learn both the content and position of each patch, improving its ability to capture time-dependent patterns in HAR tasks.

(3) Transformer encoder and temporal feature extraction42

The embedded sequence is processed by a stack of L transformer encoder layers. Each encoder layer is composed of a multi-head self-attention (MHSA) mechanism43 followed by a feedforward MLP. Both components are wrapped with residual connections and preceded by Layer Normalization (LN) to promote stable and efficient training. The computations at the l-th encoder layer are as follows:

In this setup, the input to each transformer layer \({\bf Z}^{l-1} \in \mathbb {R}^{(N+1) \times D}\) represents the sequence of token embeddings from the previous layer, where N is the number of temporal patches and D is the embedding dimension. The MHSA module first normalizes this input using LN and computes attention across all token positions, including the class token, resulting in an output \({\bf Z}' \in \mathbb {R}^{(N+1) \times D}\). This is followed by a residual connection to preserve original information. The MLP block further processes the normalized \({\bf Z}'\), typically consisting of two linear layers with a GELU activation in between, producing the updated sequence \({\bf Z}^l \in \mathbb {R}^{(N+1) \times D}\). This structure allows each token to incorporate global context and model temporal dependencies, which are essential for accurately distinguishing between human activities that may share similar local patterns but differ in global temporal structure.

The self-attention mechanism enables the model to compute pairwise interactions between all patch tokens, allowing it to capture both short-range and long-range dependencies which are crucial in HAR for distinguishing activities with overlapping or variable-length motions.

(4) Multi-head self-attention and MLP layers

Each MHSA layer splits the input embedding into H attention heads. For each head h, we project the input into query, key, and value vectors:

where \(d_k = D / H\). The outputs of all heads are concatenated and linearly projected to reconstruct the original embedding size. This multi-head structure enables the model to attend to different temporal substructures and representations simultaneously, which is particularly useful when activity transitions are subtle or multi-phase in nature.

Following the attention layer, the MLP block enhances token representations via non-linear transformation:

In this context, the input to each attention head is a token embedding \({\bf z}_i \in \mathbb {R}^{D}\), which is linearly projected into queries \({\bf Q}_h \in \mathbb {R}^{(N+1) \times d_k}\), keys \({\bf K}_h \in \mathbb {R}^{(N+1) \times d_k}\), and values \({\bf V}_h \in \mathbb {R}^{(N+1) \times d_k}\), where \(d_k = D/H\) is the dimension of each attention head. The attention score matrix \({\bf Q}_h {\bf K}_h^\top \in \mathbb {R}^{(N+1) \times (N+1)}\) measures the relevance of all token pairs in the sequence. After computing the attention-weighted values, the outputs from all H heads are concatenated to form a matrix in \(\mathbb {R}^{(N+1) \times D}\) and then passed through a linear projection. The subsequent MLP block receives this sequence and transforms each token vector via a two-layer fully connected network with intermediate hidden size \(D_{\text {ff}}\) (commonly 2D or 4D), which expands the token representations before projecting them back to \(\mathbb {R}^D\). This architectural design allows richer feature transformation and enhances the model’s capacity to capture complex activity dynamics in time-series data.

(4) Compactness and deployment efficiency

To ensure the IT model remains lightweight for on-device deployment, we adopt a minimal configuration of just two transformer encoder blocks (\(L = 2\)), small embedding sizes (e.g., \(D = 64\)), and reduced MLP widths. This architecture results in a significantly smaller model size and computational footprint compared to standard transformers, while retaining competitive performance through effective modeling of temporal structure. The reduced depth also mitigates vanishing gradient issues and improves interpretability.

(5) Final classification and output

The output of the last encoder layer contains the contextualized embeddings for all input patches and the class token. We isolate the embedding corresponding to the class token \({\bf z}_{\text {class}}^L\) and feed it to a simple MLP classification head with a softmax layer to obtain the final prediction vector \(\hat{{\bf y}}\):

This token effectively summarizes the entire input window, making the final prediction robust to local noise while being sensitive to global activity context.

Summary and implications

The Inertial Transformer transforms raw time-series inertial data into a sequence of learned representations through a combination of linear patch embedding, positional encoding, and self-attention. Its compact yet expressive design enables it to learn from temporal dynamics and classify human activities with high efficiency. The use of ViT principles, tailored for sequential inertial data, allows the model to maintain strong performance while remaining lightweight enough for real-time inference on edge devices. Through knowledge distillation from a larger multimodal teacher model, the IT further inherits generalized activity representations.

Spatio-temporal ConvTransformer (ST-ConvT)

The ST-ConvT44 acts as the multimodal teacher model in our XTinyHAR framework. It is specifically designed to capture spatial dependencies from skeleton data and temporal dependencies from both skeleton and inertial modalities44. This model is composed of three tightly integrated components: the Spatial Block, the Temporal Block, and the Attention Feature Fusion (AFF) module. Each plays a unique role in extracting structured and complementary information from multimodal human activity data.

Spatial block The Spatial Block is responsible for extracting meaningful spatial relationships embedded in the skeleton data. This module is visually depicted in Fig. 1 (orange section) and is designed to handle the spatial configuration of human joints efficiently. Unlike 1D time-series processing, skeleton data consists of structured joint coordinates where spatial proximity holds semantic meaning such as joints on the same limb or in symmetrical configurations. To capture these patterns, the Spatial Block employs two sequential 2D convolutional layers. These layers benefit from translation-invariance, a key inductive bias of CNNs, which makes them particularly effective at modeling localized spatial dependencies regardless of joint ordering or body pose variance.

Let us denote the skeleton input as \({\bf X}_{\text {skl}} \in \mathbb {R}^{C_{\text {SK}} \times J_{\text {SK}} \times W_{\text {SK}}}\), where \(C_{\text {SK}}\) represents the number of channels in the skeleton data (e.g., joint coordinates or velocity features), \(J_{\text {SK}}\) is the number of predefined joints in the human pose skeleton, and \(W_{\text {SK}}\) is the temporal window size. The input is treated as a 3D tensor compatible with 2D convolutions, where the axes correspond to channels, joints (as spatial height), and time steps (as width), respectively.

To adapt this input to the standard convolutional format, we define \((C_{\text {in}}, H, W)\) for the convolution layer as \(C_{\text {in}} = C_{\text {SK}}, H = J_{\text {SK}}, W = W_{\text {SK}}\). Each 2D convolution layer uses a kernel of size (1, 9), which focuses on the time axis while maintaining the resolution across joints. This configuration enables the network to model motion correlations between adjacent joints over a temporal context of 9 frames, which is empirically shown to be sufficient for many human activities involving rhythmic limb motion or sequential transitions.

After passing through both convolution layers, the output of the Spatial Block is a feature tensor \({\bf s}_p \in \mathbb {R}^{C_{\text {out}} \times H_{\text {out}} \times W}\), where \(C_{\text {out}}\) is the number of output channels, \(H_{\text {out}}\) is the resulting height (joint feature dimension), and W is retained from the input. This output encapsulates joint-level motion descriptors distributed over time.

To align this representation with the Transformer architecture, the output tensor is reshaped into a patch-wise sequence. Assuming a patch size of P, the reshaped feature becomes \({\bf s}_p \in \mathbb {R}^{N \times (C_{\text {out}} \cdot H_{\text {out}} \cdot \frac{W}{P})}\), where \(N = \frac{W}{P}\) is the number of temporal patches. This transformation is essential to bridge the gap between convolutional encoding and sequence modeling via attention mechanisms.

The operation of the Spatial Block can be summarized as follows:

This reshaped feature sequence is then passed into the Temporal Block for self-attention-based temporal modeling. The Spatial Block, therefore, plays a vital role in translating joint-level pose data into a structured, temporally-aligned embedding that can be effectively processed by the rest of the Transformer pipeline.

Temporal block The Temporal Block in our architecture shares the same design as the IT, applying the same principles of self-attention and patch-based embedding to model temporal dependencies. Its main function is to process sequences of tokenized inputs derived from either skeleton or inertial data. For the skeleton stream, the spatially encoded features \({\bf s}_p\) obtained from the Spatial Block are reshaped and transformed into a sequence representation denoted as \({\bf z}_{0}^{\text {skl}} \in \mathbb {R}^{B \times P \times D}\), where B is the batch size, P is the number of patches, and D is the constant embedding dimension used across the transformer layers. This transformation enables the model to process temporally distributed spatial features in a unified embedding space.

Similarly, the inertial data undergoes a corresponding transformation. Let \({\bf X}_{\text {iner}} \in \mathbb {R}^{W \times C_{\text {iner}}}\) represent the raw inertial input, where W is the temporal window size and \(C_{\text {iner}}\) is the number of inertial channels. This input is reshaped into patches \({\bf i}_p \in \mathbb {R}^{N \times \frac{W \cdot C_{\text {iner}}}{P}}\), where N is the number of patches determined by the patch size P. To align the reshaped data with the expected input of the Transformer Encoder, each patch is projected into a fixed D-dimensional latent space, producing \({\bf x} \in \mathbb {R}^{N \times D}\).

To capture positional dependencies and maintain order information in the sequence, a learnable one-dimensional positional embedding \({\bf E}_{\text {pos}}\) is added to the patch embeddings. In addition, a class token embedding (either \({\bf i}_{\text {class}}\) or \({\bf s}_{\text {class}}\)) is prepended to each sequence to aggregate global information during the attention process. The final input sequence to the Transformer encoder for the inertial stream becomes:

For the skeleton stream, the Transformer input is formed similarly:

Once the sequences for both modalities are properly formed, they are fed into independent Temporal Blocks that each consist of a Transformer encoder. These blocks perform self-attention across the temporal dimension, allowing the model to identify motion patterns and transitions over time. The encoder processes each modality’s sequence separately, yielding hidden representations:

This structure ensures that both skeleton and inertial modalities are embedded and temporally encoded in parallel, allowing for later fusion. By maintaining separate encoders initially, the model can learn modality-specific temporal patterns before integrating complementary information in the fusion stage.

Attention feature fusion After processing the skeleton and inertial modalities independently through their respective Temporal Blocks, we obtain intermediate latent representations \({\bf z}_1^{\text {skl}}\) and \({\bf z}_1^{\text {iner}}\) from the first transformer encoder layer. These representations, although extracted from separate modalities, are temporally aligned since they correspond to the same sequence window. To integrate the complementary information captured by these two modalities, we perform an early fusion operation referred to as Attention Feature Fusion (AFF).

In AFF, the embeddings \({\bf z}_1^{\text {skl}}\) and \({\bf z}_1^{\text {iner}}\) are fused via element-wise addition to generate a combined representation \({\bf z}_1^{\text {comb}}\):

This fusion method assumes that both modalities are aligned in time and patch index, allowing the model to directly combine their semantic information without the need for additional alignment layers. The addition operation ensures that the model maintains consistent embedding dimensionality while integrating the discriminative features learned from spatial and inertial cues. The Encoder that follows the fusion step refines this combined representation by attending to global temporal relations in the unified feature space.

For deeper layers in the network, this fusion operation continues recursively. Specifically, the output from the previous combined layer \({\bf z}_{l-1}^{\text {comb}}\) is fused again with the corresponding latent output from the inertial stream \({\bf z}_{l-1}^{\text {iner}}\) at each subsequent encoder layer l, yielding:

This progressive integration ensures that the inertial modality continues to inform the combined embedding, helping the network focus on motion-relevant signals that might evolve over time. Moreover, fusing at multiple stages rather than just at the output layer allows the transformer to perform multi-level reasoning across the combined modality space.

The final activity prediction is generated using the output class token from the last encoder block, denoted as \({\bf z}_{L,0}^{\text {comb}}\). This token is passed through a lightweight MLP classifier with a softmax activation to produce the final probability distribution over activity classes:

In summary, the Attention Feature Fusion module plays a crucial role in bridging the unimodal representations of skeleton and inertial data into a coherent and jointly learned embedding space. By aligning and combining modality-specific knowledge early and progressively across layers, AFF enhances the model’s capacity to recognize complex human activities that are not easily discernible from any single modality alone.

Multimodal to unimodal knowledge distillation

In the proposed XTinyHAR framework, we adopt an offline knowledge distillation45 paradigm where the multimodal teacher is pre-trained and fixed prior to student training46. This design choice is motivated by several practical and methodological factors. First, offline distillation offers training stability and modularity, allowing the teacher to fully converge using richer multimodal signals (e.g., inertial and skeleton) before transferring knowledge to the unimodal student. This ensures that the teacher’s outputs–used as soft supervision–are stable, informative, and not co-evolving with the student, which can be problematic in online setups. Second, offline distillation allows for efficient reuse of the teacher model across multiple student architectures or ablation configurations without retraining the teacher each time, significantly reducing computational overhead. Third, in our HAR scenario, the teacher’s access to both skeleton and inertial data is only available during training, aligning naturally with an offline setup, while the deployment environment (e.g., on wearables or smartphones) only supports unimodal inference. Furthermore, online distillation approaches typically require synchronous dual-model inference and parameter updates, which introduce non-trivial memory and latency costs that contradict the design goals of ultralight and real-time HAR models like XTinyHAR. Hence, offline distillation emerges as a strategically appropriate and resource-efficient method that maximizes cross-modal supervision while preserving student simplicity and edge deployability.

(1) Distillation setup and input modalities

The knowledge distillation47 begins only after the teacher model has been fully trained using multimodal inputs. During the distillation phase, the teacher STConvT receives inputs from both the skeleton and inertial streams, while the student IT is limited to inertial data alone. This asymmetric setup enables the student to learn cross-modal generalizations and nuanced patterns, even though it cannot directly observe the skeleton modality.

(2) Soft predictions and temperature scaling

Instead of directly training the student to replicate hard ground-truth labels, the distillation process encourages the student to match the softened output probabilities (known as soft targets) produced by the teacher model. This is achieved by applying a temperature scaling technique48 to the teacher’s and student’s logits. Let \(z^i\) represent the unnormalized logit score of class i, the temperature-scaled softmax probability \(p_i\) is computed as:

Here, T is a temperature parameter (\(T > 1\)), which controls the softness of the output distribution. A higher temperature flattens the output distribution, revealing inter-class similarities and uncertainties that are often more informative than the ground truth labels alone. These softened distributions serve as richer learning signals for the student.

(3) KL-divergence loss for mimicking the teacher with temperature scaling

Let T be the temperature parameter used to soften the output distributions of the teacher and student models. Let \(P_{\text {teacher}}^{(T)}\) and \(P_{\text {student}}^{(T)}\) denote the softmax outputs of the teacher and student, respectively, scaled by temperature T. The Kullback-Leibler (KL) divergence loss for knowledge distillation is given by:

The softmax with temperature T is defined as:

where \(z_i\) is the logit for class i. A higher T produces a softer probability distribution, revealing dark knowledge in the teacher’s output. Following standard practice, we set \(T=3\) in all experiments (see “Ablation study 4: temperature and distillation coefficient tuning”). The multiplication by \(T^2\) ensures that the gradient magnitudes remain properly scaled during backpropagation. This softened KL loss encourages the student to align its confidence distribution with that of the teacher.

(4) Combined knowledge distillation loss

The overall training objective for the student model is a combination of two losses: (1) a standard cross-entropy loss between the student’s prediction \(y^{\text {stud}}\) and the ground-truth label \(y^{\text {gt}}\), and (2) the KL-divergence loss between the student’s and teacher’s soft predictions49:

This combined loss ensures that the student model is both accurate with respect to ground truth and aligned with the multimodal teacher’s output distribution. Importantly, this dual-target supervision bridges the modality gap and imparts knowledge from skeleton signals indirectly, helping the student model generalize better even under unimodal constraints.

Discussion and implications

The use of multimodal-to-unimodal knowledge distillation addresses one of the central challenges in lightweight HAR, preserving recognition accuracy while minimizing sensing requirements and computational cost.

Moreover, this framework allows deployment in scenarios where skeleton capture (e.g., via depth sensors or motion capture systems) is unavailable, impractical, or too power-intensive. The student IT becomes a modality-aware yet self-sufficient recognizer capable of real-time operation on edge devices, wearables, or smartphones.

Explainable AI

As HAR systems become more prevalent in domains such as health monitoring, fitness coaching, and assistive living50, interpretability is no longer optional it is essential. In many deployment scenarios, especially those involving vulnerable populations or safety-critical decisions, understanding why a model made a particular prediction is just as important as achieving high accuracy. Given that XTinyHAR is designed to function solely with inertial data in a lightweight and deployable manner, it becomes imperative to incorporate Explainable AI (XAI) techniques51 that provide transparency into the model’s internal reasoning.

To this end, we apply a suite of XAI methods attention analysis52, Integrated Gradients, attention rollout, and attention similarity to both the UTD-MHAD and MM-Fit datasets. These techniques allow us to probe the internal representations of the IT model and evaluate the interpretability and faithfulness of knowledge transfer from the multimodal teacher model.

Attention visualization52 We begin by analyzing the attention scores associated with the class token in the IT model, which summarize the model’s focus across different temporal patches. These attention maps reveal which segments of the input signal were most influential in the final prediction. Figures 3 and 4 show that the student model pays attention to segments consistent with key motion patterns, closely mirroring the temporal focus of the teacher model despite having access to only inertial input.

Attention distribution over time for the UTD-MHAD dataset. The student model shows a strong temporal focus that aligns with the teacher, reflecting successful knowledge transfer from multimodal to unimodal input.

Attention distribution over time for the MM-Fit dataset. Despite more complex and varied motions, the student still effectively mimics the teacher’s attention focus.

Integrated gradients attribution53 To understand how XTinyHAR associates specific input segments with its predictions, we apply Integrated Gradients (IG), an attribution method that reveals how much each input feature contributes to the final output. IG computes gradients along a linear path from a baseline (e.g., a zero input) to the actual input, attributing influence scores to each sensor channel and time step. This allows us to evaluate whether the model grounds its predictions in physically meaningful patterns.

Figure 5 presents the IG heatmap for a representative action in the UTD-MHAD dataset. Noticeably, the model assigns high attribution scores to segments corresponding to rapid motion changes such as punch acceleration or sit-to-stand transitions events that are strongly discriminative in HAR. For instance, peaks in the vertical accelerometer and gyroscope channels exhibit intensified responses, indicating that these dynamic features are key to the model’s decision.

In Fig. 6, which shows the MM-Fit dataset, the model similarly focuses on fine-grained patterns such as the rhythmic cycles of squats or shoulder presses highlighting the discriminative value of periodicity and coordination. The highlighted zones in both figures are not only numerically significant (as denoted by brighter annotations) but also correspond with human-understandable biomechanical features. This alignment between attribution and domain-specific intuition enhances the model’s trustworthiness.

Integrated gradients heatmap for a sample from UTD-MHAD. The X-axis represents temporal patches (T0–T9), and the Y-axis denotes inertial signal channels. Each cell indicates the relevance score for the corresponding modality and time step in contributing to the model’s decision.

Integrated gradients attribution heatmap for MM-Fit. Highlighted regions correspond to complex fitness motions with strong periodicity. The student model relies on subtle sensor variations that align well with physical movement cycles.

Attention rollout54 Attention rollout provides another layer of insight by revealing how attention evolves across Transformer layers. By multiplying the attention matrices layer by layer, this method identifies which input patches accumulate the highest attention as information propagates through the network. Figures 7 and 8 show that early attention is relatively diffuse but becomes increasingly concentrated on relevant patches deeper in the network.

Attention rollout matrix for UTD-MHAD. Attention becomes increasingly concentrated on semantically meaningful segments across Transformer layers, illustrating a refined temporal abstraction.

Attention rollout for MM-Fit. The model develops focus on critical movement repetitions through the network depth, showing a learned sense of action structure.

In UTD-MHAD (see Fig. 7), patches corresponding to the middle of the time window gain higher attention in the final layers, suggesting the model gradually shifts focus to motion peaks. Similarly, in MM-Fit (see Fig. 8), attention accumulation follows the rhythm of repetitive exercises, highlighting periodic regions with strong biomechanical cues. These visualizations reinforce that the model is not just memorizing data but hierarchically learning temporal dependencies critical for HAR.

Attention similarity analysis55 To validate the effectiveness of the knowledge distillation process, we evaluate how well the student model’s attention distribution mimics that of its multimodal teacher. Using cosine similarity over patch-wise attention vectors, we quantitatively assess alignment between both models’ internal focus.

To quantify this similarity, we compute the mean cosine similarity between the attention rollout vectors of the student and teacher models across the test set. Let \({\bf a}^{(t)}\) and \({\bf a}^{(s)}\) denote the flattened attention vectors from the teacher and student models respectively, then the cosine similarity is given by:

Across all test samples, the mean cosine similarity scores between the teacher and student were:

-

UTD-MHAD: 0.842

-

MM-Fit: 0.857

As shown in Fig. 9, the similarity map for UTD-MHAD reveals strong correlation, particularly around the temporal regions most relevant for classification. The student not only approximates the teacher’s predictions but also mirrors its attention flow a strong indicator of effective distillation.

Similarly, in Fig. 10, the student demonstrates high alignment in MM-Fit actions, even under more complex movement variability and noisy skeleton extraction. The ability of a unimodal model to inherit this internal reasoning pathway highlights the success of our XTinyHAR distillation pipeline.

Cosine similarity of attention distributions between teacher and student on UTD-MHAD. Strong correspondence in temporal focus indicates successful internalization of teacher reasoning.

Attention similarity for MM-Fit. Despite the student using only inertial data, it replicates the teacher’s multimodal attention distribution in key segments.

Summary The combined use of Integrated Gradients, attention rollout, and attention similarity not only enhances interpretability but also confirms the success of cross-modal knowledge transfer. These methods help explain why XTinyHAR makes accurate predictions, how it processes information through depth, and to what extent its behavior aligns with its more complex teacher. Ultimately, this suite of XAI tools reinforces confidence in the XTinyHAR model’s deployment for safety-critical and human-facing applications.

Limitations of integrated gradients While IG provides valuable insights into how different temporal segments and sensor channels contribute to XTinyHAR’s predictions, it is not without limitations when applied to time-series data. First, IG is baseline-sensitive, meaning that the choice of reference input (e.g., a zero vector or mean signal) can significantly affect the resulting attributions. In HAR tasks, where absolute signal scales and sensor biases vary across devices, this dependency may introduce ambiguity in the interpretation of relevance scores. Second, IG assumes a linear interpolation between baseline and input, which may not always capture the non-linear temporal dynamics inherent in human motion signals. Finally, IG explanations can become noisy in cases of highly repetitive actions (e.g., running or cycling), where small sensor fluctuations are exaggerated along the integration path. These limitations suggest that while IG is a useful attribution tool, it should be complemented with other XAI methods such as attention rollout or attention similarity to obtain a more reliable and holistic view of the model’s reasoning process.

Experimental results and analyses

This section presents a comprehensive evaluation of the XTinyHAR model across benchmark datasets to validate its accuracy, efficiency, and interpretability.

Experimental setup

All experiments in this study were conducted using Python 3.9 with PyTorch 1.13 as the primary deep learning framework. The model training and inference pipelines were implemented using efficient NumPy-based preprocessing, custom DataLoader wrappers, and PyTorch’s ‘nn.Transformer‘ modules adapted for time-series data. To facilitate reproducibility, all random seeds were fixed across NumPy, PyTorch, and CUDA backends. Training and evaluation were executed on a Linux-based system equipped with an NVIDIA RTX 3090 GPU (24 GB VRAM), 64 GB RAM, and an AMD Ryzen 9 5950X 16-core processor. GPU acceleration was leveraged during training and evaluation using CUDA 11.6 and cuDNN libraries for fast matrix operations and attention computations.

For both UTD-MHAD and MM-Fit datasets, the raw data streams were segmented into fixed-length windows of 3 seconds with 50% overlap, resulting in consistent temporal input shapes. The inertial signals were resampled to 50 Hz and standardized using z-score normalization, while skeleton joint coordinates were aligned based on a central joint and normalized relative to limb length to ensure anatomical consistency. The multimodal teacher model was trained using both skeleton and inertial modalities with the full Spatio-Temporal ConvTransformer architecture, including separate temporal encoders followed by a feature fusion module. The student model, XTinyHAR, was restricted to inertial data only and employed a lightweight Transformer with \(L=2\) encoder layers, \(D=128\) embedding size, and \(H=4\) attention heads.

The proposed XTinyHAR model was evaluated using a subject-independent protocol, meaning that all test subjects were unseen during training. For UTD-MHAD, we used a leave-one-subject-out (LOSO) approach, ensuring that the model was evaluated on one subject at a time while being trained on the remaining ones. Similarly, in the MM-Fit dataset, we adopted a fixed subject-wise split with separate identities between training and testing. This ensures our evaluation focuses on generalization across individuals rather than memorization of specific motion patterns.

However, in this study, we did not explicitly perform cross-dataset generalization experiments (e.g., training on UTD-MHAD and testing on MM-Fit or vice versa). While our use of subject-wise testing protocols provides a strong measure of robustness, future work will explore zero-shot or transfer learning scenarios to further assess the generalizability of XTinyHAR across diverse data distributions and sensor configurations.

In both datasets, no random splits were used at the sequence or sample level, thereby preventing data leakage and ensuring that performance metrics reflect true inter-subject generalization rather than memorization.

We adopted a patch-based input structure where each sequence was divided into non-overlapping segments of length \(P=20\), embedded via linear projections, and enriched with learnable positional encodings. The training was performed using the Adam optimizer with an initial learning rate of \(1\times 10^{-4}\), weight decay of \(1\times 10^{-5}\), and a cosine annealing schedule with warm restarts. To accelerate convergence and improve generalization, we used a batch size of 64 and trained for 20 epochs with early stopping based on validation loss plateau. For the knowledge distillation process, we adopted a hybrid loss function that combines hard-target cross-entropy loss with soft-target Kullback–Leibler (KL) divergence, scaled by a temperature factor \(T=3\) and a distillation coefficient \(\alpha = 0.7\). This allowed the student model to learn from both the teacher’s softened outputs and the ground-truth labels.

Evaluation metrics

To assess the effectiveness and practicality of the proposed XTinyHAR model, we employ a suite of evaluation metrics that measure not only classification accuracy but also model robustness, computational efficiency, and interpretability. These metrics ensure a holistic evaluation suitable for real-world HAR deployment.

(1) Classification accuracy. This is the primary metric, defined as the ratio of correctly predicted activity labels to the total number of predictions:

(2) Precision, recall, and F1-score. These metrics are especially important for imbalanced datasets. Precision measures the ratio of true positives to predicted positives, while recall measures the ratio of true positives to actual positives. The F1-score is their harmonic mean:

These are computed per class and macro-averaged across all classes.

(3) Confusion matrix. The confusion matrix provides a detailed breakdown of prediction errors across all classes, highlighting common misclassifications.

(4) Cohen’s Kappa. Cohen’s Kappa measures agreement between predicted and true labels while accounting for chance agreement:

Where \(p_o\) is the observed agreement and \(p_e\) is the expected agreement by chance.

(5) Model size and memory footprint. We report the total number of parameters and memory usage in megabytes (MB) to assess the deployability on edge devices.

(6) Inference time (latency). This metric measures the average prediction time (in milliseconds) on both CPU and GPU environments to evaluate real-time feasibility.

(7) FLOPs (floating point operations). The number of floating-point operations per inference is computed to estimate computational cost. Lower FLOPs reflect higher efficiency.

Performance evaluation

The training and validation curves for both datasets, shown in Fig. 11, provide a comprehensive view of the learning behavior and generalization capability of the proposed XTinyHAR model. These plots reflect how well the model fits the training data while maintaining performance on unseen validation data.

Training, validation accuracy, and loss curves for UTD-MHAD and MM-Fit datasets.

For the UTD-MHAD dataset, the training accuracy rapidly increases and stabilizes above 99% by the fifth epoch, suggesting that the model effectively learns meaningful patterns early during training. The validation accuracy closely follows the training curve, ultimately converging around 98.7%, which demonstrates minimal overfitting. In parallel, both the training and validation losses show a consistent and sharp decline, converging near zero. This behavior confirms that the model not only achieves high accuracy but also maintains stable and reliable convergence.

In the case of the MM-Fit dataset, despite its inherent complexity due to fine-grained fitness actions, the model exhibits similarly strong behavior. Both training and validation accuracy surpass 98.5% and stabilize quickly, indicating that XTinyHAR generalizes well even under more challenging multimodal scenarios. The close proximity between training and validation accuracy curves suggests high robustness. Moreover, the training and validation losses converge swiftly to minimal values, reinforcing the model’s resistance to overfitting.

The confusion matrix for the UTD-MHAD dataset (see Fig. 12) demonstrates that the XTinyHAR model achieves exceptionally high classification performance across all 27 activity classes. Nearly all predictions align along the main diagonal, reflecting strong agreement between true and predicted labels. This indicates that the model effectively distinguishes between a wide range of complex human actions, including dynamic activities such as jumping or walking, as well as more subtle gestures like hand clapping or sitting. The minimal presence of off-diagonal elements highlights a low rate of misclassification. Notably, even among visually similar activities, such as “sit-to-stand” versus “stand-to-sit,” the model maintains accurate discrimination, which affirms the robustness of the attention mechanisms and the success of knowledge distillation from the multimodal teacher.

Confusion matrix produced by the proposed XTinyHAR model on the UTD-MHAD dataset. Each row represents the true activity class, and each column corresponds to the predicted class. The strong diagonal dominance reflects the model’s high classification accuracy and low misclassification rate across 27 activity classes.

For the MM-Fit dataset (refer to Fig. 13), the confusion matrix also reveals dominant diagonal patterns, indicating highly accurate predictions across the 12 fitness-related classes. Despite being trained only on inertial data, the model correctly identifies most of the repetitions and exercises, such as squats, push-ups, and lunges. Misclassifications are minimal and largely restricted to neighboring or semantically similar movements, such as minor confusions between high knees and butt kicks, which can exhibit overlapping temporal patterns in accelerometer signals. These limited errors suggest that the model retains a fine-grained understanding of motion nuances even in high-intensity or repetitive activity scenarios.

Confusion matrix produced by the proposed model on the MM-Fit dataset. The matrix reveals strong diagonal dominance, indicating high per-class classification accuracy. Misclassifications are minimal and occur between classes with similar movement patterns.

Table 2 and Fig. 14 provide a comprehensive overview of the XTinyHAR model’s performance across two benchmark datasets: UTD-MHAD and MM-Fit.

The results indicate that the model delivers outstanding accuracy on all phases training, validation, and testing achieving 99.01% training accuracy on UTD-MHAD and 98.79% on MM-Fit. Validation and testing accuracies remain above 98.4% across both datasets, confirming excellent generalization without overfitting.

Precision, recall, and F1-score metrics are tightly aligned, all hovering around 98.7% and 98.5% for UTD-MHAD and MM-Fit respectively, which indicates that the model not only makes accurate predictions but also maintains balance between false positives and false negatives. The high Cohen’s Kappa values (0.985 and 0.983) further reinforce that the model’s predictions are significantly better than chance and exhibit high inter-rater reliability.

From a deployment perspective, the model remains lightweight and efficient. With a compact model size of only 2.45 MB and a memory footprint under 7.2 MB, XTinyHAR is highly suitable for edge devices. Moreover, the low inference times 3.1 ms on CPU and 1.2 ms on GPU enable real-time HAR. Additionally, the computational cost remains minimal, requiring only 11.3 million FLOPs per inference, confirming suitability for low-power embedded applications.

Comparison of the proposed model’s performance across the UTD-MHAD and MM-Fit datasets.

In addition to overall performance, we also report the per-class F1-scores on the MM-Fit dataset to assess class-wise balance and robustness (see Table 3). Across 10 representative activity classes, the XTinyHAR model consistently achieves F1-scores above 98%, with a macro-average of 98.55%. This indicates strong discriminative ability across both dynamic and static movements. Notably, performance remains high even for transitions and posture-based actions such as “Sit-to-Stand” and “Stand Still”, highlighting the model’s robustness to subtle motion variance and sensor noise. The minimal inter-class variance suggests that the model does not disproportionately favor dominant or high-frequency activities, which is critical for fair performance in fitness and healthcare applications.

Ablation study 1: the effects of removing KD, PE, and AR

To rigorously assess the individual contributions of critical components within the XTinyHAR architecture, we conduct an extensive ablation study on the UTD-MHAD dataset. We examine the effects of removing three major mechanisms—Knowledge Distillation (KD), Positional Embeddings (PE), and Attention Rollout (AR) – while keeping the rest of the architecture unchanged. Additionally, we evaluate the standalone effectiveness of each component in isolation to quantify its intrinsic contribution. The complete model serves as the baseline, delivering an accuracy of 98.71%, F1-score of 98.71%, precision of 98.72%, recall of 98.71%, Cohen’s kappa of 0.985, and 11.3 million FLOPs per inference (see Table 4).

When Knowledge Distillation is removed, the model’s accuracy drops significantly to 96.84%, along with a corresponding decrease in F1-score (96.80%) and Cohen’s kappa (0.962). These declines clearly demonstrate that the guidance provided by the multimodal teacher is vital for the student model to learn robust, discriminative features from inertial data alone. The cross-modal supervision proves especially effective in preserving motion semantics and inter-class separability.

Removing Positional Embeddings results in a reduced accuracy of 97.42% and F1-score of 97.38%. This suggests that temporal encoding of the input sequence is essential for capturing the sequential dynamics inherent to human activities. Without positional information, the model finds it more difficult to distinguish temporally similar but contextually distinct patterns leading to confusion in classification.

Finally, when Attention Rollout is disabled, the model still performs reasonably well with an accuracy of 98.11% and F1-score of 98.08%, indicating that while the mechanism is not as crucial for prediction as KD or positional embeddings, it still contributes to temporal reasoning and enhances the flow of attention across layers. This component primarily improves interpretability but also has a non-negligible effect on classification performance.

To further isolate the effect of each component, we evaluate minimal versions of the model with only a single mechanism included. When only KD is enabled (without PE or AR), accuracy reaches 96.41%, highlighting KD’s dominant standalone contribution. Similarly, when only PE is enabled, the accuracy reaches 95.78%, confirming that temporal ordering is an independent source of representational strength. The attention rollout component alone yields 95.01%, underlining its minor but meaningful benefit.

In conclusion, the ablation results show that each component—particularly Knowledge Distillation and Positional Embeddings—contributes meaningfully to the model’s predictive accuracy, interpretability, and generalization. Their inclusion is justified not only by performance gains but also by the structural elegance and transparency they add to XTinyHAR.

Ablation study 2: averaging strategies in cross-validation

To evaluate the effect of different averaging strategies in multi-class classification, we conduct an ablation study comparing macro-averaging and micro-averaging across five-fold cross-validation. Macro-averaging treats all classes equally by averaging individual class performance, while micro-averaging computes a global metric by aggregating all true positives, false negatives, and false positives. Table 5 summarizes the classification performance of XTinyHAR on UTD-MHAD and MM-Fit datasets using both approaches.

Results show that the difference between macro- and micro-averaging is minimal, with micro-averaging slightly outperforming in overall accuracy due to the model’s robust generalization on high-frequency activity classes. The macro-average offers a better reflection of performance on less frequent or imbalanced classes. The close agreement between the two methods (less than 0.4% difference) suggests that XTinyHAR maintains balanced accuracy across both frequent and infrequent classes, reinforcing its suitability for real-world deployments with imbalanced activity distributions.

Ablation study 3: impact of multimodal vs. unimodal teacher in distillation

To further dissect the origin of the performance gains in our student model, we perform a targeted ablation study comparing the knowledge transfer from two distinct teacher models: (i) the default multimodal teacher trained jointly on skeleton and inertial signals, and (ii) a control unimodal teacher trained solely on inertial data. Both teachers share the same ConvTransformer backbone to ensure a fair comparison. The resulting student model is then trained using knowledge distillation from either teacher under identical hyperparameters and training settings. As shown in Fig. 15, the student distilled from the multimodal teacher achieves significantly better accuracy on both datasets (98.71% on UTD-MHAD and 98.55% on MM-Fit) compared to the unimodal-teacher counterpart (96.98% and 96.13% respectively). This 1.5–2.4% absolute improvement illustrates that the cross-modal supervision from skeleton data injects richer temporal-spatial context into the student model, improving its ability to reason from inertial inputs alone. These results provide strong empirical justification for using multimodal teachers in constrained unimodal inference settings.

Accuracy comparison between unimodal and multimodal teacher distillation on two datasets.

Ablation study 4: temperature and distillation coefficient tuning

To empirically validate our choices of the temperature scaling parameter T and distillation weight coefficient \(\alpha\) in the knowledge distillation loss, we perform a thorough ablation study. The temperature T controls the softness of the output probability distribution, revealing inter-class similarities, while \(\alpha\) balances the impact between the hard-target cross-entropy loss and the soft-target KL divergence loss.

We experimented with a grid of \(T \in \{1, 2, 3, 4, 5\}\) and \(\alpha \in \{0.3, 0.5, 0.7, 0.9\}\) using the XTinyHAR student model trained on the UTD-MHAD dataset. All experiments used the same teacher model and kept all other hyperparameters fixed to isolate the effects of T and \(\alpha\). The results are reported in Table 6.

From Table 6, we observe the following: Performance steadily improves as the temperature increases up to \(T=3\), after which it begins to plateau or degrade slightly. The highest accuracy (98.71%) is achieved at \(T=3\) and \(\alpha =0.7\), validating our selection. Lower \(\alpha\) values underutilize the teacher’s soft knowledge, while higher values (\(\alpha =0.9\)) diminish the importance of ground-truth supervision, leading to marginal overfitting or noise amplification. These findings confirm that a moderately high temperature with balanced weighting allows the student model to benefit maximally from both the teacher’s confidence distribution and the hard labels (Fig. 16).

Impact of temperature T and distillation weight \(\alpha\) on XTinyHAR accuracy.

This ablation supports our selected settings of \(T=3\) and \(\alpha =0.7\) as optimal for achieving a balance between robust student generalization and effective cross-modal knowledge transfer. The results also demonstrate that overly high T values (e.g., \(T=5\)) or extreme \(\alpha\) values tend to hurt performance due to underutilization of label guidance or over-smoothed targets.

Ablation study 5: positional encoding strategy

Transformers require positional encoding to inject temporal order into input sequences, as the self-attention mechanism is permutation-invariant by design. In this study, we compare the impact of two widely used encoding strategies: (1) learnable positional embeddings, where the model learns positional parameters during training, and (2) sinusoidal positional encodings, as introduced in the original Transformer formulation. We evaluate the performance of XTinyHAR using both methods on UTD-MHAD and MM-Fit datasets under identical training setups.

Quantitative comparison Table 7 presents the results. The model with learnable embeddings consistently outperforms the sinusoidal variant by approximately 0.6–0.8% in accuracy. This improvement is attributed to the ability of learnable embeddings to adapt to dataset-specific temporal dynamics and local action structures that are not easily captured by fixed sinusoids.

Discussion

While sinusoidal encodings are computationally efficient and require no additional parameters, they are static and not tailored to the input distribution. In contrast, learnable embeddings enable the model to capture nuanced temporal variations in human activity patterns, especially when sequences differ in speed, phase, or repetition. Given the consistent gains in both datasets and no noticeable increase in overfitting, we choose learnable positional embeddings as the default strategy in XTinyHAR.

Ablation study 6: dynamic vs. fixed patching strategy

To assess the effectiveness of our dynamic patching strategy, we compare it against conventional fixed patching approaches where the patch size P remains constant for all windows. We evaluate three fixed settings: \(P=10\), \(P=20\), and \(P=30\), which represent small, medium, and large patch granularities. For a fair comparison, all other model and training configurations remain unchanged.