Abstract

Variational quantum algorithms (VQAs) promise near-term quantum advantage, yet parametrized quantum states commonly built from the digital gate-based approach often suffer from scalability issues such as barren plateaus, where the loss landscape becomes flat. We study an analog VQA ansätze composed of M quenches of a disordered Ising chain, whose dynamics is native to several quantum simulation platforms. By tuning the disorder strength we place each quench in either a thermalized phase or a many-body-localized (MBL) phase and analyse (i) the ansätze’s expressivity and (ii) the scaling of loss variance. Numerics shows that both phases reach maximal expressivity at large M, but barren plateaus emerge at far smaller M in the thermalized phase than in the MBL phase. Here we propose an MBL initialization strategy by exploiting this gap: initialize the ansätze in the MBL regime at intermediate quench M, enabling initial trainability while retaining sufficient expressivity for subsequent optimization. The results link quantum phases of matter and VQA trainability, and provide practical guidelines for scaling analog-hardware VQAs.

Similar content being viewed by others

Introduction

Variational quantum algorithms (VQAs) offer a promising near-term approach to quantum advantage and have already addressed modest-scale optimization tasks in quantum chemistry1,2,3, combinatorial optimization4,5,6, high-energy physics7, and quantum machine learning8,9,10,11. This hybrid approach combines a quantum processor to prepare a parametrized quantum state with a classical optimizer that adjusts those parameters to minimize a problem-specific loss12,13. Yet scaling VQAs to large system sizes where quantum advantage could arise is far from trivial. Barriers to scalability include proliferation of spurious local minima14,15, efficient classical surrogatability16,17,18, and most prominently the barren plateau (BP) phenomenon: the loss landscape becomes exponentially flat (together with vanishing loss gradients) in the system size, reflecting the curse of dimensionality in the space where the parametrized states operate19,20,21. In a BP regime one needs exponentially many measurement shots to reliably navigate through the flat landscape regions, rendering optimizing VQAs impractical at scale22.

While these parametrized states are typically built through a sequence of quantum gates (digital, gate-based VQAs) and can achieve universality with deep circuits, the deep circuits’ expressivity (i.e., the ability to explore vast regions of Hilbert space) can be excessive for a given task and has been identified as a root cause of BPs19,20,23,24. A complementary approach is to utilize native many-body dynamics of analog quantum simulators as a quantum processor, letting the hardware evolve under its natural, yet controllable, quantum evolution. Indeed, state-of-the-art experimental platforms such as Rydberg arrays, trapped ions, and superconducting circuits can realize target Hamiltonian with high fidelity and provide tunable controls that help probing quantum phases of matter25,26,27,28,29,30,31. A striking demonstration is the observation of many-body localization (MBL) phase in a 125-site two-dimensional lattice32. Whereas thermalized systems equilibrate33,34,35,36, MBL states retain local memory due to strong disorder and interactions and are expected to survive in the thermodynamic limit37,38,39,40,41,42,43. MBL signatures have since been reported across multiple hardware platforms44,45,46,47,48,49, showcasing the ability of analog devices to simulate complex, classically intractable dynamics while remaining experimentally controllable.

Motivated by these experimental advances, a growing body of work seeks to merge analog dynamics with the variational framework. Pulse-level and quench-based ansätzes can be optimized in situ, turning the tunable simulator itself into a variational quantum processor and bypassing gate-decomposition overheads50,51,52,53,54,55,56,57,58,59,60,61. Such schemes align with broader digital-analog approaches62,63 and exploit hardware-native interactions that naturally realize non-trivial quantum phases50,53,54,58. Initial studies show that the underlying phase can significantly influence both expressivity and trainability51, although how these effects scale with system size remains largely unexplored. In addition, in the gate-based setting, MBL-inspired initializations have also been proposed to mitigate BPs64,65,66. These observations raise two key questions: Which phases of matter are favorable for analog VQAs, and how can they be leveraged to mitigate BPs?

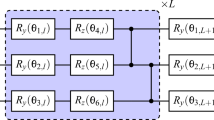

In this work, we tackle these questions using an analog ansätze built from M global quenches of a disordered nearest-neighbor Ising chain (Fig. 1). By tuning the on-site disorder each quench can be placed in either a thermalized or an MBL phase, allowing us to probe how phase structure shapes two key VQA properties: expressivity and the scaling of loss variances, and propose practical BP-mitigating initialization strategy.

a Schematic representation of a periodic Ising spin chain with nearest-neighbor interactions J, disordered on-site energy \({h}_{i}^{(m)}\) at the m-th quench, and a transverse field B. Each on-site disorder energy is uniformly drawn from the interval [−W/2, W/2], with W controlling the disorder strength. b Analog VQA modeled as a series of quench dynamics. Each block represents a unitary evolution under the quench Hamiltonian H(θm) for a time tm, parametrized by the disorder configuration \({{{\boldsymbol{\theta }}}}_{m}=\{{h}_{i}^{(m)}\}\) and measured by local observables. While the evolution times can in general be trainable parameters, we fix them here for our investigation on the connection with phases but they can be made trainable when solving the actual optimization problems (see the “Numerical results” subsection in the Results and Supplementary Note 4). c A schematic phase diagram of the system as a function of disorder strength W (defined for a sufficiently long evolution time of a single quench dynamics) shows a transition between the many-body localized (MBL) phase and the thermalized phase. Bottom panels depict spin density profiles after long-time evolution: in the MBL phase (W < W*), the system retains memory of the initial state, while in the thermalized phase (W > W*), the system relaxes to a thermal state. This setup enables us to study the interplay between disorder-induced quantum phases and the performance and scalability of analog VQAs setup in (b).

How quantum phases shape fundamental properties of analog VQAs

Our results show that both phases become maximally expressive in the deep-quench limit, but BPs appear at far fewer quenches M in the thermalized phase than in the MBL phase. This difference stems from the underlying many-body dynamics; significantly larger portion of the Hilbert space is explored during each quench in the thermalized phase. In line with the connection between expressivity and the vanishing loss gradients shown by Holmes et al.67, we observe that loss variances shrink as the number of quenches makes the ansätze approach a unitary 2-design, irrespective of the quantum phase. This relationship is summarized in Fig. 2. Further investigation into the entanglement entropy growth in each phase well aligns with the trend observed in the flatness of the loss landscape.

A visualization of Hilbert space exploration and loss landscapes for our initialization strategies using two different phases of matter: thermalized and MBL. The results are categorized by the number of quenches (analogous to the circuit depth in gate-based VQA approaches): shallow, intermediate, and deep. Shallow quenches—Both initializations exhibit non-flat loss landscapes. Thermalized dynamics lead to faster state evolution in Hilbert space compared to MBL dynamics, but the model expressivity in both initializations is far from maximal. Intermediate quenches—the thermalized initialization begins to exhibit the barren plateaus and achieves maximal expressivity, while the MBL loss landscape remains non-flat and Hilbert space is not yet completely explored. This intermediate quench regime highlights our proposed initialization strategy: using MBL initialization strategy at intermediate quenches allows the model to attain high expressivity while retaining trainability. Deep quenches—For both initializations, barren plateaus appear, and the Hilbert space is fully explored, indicating maximal expressivity.

MBL initialization strategy

The separation of expressivity scaling suggests a practical initialization strategy. Initialize in the MBL ansätze at an intermediate number of quenches M such that it is deep enough that the corresponding thermalized phase is maximally expressive, yet shallow enough to initially avoid BPs in the MBL phase. This strategy provides significant gradients at the first optimization step while retaining a sufficient expressive power for subsequent optimization. We benchmark the strategy on small proof-of-principle tasks (ground-state VQE and a Max-Cut problem of random-weight complete graphs). This MBL initialization strategy can be viewed as an analog counterpart of near-identity initialization for gate-based circuits68,69.

Our approach aims to bridge digital and analog variational framework and highlight the role of quantum phases of matter in variational algorithms. We expect this approach to be testable on current analog hardware and to inform the design principles of scalable quantum phase-aware analog VQAs.

Results

We study two key fundamental aspects of VQAs in the context of the analog simulators, namely expressivity and the emergence of BP phenomenon and connect them to the phases of matter. The summary of our fundamental results is illustrated in Fig. 2. In addition, we propose the practical BP-free initialization strategy based on MBL phase.

Expressivity

The expressivity of a model considered in this work refers to its ability to uniformly explore the entire Hilbert space. More precisely, we consider the unitary ensemble of the parametrized ansätze corresponding to each phase, defined as

where ΘD is a region of the parameter space such that the Hamiltonian in each quench is in the corresponding phase D (here, D = “Thermalized” or D = “MBL”). This expressivity measures how close the ensemble \({{\mathbb{U}}}_{{{{\boldsymbol{\theta }}}}_{D}}\) is to forming a unitary 2-design over the unitary group—that is, a pseudo-random distribution that matches the Haar random distribution up to the second moment67,70,71.

To formalize this, let us consider the t-order frame potential70

where dμ(U) (and dμ(V)) is a probability measure of U (and of V) over the ensemble \({{\mathbb{U}}}_{{\Theta }_{D}}\). The expressivity can be quantified by the second-order frame potential \({{{\mathcal{F}}}}_{{{\mathbb{U}}}_{{\Theta }_{D}}}^{(2)}\), which reaches its minimum when the ensemble forms a unitary 2-design, in which case \({{{\mathcal{F}}}}_{{{\rm{Haar}}}}^{(2)}=2({2}^{n}-1)!/({2}^{n}+1)!\). Because a 2-design is automatically a 1-design, the same ensemble also saturates the Haar value \({{{\mathcal{F}}}}_{{{\rm{Haar}}}}^{(1)}=1/{2}^{n}\). Note that there also are alternatives for defining expressivity in VQAs, such as through output function space72,73,74,75, or geometric structure of the parametrized states76,77,78.

Quench dynamics drive the ensemble toward a 2-design as the number of quenches M grows, and we therefore expect both phases to attain maximal expressivity at large M. Figure 3 confirms this expectation numerically, with the thermalized phase converging markedly faster than the MBL phase, consistent with its more chaotic dynamics and the larger portion of Hilbert space explored by each thermalized quench.

a The difference between the second-order frame potential of the ansätze ensemble and of the Haar distribution is plotted against the number of quenches M for the system size ranging from n = 5 to n = 9, under the thermalized initialization. The second-order frame potential is estimated by averaging the square of fidelity over N(N − 1)/2 independent pairs with N = 30,000. The saturated values of the second-order frame potential difference are plotted against the system size n for b the thermalized and c MBL initializations, with the error bars indicating the variance of the differences. d Same as (a), but under the MBL initialization.

Barren plateaus

One challenge that prevents the scalability of VQAs is the BP phenomenon, where the loss landscape on average becomes exponentially flat with increasing system size19,20,21,23,24,51,67,71,79,80,81,82,83,84,85,86,87,88,89,90,91,92,93,94,95,96,97,98,99,100,101,102. Upon randomly initializing the training over the BP region, the number of measurement shots required from quantum hardware to reliably navigate through the landscape also scales exponentially with the system size. This renders training VQAs impractical for large numbers of qubits.

More formally, we say the loss landscape is exponentially flat over the region of the loss landscape with parameters sampled according to probability i.e., \({{\boldsymbol{\theta }}} \sim {{\mathcal{P}}}\) if

for some b > 1, where n is the number of qubits.

Intuitively, the root of BPs can be understood from the curse of dimensionality perspective, where parametrized quantum states in an exponentially large Hilbert space are poorly handled21. Various sources that lead to the occurrence of BPs due to these inappropriately designed parametrized states have been identified, including excessive expressivity in the ansätze19,67,83, global measurements84,101, high levels of entanglement79,100, and the presence of noise81,102. In addition, while initially discussed in the context of VQA untrainability, the curse of dimensionality is later found to also plague the scalability of non-variational quantum models such as quantum kernel methods71,103,104,105,106,107 and quantum reservoir processing94,99. Furthermore, an increasing number of approaches have been proposed to tackle BPs20,108 including expressivity-limited architectures109,110, imposing symmetries on circuits111,112,113,114, as well as employing alternative initialization strategies64,65,66,68,69,100,115,116,117. Lastly, caution must be exercised when claiming that an approach can circumvent BPs, particularly due to the complex interplay with shot noise118.

In this work, we mainly focus on studying BPs arising from the expressivity and entanglement of the analog quench dynamics and their connection to the quantum phases in which the ansätze operates. Following similar treatments as Holmes et al.67 which studies the relationship between expressivity and BPs, we have the loss variance bound at the level of loss values expressed as

with the expressivity-dependent correction term

Remark that the bound in Eq. (4) is saturated as the ensemble approaches a unitary 2-design \(({{{\mathcal{F}}}}_{{{\mathbb{U}}}_{{\Theta }_{D}}}^{(2)}\to {{{\mathcal{F}}}}_{{{\rm{Haar}}}}^{(2)})\). Namely, the correction term \({{{\mathcal{B}}}}_{n}\) vanishes and the variance saturates at its Haar value. The proof of Eq. (4) is presented in Supplementary Note 2.

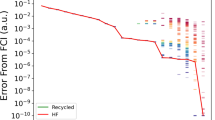

In Fig. 4, we study the variance scaling of the local loss in different phases with respect to the number of qubits and the number of quenches. The observable is chosen to be a product of Pauli-Z operators on the first and second qubits i.e., \({{\mathcal{L}}}({{\boldsymbol{\theta }}})={\langle {Z}_{1}{Z}_{2}\rangle }_{{{\boldsymbol{\theta }}}}\), to ensure that the observed BPs do not originate from global measurements84.

The top panels illustrate the comparison between the variance of the loss function for a thermalized and b MBL initializations and its bounds against the number of quenches M. Specifically, the bounds are functions of the frame potential difference between the ansätze ensemble and the Haar distribution. The solid line represents the variance of the loss \({\langle {Z}_{1}{Z}_{2}\rangle }_{{{\boldsymbol{\theta }}}}\), the dotted line shows the empirical bound for the variance, and the dashed line indicates the theoretical bound for the variance as presented in Eq. (4). These panels display data for 5, 7, and 9 qubits from left to right. The middle panels show the variance of the loss \({\langle {Z}_{1}{Z}_{2}\rangle }_{{{\boldsymbol{\theta }}}}\) for c thermalized and d MBL initialization as the number of quenches increases for systems with 5 to 13 qubits, averaged over 400 realizations. The bottom panels present the saturated variance of \({\langle {Z}_{1}{Z}_{2}\rangle }_{{{\boldsymbol{\theta }}}}\) plotted on a logarithmic scale against the number of qubits for e thermalized and f MBL phase. This provides evidence for the possible emergence of barren plateaus when the ansätze is initialized in both phases.

Crucially, the overall trend in both phases aligns with what we observe from the expressivity scaling in Fig. 4. Firstly, in panels (a) and (b), we verify Eq. (4) by explicitly plotting the upper bounds for different quenches and for some fixed qubits. In addition, we also found an empirical bound of the form

with k = 0.7, which is tighter than the theoretical one with \({{{\mathcal{B}}}}_{n}\left({{\mathbb{U}}}_{{\Theta }_{D}},O\right)\), suggesting that there is room for improvement on the tightness of the expressivity bound. Next, as presented in panels (c) and (d), for a large number of quenches, the loss variances in both phases saturate at the same value for a fixed number of qubits. When plotting the saturated values against the number of qubits (as shown in the panels (e) and (f)), we observe the exponential vanishing of the variance, demonstrating the presence of BPs around the initialization region. Furthermore, the decay rate of the variance in the thermalized phase is observed to be much faster than that in the MBL phase.

The flatness of the landscape can also be physically understood from the perspective of entanglement growth in each phase. In particular, for each thermalized quench, the amount of entanglement generated is much larger than that in the MBL case. In panel (a) of Fig. 5, we plot the growth of entanglement entropy as a function of the number of quenches for different phases. One can see that the convergence behavior of the entanglement entropy, shown in Fig. 5, mirrors that of the local observable variance in Fig. 4.

a Bipartite von Neumann entanglement entropy SE, averaged over 400 realizations, is plotted against the number of quenches M for a bipartition made at the middle of the chain. Results are shown for the thermalized phase (Diamond) and MBL phase (Circle). The horizontal dotted lines indicate the average bipartite entanglement entropy for Haar-random states. The faster convergence of the thermalized initialization compared to the MBL initialization is attributed to the differences in entanglement growth characteristics of each phase. b The estimated numbers of quenches required for the convergence, Msat, of the variance of \({\langle {Z}_{1}{Z}_{2}\rangle }_{{{\boldsymbol{\theta }}}}\) (Square) and the entanglement entropy (Diamond) are plotted against the number of qubits for both the thermalized and the MBL initializations, with the error bars showing the variance of estimation. The consistent trend between entanglement and the onset of barren plateaus in both phases highlights the role of quantum phases in shaping the untrainability of the analog VQA ansätze.

Lastly, as shown in panel (b) of Fig. 5, the number of quenches Msat corresponding to the saturation values of the loss variance, and entanglement entropy share similar scaling behavior with respect to the system size. In particular, the gap between the number of quenches required for saturation in the thermalized and MBL phases is empirically observed to increase linearly with the number of qubits.

Initialization strategy

From the fundamental properties of the analog quench ansätze empirically studied in the previous section, we propose an initialization strategy that provides polynomially large gradients at the initial stage of training while leaving room for large expressivity at a later stage of training. To see how this is possible, we note that one can categorize the initialization regimes based on the number of quenches at which the saturation onset occurs in each phase as follows:

-

Regime I (small number of quenches): Before the saturation in thermalized initialization, both thermalized and MBL phases are free from BPs but lack sufficient parameters to reach maximum expressivity.

-

Regime II (intermediate number of quenches): After regime I, the thermalized phase becomes maximally expressive but suffer from BPs. In contrast, the MBL phase exhibits a large initial loss difference and becomes increasingly expressive compared to the Regime I.

-

Regime III (large number of quenches): Once the MBL initialization loss value saturates, both phases are maximally expressive but enter BP regime.

This categorization is summarized in Fig. 2 and numerically illustrated in the top panel of Fig. 6.

a The variances of the local loss function for the thermalized (Diamond) and MBL (Circle) initializations are plotted against the number of quenches for a 10-qubit system. To categorize the initialization regimes, we divide them into three based on the differences in variance between the thermalized and MBL initializations: Regime I (Shallow) (Green) captures the range of quenches where both initializations are trainable and do not exhibit barren plateaus, Regime II (Intermediate) (Blue) defines the range of quenches where the MBL initialization remains trainable, while the thermalized counterpart already has suffered from the barren plateau, and Regime III (Deep) (Red) encapsulates the range of quenches where both phases exhibit barren plateaus, making the VQA untrainable. b The estimated difference in the number of quences between the MBL and the thermalized initialization when barren plateau onset is plotted against the number of qubits, with the error bars showing the variance of the estimations. This difference is the width of Regime II. As the number of qubits increases, the difference grows linearly with the number of qubits. This suggests the advantage of the MBL initialization over the thermalized initialization.

In practice, employing an ansätze for a standard VQAs typically requires predetermining the number of quenches (i.e., treating M as a hyperparameter). Hence, our findings suggest a novel initialization strategy where we initialize the ansätze in the MBL phase when the number of quenches falls within Regime II. With this strategy, the benefits are twofold. First, the initial training is guaranteed not to suffer from BPs. Second, during later training iterations, the parameters can be tuned such that the ansätze no longer corresponds to the MBL phase. In this scenario, the number of quenches in Regime II is sufficient in the sense that maximal expressivity can, in principle, be achieved by the ansätze as indicated by the saturation in the thermalized phase. Additionally, we note that by parameterizing dynamic times and interaction strengths for individual quenches, the ansätze becomes universal, being able to express any unitary62,119,120. In the next subsection and Supplementary Note 4, we benchmark the proposed MBL initialization scheme on small toy model examples (up to 10 qubits), including a ground state finding task and a Max-Cut problem.

One could then further ask whether this initialization strategy is scalable with the system size. As illustrated in Fig. 6, the difference between the least number of quenches required for the MBL and thermalized phases to achieve saturated variance is empirically found to scale linearly with the number of qubits. Hence, this suggests that Regime II should persist for large system sizes.

While our strategy offers a promising approach to mitigate BPs, it is essential to stress that it does not entirely avoid them. More precisely, the ansätze with the number of quenches in Regime II is sufficiently expressive such that there are BP regions in the loss landscape. While the training is initialized in a region with substantial gradients, there is no guarantee that the training trajectory will not later wander into flat regions. Additionally, this initialization strategy can be seen as an analog version of the close-to-identity initialization68,69, which is generally regarded as problem-agnostic. Hence, there is no guarantee that the solution obtained using this strategy will correspond to a good local minimum.

Numerical results

To demonstrate the capability of the proposed MBL initialization scheme, we apply it to solve two standard optimization problems: (i) Finding the ground state energy of some target Hamiltonians, and (ii) Solving a combinatorial optimization. We compare the performance of the MBL initialization strategy against the thermalization-based strategy. The depths for each are chosen such that both ansätze exhibit comparable variance in the loss landscape: a shallow depth for the thermalized phase and an intermediate depth for the MBL phase.

Specifically, we employ a shallow depth of M = 2 quench for the thermalized initialization, and an intermediate depth of M = 22 (for n = 6) or M = 24 (for n = 8) for the MBL initialization. Although the initialization phases are fixed, during the optimization process we train all relevant parameters of the ansätze, including the longitudinal field, the transverse field, and the evolution time for each quench.

Finding the ground state of some Hamiltonian

We test our approach by considering two target Hamiltonians in an 8-qubit system:

-

Long-range disordered Ising model: A disordered Ising model with power-law decaying long-range interactions is of the form

$${H}_{{{\rm{Ising}}}}=J{\sum }_{i < j}\frac{{Z}_{i}{Z}_{j}}{| i-j{| }^{\alpha }}+{\sum }_{i=1}^{n}{h}_{i}{X}_{i}\,\,,$$(7)where we choose α = 1 and the local fields hi are sampled independently from a uniform distribution over [−0.3J, 0.3J].

-

Heisenberg model: The Heisenberg model with periodic boundary condition is defined as

$${H}_{{{\rm{XYZ}}}}=J{\sum }_{i=1}^{n}({X}_{i}{X}_{i+1}+{Y}_{i}{Y}_{i+1}+{Z}_{i}{Z}_{i+1})\,\,.$$(8)

The performance is evaluated using the relative error, defined as the normalized difference between the energy obtained at a particular iteration E and the true ground state energy \({E}_{\min }\):

Figure 7 shows the results, averaged over 5 initializations after 300 training iterations. For the case of HIsing, both initialization strategies achieve comparable performance with an averaged relative error of 1.44 × 10−3% for the thermalized initialization and 1.82 × 10−3% for the MBL initialization. We argue that since the target Hamiltonian HIsing structurally resembles the ansätze Hamiltonian in Eq. (16), the ansätze possesses a built-in inductive bias towards the solution. This facilitates the faster convergence of the thermalized initialization strategy despite its limited expressivity at shallow quenches.

The average relative error over 5 initializations is plotted against training iterations on a logarithmic scale for estimating two target Hamiltonians in the 8-qubit systems: (a) the long-range disordered Ising model (Eq. (7)) and (b) the Heisenberg model with periodic boundary conditions (Eq. (8)). The red line represents the optimization with thermalized initialization for M = 2, while the blue line shows the optimization with the MBL initialization for M = 24. The shaded region around each line indicates the 20th to 80th percentile range of the relative error.

Next, we discuss the results for the Heisenberg model HXYZ. Here, the ansätze is less structurally aligned with the solution of the form HXYZ. Empirical observations show that the MBL initialization achieves an averaged relative error of 0.11%, significantly outperforming the thermalized initialization, which yields a 7.7% error. We attribute this performance discrepancy to the enhanced expressivity of the MBL initialization. Consequently, for target Hamiltonians that are less structurally aligned with the ansätze, the MBL initialization in the intermediate quench regime is a more suitable candidate.

Combinatorial optimization

Here we consider the Max-Cut problem, a canonical combinatorial optimization task121. The problem asks for a partition of a graph’s vertices into two disjoint sets such that the sum of weights of edges connecting the two sets is maximized. Despite its simple definition, Max-Cut is NP-hard on general graphs121,122.

To solve this using a quantum framework, we map the problem to finding the ground state of an Ising spin glass. By assigning a spin Zi = ± 1 to each vertex, the cut size is maximized when connected spins are anti-aligned (i.e., ZiZj = −1). This is equivalent to minimizing the Ising Hamiltonian122:

where wij are the edge weights defined by the problem instance. We focus on a family of random complete graphs where the connectivity is all-to-all and the weights wij are sampled uniformly from [−1, 1]. This fully connected model with random weights corresponds to the Sherrington-Kirkpatrick spin glass model, which is known to possess a complex energy landscape with many local minima.

The optimization objective is to maximize the cut value, which relates to the Hamiltonian via the linear transformation C(θ) ∝ const − 〈HMC〉. Specifically, the cost function is defined as121,122:

To evaluate the solution quality, we use the approximation ratio

which measures how close the obtained cost value (ansätze-dependent) is to the global maximum \({C}_{\max }\), the ground state of the target problem (ansätze-independent)5.

Figure 8 shows the optimization result after 100 training iterations for graphs with 6 vertices, averaged over 10 random graph instances and 5 initializations (for each strategy) per instance. The thermalized initialization strategy achieves an average approximation ratio of 96.5%, which is slightly lower than the MBL initialization’s ratio of 99.9%.

The average approximation ratio of the Max-Cut problem on 10 random weighted complete graphs with n = 6 vertices is plotted against training iterations. Results are averaged over 5 initialization per graph instance. The red line represents the initialization in the thermalized phase with M = 2, and the blue line represents the MBL phase with M = 22. The shaded area around each line indicates the standard deviation of the approximation ratio.

Furthermore, we empirically observe that while the shallow quenches initialized in the thermalized phase has sufficient expressivity to represent the ground state, the training occasionally becomes trapped in local minima, as indicated by the relatively large shaded variance region. On the other hand, the MBL initialization strategy shows a reliable convergence to the ground state. This observation can be explained by the overparameterization phenomenon78. In particular, the number of parameters used for M = 22 in the n = 6 case significantly exceeds the dimension of the Dynamical Lie Algebra of our ansätze119, which satisfies the lower bound required for the overparametrized regime78. We note, however, that for larger system sizes, exponentially many parameters cannot be practically realized; hence, the interplay between the MBL initialization strategy in the intermediate regime and the local minima traps remains to be explored. We refer readers to Supplementary Note 4 for extended results.

Conclusions

This work proposes a series of analog quench dynamics as an ansätze for VQAs and investigates the interplay between quantum phases in which the ansätze operates and fundamental aspects of VQAs, including expressivity and the occurrence of BPs. Our findings indicate that the dynamical properties of each quantum phase influences how fast expressivity increases and how quickly the loss landscape becomes flat. Specifically, the chaotic dynamics induced by the thermalized phase ansätze lead to maximal expressivity and the onset of BPs at intermediate numbers of quenches, while the MBL phase ansätze reaches this regime much later due to the slower growth of entanglement. Overall, the trends of expressivity increases and variance scaling of the loss landscape closely follow each other, in agreement with the results that Holmes et al. show67.

Based on these observations, we propose an initialization strategy where the ansätze operates in the MBL phase with the number of quenches chosen such that the MBL phase does not yet suffer from BPs and the thermalized phase has already achieved the maximal expressivity. This approach aims to maintain significant geometric features in the loss landscape around the initial training point, thereby preserving initial trainability, while ensuring sufficient expressivity for later training iterations. We note however that although this initialization strategy can help to circumvent BPs, it is generally problem-agnostic. Consequently, the quality of the solution obtained using this strategy cannot be universally guaranteed, and its effectiveness may vary depending on the specific problem or objective function, as shown in the optimization results for VQE problems and a Max-Cut problem in the “Numerical results” subsection in the Results and Supplementary Note 4.

On a related note, recent studies suggest a link between the absence of BPs and the classical surrogatability of the loss landscape21. By allowing an initial data acquisition phase on quantum hardware with non-classical initial states, it may be possible to efficiently reconstruct a BP-free loss landscape for classical optimization98,123,124,125,126,127,128. In this aspect, the MBL initialization in our analog ansätze is expected to be consistent with these claims. Investigating this relationship in details requires both theoretically proving polynomial scaling of the loss variance which can perhaps be achieved by using the framework for generic alternative initializations developed by Mhiri et al.98, as well as carefully analyzing resource scaling for classical simulation with approaches such as tensor-network129,130 or Pauli-back propagation methods131,132,133.

Moreover, while previous works have utilized MBL concepts to facilitate initialization strategies that evade BPs64,65,66, these efforts have primarily focused on digital gate-based quantum circuits, where the entire circuit initialization can often be viewed as a single quench of analog MBL dynamics. Here we distinguish our work by focusing on a series of analog quantum dynamics consisting of multiple quenches, thereby providing a different perspective on leveraging MBL phases in VQAs. Our findings are aligned with, and can be seen as an extension of, other MBL initialization proposals.

It is crucial to emphasize that BP is not the only challenge facing VQAs. For example, an equally important yet understudied problem is the prevalence of poor local minima in the quantum loss landscape14,15,134,135. While we observe empirical evidence of this on the small-scale systems, this is far from a comprehensive study. Previous studies of poor local minima14,14 typically rely on the assumption that the ansätze is constructed from local quantum gates and these results may not directly apply to our analog setting. Further investigation is required to shed the light on how different quantum phases influence the local minima landscape.

Moving forward, we anticipate that analytical tools such as local integrals of motion (LIOMs)39,41 could be useful for analytically substantiating the empirical trends observed in our study. Finally, conducting similar investigation on analog models with other different quantum phases such as time-crystals136,137,138 or topological phases139 is an interesting extension which would deepen our understanding of the role of phases on VQAs. As analog quantum devices continue to advance, implementing and testing experimentally our proposed ansätze and initialization strategy would be a significant step toward practical applications of analog VQAs.

Methods

Analog simulator as a VQA ansätze

We commence by introducing a series of quench dynamics as an ansätze for analog VQAs, serving as a framework to study how the dynamical properties of different quantum phases of matter can influence analog VQA performance.

Given an initial state \(| {\psi }_{0}\rangle\) and some parametrized quantum dynamics U(θ) on n qubits with trainable parameters θ, we consider the loss function which is defined as the expectation value of an observable O

By using a classical optimizer to navigate through the loss function landscape, the task is to optimize the loss function

In this work, the parametrized quantum dynamics is chosen to be a series of M quenches where each quench is a quantum evolution under the system’s Hamiltonian H(θm) characterized by parameters θm, with an evolution time tm. The overall unitary evolution is given by

The model Hamiltonian we study is a disordered Ising spin chain with nearest-neighbor interactions under periodic boundary conditions, given by

where Zi and Xi are the single-qubit Pauli-Z and Pauli-X operators acting on qubit i, respectively, J is the interaction strength, B is the transverse field strength, and \({\{{h}_{i}^{(m)}\}}_{i=1}^{n}\) are the on-site disorder energies at the m-th quench, which are treated as trainable parameters θm. The periodic boundary conditions are implemented by identifying Zn+1 = Z1 in the interaction term. When analyzing the fundamental VQA properties, we fix the evolution time for each quench to be 1/J, but later on for solving actual optimization tasks these times can be treated also as additional trainable parameters. Note that the choice of evolution time is not unique. An alternative timescale of interest is, for example, n/J, which should be sufficient to generate correlations between the first and last qubits in this topology within a single quench. We explore this case in Supplementary Note 5.

We initialize the spin configuration in the all-zero state \(| {\psi }_{0}\rangle =| {{\boldsymbol{0}}}\rangle\) in the computational basis. For each on-site disorder energy, \({h}_{i}^{(m)}\) will initially be drawn randomly from a uniform distribution over the interval \(\left[-\frac{W}{2},\frac{W}{2}\right]\), where W represents the disorder strength. By adjusting the disorder strength, each quench dynamics can explore different phases of matter, as will be discussed in the next subsection.

This model serves as a simplified version of the trapped ion Hamiltonian used in experiments like those from Smith et al.46, but without long-range interactions. It has been employed in previous studies for investigating quantum generative modeling51, phase transitions52, as well as sampling quantum advantage140. While our focus is on this simplified model, we expect that the results obtained will generalize to other systems as well. Indeed, in Supplementary Note 3, we extend our analysis to include the long-range interaction version of the model.

Phases of matter

Depending on the disorder strength W, the long-time evolution under the Hamiltonian in Eq. (16) (i.e., \({lim}_{t\to \infty }{e}^{-iH({{{\boldsymbol{\theta }}}}_{0})t}| {\psi }_{0}\rangle\) for a given disorder configuration θ0) can exhibit two distinct phases of matter: (i) the thermalized phase and (ii) the MBL phase.

In the weak disorder limit, the system thermalizes under its own dynamics to some finite temperature and is said to obey the Eigenstate Thermalization Hypothesis (ETH)33,141,142,143. According to the ETH, the eigenstates of the Hamiltonian behave as thermal states, meaning that any subsystem becomes thermalized due to interactions with the rest of the system. This leads to a volume law for entanglement entropy144, where the entanglement entropy scales linearly with sub-system’s size and the entanglement growth is linear in time.

Conversely, strong disorder can prevent the system from thermalizing, leading to the MBL phase43,145. This non-ergodic behavior is associated to the emergence of LIOMs39, resulting in uncorrelated localized eigenstates38,146. Consequently, the entanglement entropy in the MBL phase grows relatively slow only logarithmically over time, and the dynamics do not erase initial state information40. Notably, the MBL phase is robust against local perturbations and persists in the thermodynamic limit n → ∞.

A standard approach to distinguish these two phases involves analyzing the level statistics of the Hamiltonian146,147. Here, one computes the ratios of consecutive level spacings from the eigenenergies defined as

with Δi = ϵi+1 − ϵi and the eigenenergies \({\{{\epsilon }_{i}\}}_{i}\) are ordered such that ϵi+1 ⩾ ϵi. Then, the distribution of these ratios \({\{{r}_{i}\}}_{i}\) is referred to as the level statistics Pr(r).

In the thermalized phase, the level statistics follows that of the Gaussian Orthogonal Ensemble (GOE):

which characterizes the statistics of eigenvalues of random matrices with the orthogonality constraint. This connection to the random matrix ensemble partially justifies the chaotic behavior of the dynamics in this phase. Additionally, PrGOE(0) = 0 indicates level repulsion, implying that eigenvalues avoid crossing and are correlated.

In contrast, the level statistics in the MBL phase follows the Poisson distribution,

The absence of level repulsion at r = 0 implies that eigenvalues are uncorrelated and can be arbitrarily close to each other, allowing level crossings. This behavior reflects the localized nature of the eigenstates in the MBL phase; localized eigenstates do not overlap significantly, so the energy levels are determined almost independently of one another.

In Fig. 9, we illustrate the two different phases exhibited by the analog model in Eq. (16) using the level statistics (with W = 5J for the thermalized phase, and W = 50J for the MBL phase; both with B = − 2J). Note that in the limit where the disorder is absent W ≈ 0, the model in Eq. (16) becomes equivalent to a system of non-interacting fermions and hence departing from the thermalized phase148. We refer to Supplementary Note 1 for more details of the level statistics with varying disorder strengths.

The histograms display the level statistics of 500 instances of 9-qubit systems governed by the Hamiltonian in Eq. (16) for two different disorder strengths. a With W = 5J, the histogram follows the GOE distribution, indicating that the system is in the thermalized phase characterized by level repulsion and correlated eigenvalues. b With W = 50J, the histogram follows the Poisson distribution, indicating that the system is in the MBL phase where eigenvalues are uncorrelated and level crossings are allowed. These different disorder strengths are used to initialize the parameters in our ansätze for the thermalized and MBL initializations.

Initialization

To associate the notion of quantum phases into our analog VQA framework, we consider two initialization strategies corresponding to the thermalized and MBL phases. First, the thermalized initialization involves setting each quench in Eq. (15) to be in the thermalized phase. Specifically, for each quench, the variational parameters are independently drawn with a disorder strength W such that H(θm) corresponds to the thermalized phase. Similarly, the MBL initialization is defined by choosing the disorder strength W for each quench such that H(θm) corresponds to the MBL phase at long times. We note that in these strategies, we enforce that all quenches correspond to the same phase (either all thermalized or all MBL), but the parameters θm for different quenches are generally different, i.e., \({{{\boldsymbol{\theta }}}}_{m}\ne {{{\boldsymbol{\theta }}}}_{{m}^{{\prime} }}\) for \(m\ne {m}^{{\prime} }\).

Data availability

The data that support the findings of this study are available from the corresponding author upon request.

Code availability

Further implementation details are available from the corresponding authors upon request.

References

Peruzzo, A. et al. A variational eigenvalue solver on a photonic quantum processor. Nat. Commun. 5, 1–7 (2014).

Quantum, G. A. et al. Hartree-Fock on a superconducting qubit quantum computer. Science 369, 1084–1089 (2020).

McArdle, S., Endo, S., Aspuru-Guzik, A., Benjamin, S. C. & Yuan, X. Quantum computational chemistry. Rev. Mod. Phys. 92, 015003 (2020).

Farhi, E., Goldstone, J. & Gutmann, S. A quantum approximate optimization algorithm. arXiv preprint. https://arxiv.org/abs/1411.4028 (2014).

Abbas, A. et al. Challenges and opportunities in quantum optimization. Nat. Rev. Phys. 1–18. https://www.nature.com/articles/s42254-024-00770-9 (2024).

Tangpanitanon, J. et al. Hybrid quantum-classical algorithms for loan-collection optimization with loan-loss provisions. Phys. Rev. Appl. 19, 064001 (2023).

Meglio, A. D. et al. Quantum Computing for High-Energy Physics: State of the Art and Challenges. PRX Quantum. 2016, 073101 (2024).

Schuld, M., Sinayskiy, I. & Petruccione, F. An introduction to quantum machine learning. Contemp. Phys. 56, 172–185 (2015).

Biamonte, J. et al. Quantum machine learning. Nature 549, 195–202 (2017).

Benedetti, M., Lloyd, E., Sack, S. & Fiorentini, M. Parameterized quantum circuits as machine learning models. Quantum Sci. Technol. 4, 043001 (2019).

Cerezo, M., Verdon, G., Huang, H.-Y., Cincio, L. & Coles, P. J. Challenges and opportunities in quantum machine learning. Nat. Comput. Sci. https://www.nature.com/articles/s43588-022-00311-3 (2022).

Cerezo, M. et al. Variational quantum algorithms. Nat. Rev. Phys. 3, 625–644 (2021).

Bharti, K. et al. Noisy intermediate-scale quantum algorithms. Rev. Mod. Phys. 94, 015004 (2022).

Anschuetz, E. R. & Kiani, B. T. Quantum variational algorithms are swamped with traps. Nat. Commun. 13, 7760 (2022).

Anschuetz, E. R. Critical points in quantum generative models. International Conference on Learning Representations. https://openreview.net/forum?id=2f1z55GVQN (2022).

Schreiber, F. J., Eisert, J. & Meyer, J. J. Classical surrogates for quantum learning models. Phys. Rev. Lett. 131, 100803 (2023).

Sweke, R. et al. Potential and limitations of random fourier features for dequantizing quantum machine learning. Quantum 9, 1640 (2025).

Sahebi, M., Barthe, A., Suzuki, Y., Holmes, Z. & Grossi, M. On dequantization of supervised quantum machine learning via random Fourier features. arXiv preprint. https://arxiv.org/abs/2505.15902 (2025).

McClean, J. R., Boixo, S., Smelyanskiy, V. N., Babbush, R. & Neven, H. Barren plateaus in quantum neural network training landscapes. Nat. Commun. 9, 1–6 (2018).

Larocca, M. et al. A review of barren plateaus in variational quantum computing. Nat. Rev. Phys. 3, 625–644 (2025).

Cerezo, M. et al. Does provable absence of barren plateaus imply classical simulability? Nat. Commun. 16, 7907 (2025).

Arrasmith, A., Cerezo, M., Czarnik, P., Cincio, L. & Coles, P. J. Effect of barren plateaus on gradient-free optimization. Quantum 5, 558 (2021).

Fontana, E. et al. Characterizing barren plateaus in quantum ansätze with the adjoint representation. Nat. Commun. 15, 7171 (2024).

Ragone, M. et al. A lie algebraic theory of barren plateaus for deep parameterized quantum circuits. Nat. Commun. 15, 7172 (2024).

Bernien, H. et al. Probing many-body dynamics on a 51-atom quantum simulator. Nature 551, 579–584 (2017).

Zhang, J. et al. Observation of a discrete time crystal. Nature 543, 217–220 (2017).

Gross, C. & Bloch, I. Quantum simulations with ultracold atoms in optical lattices. Science 357, 995–1001 (2017).

Elben, A. et al. Cross-platform verification of intermediate scale quantum devices. Phys. Rev. Lett. 124, 010504 (2020).

Semeghini, G. et al. Probing topological spin liquids on a programmable quantum simulator. Science 374, 1242–1247 (2021).

Braumüller, J. et al. Probing quantum information propagation with out-of-time-ordered correlators. Nat. Phys. 18, 172–178 (2022).

Shaw, A. L. et al. Benchmarking highly entangled states on a 60-atom analogue quantum simulator. Nature 628, 71–77 (2024).

Choi, J. -y et al. Exploring the many-body localization transition in two dimensions. Science 352, 1547–1552 (2016).

Rigol, M., Dunjko, V. & Olshanii, M. Thermalization and its mechanism for generic isolated quantum systems. Nature 452, 854–858 (2008).

Gogolin, C. & Eisert, J. Equilibration, thermalisation, and the emergence of statistical mechanics in closed quantum systems. Rep. Prog. Phys. 79, 056001 (2016).

D’Alessio, L., Kafri, Y., Polkovnikov, A. & Rigol, M. From quantum chaos and eigenstate thermalization to statistical mechanics and thermodynamics. Adv. Phys. 65, 239–362 (2016).

Mori, T., Ikeda, T. N., Kaminishi, E. & Ueda, M. Thermalization and prethermalization in isolated quantum systems: a theoretical overview. J. Phys. B: ., Mol. Opt. Phys. 51, 112001 (2018).

Žnidarič, M., Prosen, T. & Prelovšek, P. Many-body localization in the Heisenberg XXZ magnet in a random field. Phys. Rev. B Condens. Matter Mater. Phys. 77, 064426 (2008).

Pal, A. & Huse, D. A. Many-body localization phase transition. Phys. Rev. b 82, 174411 (2010).

Serbyn, M., Papić, Z. & Abanin, D. A. Local conservation laws and the structure of the many-body localized states. Phys. Rev. Lett. 111, 127201 (2013).

Serbyn, M., Papić, Z. & Abanin, D. A. Universal slow growth of entanglement in interacting strongly disordered systems. Phys. Rev. Lett. 110, 260601 (2013).

Huse, D. A., Nandkishore, R. & Oganesyan, V. Phenomenology of fully many-body-localized systems. Phys. Rev. B 90, 174202 (2014).

Serbyn, M., Papić, Z. & Abanin, D. A. Quantum quenches in the many-body localized phase. Phys. Rev. B 90, 174302 (2014).

Abanin, D. A., Altman, E., Bloch, I. & Serbyn, M. Colloquium: many-body localization, thermalization, and entanglement. Rev. Mod. Phys. 91, 021001 (2019).

Schreiber, M. et al. Observation of many-body localization of interacting fermions in a quasirandom optical lattice. Science 349, 842–845 (2015).

Bordia, P. et al. Coupling identical one-dimensional many-body localized systems. Phys. Rev. Lett. 116, 140401 (2016).

Smith, J. et al. Many-body localization in a quantum simulator with programmable random disorder. Nat. Phys. 12, 907–911 (2016).

Roushan, P. et al. Spectroscopic signatures of localization with interacting photons in superconducting qubits. Science 358, 1175–1179 (2017).

Xu, K. et al. Emulating many-body localization with a superconducting quantum processor. Phys. Rev. Lett. 120, 050507 (2018).

Lukin, A. et al. Probing entanglement in a many-body–localized system. Science 364, 256–260 (2019).

Khaneja, N., Reiss, T., Kehlet, C., Schulte-Herbrüggen, T. & Glaser, S. J. Optimal control of coupled spin dynamics: design of NMR pulse sequences by gradient ascent algorithms. J. Magn. Reson. 172, 296–305 (2005).

Tangpanitanon, J., Thanasilp, S., Dangniam, N., Lemonde, M.-A. & Angelakis, D. G. Expressibility and trainability of parametrized analog quantum systems for machine learning applications. Phys. Rev. Res. 2, 043364 (2020).

Thanasilp, S., Tangpanitanon, J., Lemonde, M.-A., Dangniam, N. & Angelakis, D. G. Quantum supremacy and quantum phase transitions. Phys. Rev. B 103, 165132 (2021).

Meitei, O. R. et al. Gate-free state preparation for fast variational quantum eigensolver simulations. npj Quantum Inf. 7, 155 (2021).

Magann, A. B. et al. From pulses to circuits and back again: a quantum optimal control perspective on variational quantum algorithms. PRX Quantum 2, 010101 (2021).

Michel, A., Grijalva, S., Henriet, L., Domain, C. & Browaeys, A. Blueprint for a digital-analog variational quantum eigensolver using rydberg atom arrays. Phys. Rev. A 107, 042602 (2023).

Liang, Z. et al. Hybrid gate-pulse model for variational quantum algorithms. In Proc. 60th ACM/IEEE Design Automation Conference (DAC) 1–6. https://ieeexplore.ieee.org/abstract/document/10247923 (IEEE, 2023).

Asthana, A. et al. Leakage reduces device coherence demands for pulse-level molecular simulations. Phys. Rev. Appl. 19, 064071 (2023).

De Keijzer, R., Tse, O. & Kokkelmans, S. Pulse based variational quantum optimal control for hybrid quantum computing. Quantum 7, 908 (2023).

Egger, D. J. et al. Pulse variational quantum eigensolver on cross-resonance-based hardware. Phys. Rev. Res. 5, 033159 (2023).

Meirom, D. & Frankel, S. H. Pansatz: pulse-based ansatz for variational quantum algorithms. Front. Quantum Sci. Technol. 2, 1273581 (2023).

Liang, Z. et al. Napa: intermediate-level variational native-pulse ansatz for variational quantum algorithms. IEEE Transactions on Computer-Aided Design of Integrated Circuits and Systems. https://ieeexplore.ieee.org/abstract/document/10402000 (2024).

Parra-Rodriguez, A., Lougovski, P., Lamata, L., Solano, E. & Sanz, M. Digital-analog quantum computation. Phys. Rev. A 101, 022305 (2020).

Garcia-de Andoin, M. et al. Digital-analog quantum computation with arbitrary two-body Hamiltonians. Phys. Rev. Res. 6, 013280 (2024).

Park, C.-Y., Kang, M. & Huh, J. Hardware-efficient ansatz without barren plateaus in any depth. arXiv preprint. https://arxiv.org/abs/2403.04844 (2024).

Cao, C., Zhou, Y., Tannu, S., Shannon, N. & Joynt, R. Exploiting many-body localization for scalable variational quantum simulation. Quantum 9, 1942 (2025).

Xin, L. & Yin, Z.-q. Improve variational quantum eigensolver by many-body localization. arXiv preprint. https://arxiv.org/abs/2407.11589 (2024).

Holmes, Z., Sharma, K., Cerezo, M. & Coles, P. J. Connecting ansatz expressibility to gradient magnitudes and barren plateaus. PRX Quantum 3, 010313 (2022).

Grant, E., Wossnig, L., Ostaszewski, M. & Benedetti, M. An initialization strategy for addressing barren plateaus in parametrized quantum circuits. Quantum 3, 214 (2019).

Zhang, K., Liu, L., Hsieh, M.-H. & Tao, D. Escaping from the barren plateau via Gaussian initializations in deep variational quantum circuits. In Advances in Neural Information Processing Systems. https://openreview.net/forum?id=jXgbJdQ2YIy (2022).

Sim, S., Johnson, P. D. & Aspuru-Guzik, A. Expressibility and entangling capability of parameterized quantum circuits for hybrid quantum-classical algorithms. Adv. Quantum Technol. 2, 1900070 (2019).

Thanasilp, S., Wang, S., Cerezo, M. & Holmes, Z. Exponential concentration in quantum kernel methods. Nat. Commun. 15, 5200 (2024).

Pérez-Salinas, A., Cervera-Lierta, A., Gil-Fuster, E. & Latorre, J. I. Data re-uploading for a universal quantum classifier. Quantum 4, 226 (2020).

Schuld, M., Sweke, R. & Meyer, J. J. Effect of data encoding on the expressive power of variational quantum-machine-learning models. Phys. Rev. A 103, 032430 (2021).

Gil-Fuster, E., Eisert, J. & Dunjko, V. On the expressivity of embedding quantum kernels. Mach. Learn.: Sci. Technol. 5, 025003 (2024).

Gan, B. Y., Leykam, D. & Thanasilp, S. A unified framework for trace-induced quantum kernels. arXiv preprint. https://arxiv.org/abs/2311.13552 (2023).

Haug, T., Bharti, K. & Kim, M. S. Capacity and quantum geometry of parametrized quantum circuits. PRX Quantum 2, 040309 (2021).

Abbas, A. et al. The power of quantum neural networks. Nat. Comput. Sci. 1, 403–409 (2021).

Larocca, M., Ju, N., García-Martín, D., Coles, P. J. & Cerezo, M. Theory of overparametrization in quantum neural networks. Nat. Comput. Sci. 3, 542—-551 (2023).

Marrero, C. O., Kieferová, M. & Wiebe, N. Entanglement-induced barren plateaus. PRX Quantum 2, 040316 (2021).

Sharma, K., Cerezo, M., Cincio, L. & Coles, P. J. Trainability of dissipative perceptron-based quantum neural networks. Phys. Rev. Lett. 128, 180505 (2022).

Wang, S. et al. Noise-induced barren plateaus in variational quantum algorithms. Nat. Commun. 12, 1–11 (2021).

Arrasmith, A., Holmes, Z., Cerezo, M. & Coles, P. J. Equivalence of quantum barren plateaus to cost concentration and narrow gorges. Quantum Sci. Technol. 7, 045015 (2022).

Larocca, M. et al. Diagnosing Barren Plateaus with tools from quantum optimal control. Quantum 6, 824 (2022).

Cerezo, M., Sone, A., Volkoff, T., Cincio, L. & Coles, P. J. Cost function dependent barren plateaus in shallow parametrized quantum circuits. Nat. Commun. 12, 1–12 (2021).

Khatri, S. et al. Quantum-assisted quantum compiling. Quantum 3, 140 (2019).

Rudolph, M. S. et al. Trainability barriers and opportunities in quantum generative modeling. npj Quantum Inf. 10, 116 (2024).

Kieferova, M., Carlos, O. M. & Wiebe, N. Quantum generative training using rényi divergences. arXiv preprint. https://arxiv.org/abs/2106.09567 (2021).

Thanaslip, S., Wang, S., Nghiem, N. A., Coles, P. J. & Cerezo, M. Subtleties in the trainability of quantum machine learning models. Quantum Mach. Intell. 5, 21 (2023).

Holmes, Z. et al. Barren plateaus preclude learning scramblers. Phys. Rev. Lett. 126, 190501 (2021).

Martín, E. C., Plekhanov, K. & Lubasch, M. Barren plateaus in quantum tensor network optimization. Quantum 7, 974 (2023).

Letcher, A., Woerner, S. & Zoufal, C. Tight and efficient gradient bounds for parameterized quantum circuits. Quantum 8, 1484 (2024).

Anschuetz, E. R. A unified theory of quantum neural network loss landscapes. arXiv preprint. https://arxiv.org/abs/2408.11901 (2024).

Chang, S. Y., Thanasilp, S., Saux, B. L., Vallecorsa, S. & Grossi, M. Latent style-based quantum gan for high-quality image generation. arXiv preprint. https://arxiv.org/abs/2406.02668 (2024).

Xiong, W. et al. On fundamental aspects of quantum extreme learning machines. Quantum Mach. Intell. 7, 20 (2025).

Crognaletti, G., Grossi, M. & Bassi, A. Estimates of loss function concentration in noisy parametrized quantum circuits. arXiv preprint. https://arxiv.org/abs/2410.01893 (2024).

Mao, R., Tian, G. & Sun, X. Towards determining the presence of barren plateaus in some chemically inspired variational quantum algorithms. Commun. Phys. 7, 342 (2024).

Deshpande, A. et al. Dynamic parameterized quantum circuits: expressive and barren-plateau free. arXiv preprint. https://arxiv.org/abs/2411.05760 (2024).

Mhiri, H. et al. A unifying account of warm start guarantees for patches of quantum landscapes. arXiv preprint. https://arxiv.org/abs/2502.07889 (2025).

Xiong, W. et al. Role of scrambling and noise in temporal information processing with quantum systems. arXiv preprint. https://arxiv.org/abs/2505.10080 (2025).

Patti, T. L., Najafi, K., Gao, X. & Yelin, S. F. Entanglement devised barren plateau mitigation. Phys. Rev. Res. 3, 033090 (2021).

Uvarov, A. & Biamonte, J. D. On barren plateaus and cost function locality in variational quantum algorithms. J. Phys. A Math. Theor. 54, 245301 (2021).

Mele, A. A. et al. Noise-induced shallow circuits and absence of barren plateaus. arXiv preprint. https://arxiv.org/abs/2403.13927 (2024).

Kübler, J., Buchholz, S. & Schölkopf, B. The inductive bias of quantum kernels. Adv. Neural Inf. Process. Syst. 34, 12661–12673 (2021).

Shaydulin, R. & Wild, S. M. Importance of kernel bandwidth in quantum machine learning. Phys. Rev. A 106, 042407 (2022).

Huang, H.-Y. et al. Power of data in quantum machine learning. Nat. Commun. 12, 1–9 (2021).

Suzuki, Y. & Li, M. Effect of alternating layered ansäatze on trainability of projected quantum kernels. Phys. Rev. A 110, 012409 (2024).

Suzuki, Y., Kawaguchi, H., Yamamoto, N. Quantum Fisher kernel for mitigating the vanishing similarity. Quantum Sci. Technol. 9, 035050 (2024).

Gelman, M. A survey of methods for mitigating barren plateaus for parameterized quantum circuits. arXiv preprint. https://arxiv.org/abs/2406.14285 (2024).

Volkoff, T. & Coles, P. J. Large gradients via correlation in random parameterized quantum circuits. Quantum Sci. Technol. 6, 025008 (2021).

Skolik, A., McClean, J. R., Mohseni, M., Van Der Smagt, P. & Leib, M. Layerwise learning for quantum neural networks. Quantum Mach. Intell. 3, 5 (2021).

Gard, B. T. et al. Efficient symmetry-preserving state preparation circuits for the variational quantum eigensolver algorithm. npj Quantum Inf. 6, 1–9 (2020).

Zhang, F. et al. Shallow-circuit variational quantum eigensolver based on symmetry-inspired hilbert space partitioning for quantum chemical calculations. Phys. Rev. Res. 3, 013039 (2021).

Lyu, C., Xu, X., Yung, M.-H. & Bayat, A. Symmetry enhanced variational quantum spin eigensolver. Quantum 7, 899 (2023).

Meyer, J. J. et al. Exploiting symmetry in variational quantum machine learning. PRX Quantum 4, 010328 (2023).

Verdon, G. et al. Learning to learn with quantum neural networks via classical neural networks. arXiv preprint. https://arxiv.org/abs/1907.05415 (2019).

Lyu, C., Montenegro, V. & Bayat, A. Accelerated variational algorithms for digital quantum simulation of many-body ground states. Quantum 4, 324 (2020).

Liu, H.-Y., Sun, T.-P., Wu, Y.-C., Han, Y.-J. & Guo, G.-P. Mitigating barren plateaus with transfer-learning-inspired parameter initializations. N. J. Phys. 25, 013039 (2023).

Aghaei R., Tafreshi, S. B., Holmes, Z., Thanasilp, Z. Pitfalls when tackling the exponential concentration of parameterized quantum models. Quantum Sci. Technol. 11, 015049 (2026).

Wiersema, R., Kökcü, E., Kemper, A. F. & Bakalov, B. N. Classification of dynamical Lie algebras of 2-local spin systems on linear, circular and fully connected topologies. npj Quantum Inf. 10, 110 (2024).

Hu, Hong-Ye, et al. “Universal dynamics with globally controlled analog quantum simulators.” arXiv preprint arXiv:2508.19075 (2025).

Haribara, Y., Utsunomiya, S. & Yamamoto, Y. A Coherent Ising Machine for MAX-CUT Problems: Performance Evaluation against Semidefinite Programming and Simulated Annealing, 251–262. https://doi.org/10.1007/978-4-431-55756-2_12 (Springer Japan, Tokyo, 2016).

Lucas, A. Ising formulations of many NP problems. Front. Phys. 2, 5 (2014).

Puig, R., Drudis, M., Thanasilp, S. & Holmes, Z. Variational quantum simulation: a case study for understanding warm starts. PRX Quantum 6, 010317 (2025).

Lerch, S. et al. Efficient quantum-enhanced classical simulation for patches of quantum landscapes. arXiv preprint. https://arxiv.org/abs/2411.19896 (2024).

Pesah, A. et al. Absence of barren plateaus in quantum convolutional neural networks. Phys. Rev. X 11, 041011 (2021).

Bermejo, P. et al. Quantum convolutional neural networks are (effectively) classically simulable. arXiv preprint. https://arxiv.org/abs/2408.12739 (2024).

Goh, M. L., Larocca, M., Cincio, L., Cerezo, M. & Sauvage, F. Lie-algebraic classical simulations for quantum computing. Phys. Rev. Res. 7, 033266 (2025).

Schatzki, L., Larocca, M., Nguyen, Q. T., Sauvage, F. & Cerezo, M. Theoretical guarantees for permutation-equivariant quantum neural networks. npj Quantum Inf. 10, 12 (2024).

Pollmann, F., Khemani, V., Cirac, J. I. & Sondhi, S. L. Efficient variational diagonalization of fully many-body localized hamiltonians. Phys. Rev. B 94, 041116 (2016).

Wahl, T. B., Pal, A. & Simon, S. H. Efficient representation of fully many-body localized systems using tensor networks. Phys. Rev. X 7, 021018 (2017).

Rudolph, M. S., Fontana, E., Holmes, Z. & Cincio, L. Classical surrogate simulation of quantum systems with LOWESA. arXiv preprint. https://arxiv.org/abs/2308.09109 (2023).

Miller, A. et al. Simulation of fermionic circuits using majorana propagation. arXiv preprint. https://doi.org/10.48550/arXiv.2503.18939 (2025).

Rudolph, M. S., Jones, T., Teng, Y., Angrisani, A. & Holmes, Z. Pauli propagation: a computational framework for simulating quantum systems. arXiv preprint. https://arxiv.org/abs/2505.21606 (2025).

Kiani, B. T., Lloyd, S. & Maity, R. Learning unitaries by gradient descent. arXiv preprint. https://arxiv.org/abs/2001.11897 (2020).

Wiersema, R. et al. Exploring entanglement and optimization within the Hamiltonian variational ansatz. PRX Quantum 1, 020319 (2020).

Wilczek, F. Quantum time crystals. Phys. Rev. Lett. 109, 160401 (2012).

Kongkhambut, P. et al. Observation of a continuous time crystal. Science 377, 670–673 (2022).

Zaletel, M. P. et al. Colloquium: quantum and classical discrete time crystals. Rev. Mod. Phys. 95, 031001 (2023).

Wen, X.-G. Colloquium: zoo of quantum-topological phases of matter. Rev. Mod. Phys. 89, 041004 (2017).

Tangpanitanon, J., Thanasilp, S., Lemonde, M.-A., Dangniam, N. & Angelakis, D. G. Signatures of a sampling quantum advantage in driven quantum many-body systems. Quantum Sci. Technol. 8, 025019 (2023).

Deutsch, J. M. Quantum statistical mechanics in a closed system. Phys. Rev. A 43, 2046 (1991).

Srednicki, M. The approach to thermal equilibrium in quantized chaotic systems. J. Phys. A: Math. Gen. 32, 1163 (1999).

Deutsch, J. M. Eigenstate thermalization hypothesis. Rep. Prog. Phys. 81, 082001 (2018).

Eisert, J., Cramer, M. & Plenio, M. B. Colloquium: area laws for the entanglement entropy. Rev. Mod. Phys. 82, 277 (2010).

Alet, F. & Laflorencie, N. Many-body localization: an introduction and selected topics. Comptes Rendus Phys. 19, 498–525 (2018).

Oganesyan, V. & Huse, D. A. Localization of interacting fermions at high temperature. Phys. Rev. b 75, 155111 (2007).

Atas, Y. Y., Bogomolny, E., Giraud, O. & Roux, G. Distribution of the ratio of consecutive level spacings in random matrix ensembles. Phys. Rev. Lett. 110, 084101 (2013).

Russomanno, A., Santoro, G. E. & Fazio, R. Entanglement entropy in a periodically driven Ising chain. J. Stat. Mech. Theory Exp. 2016, 073101 (2016).

Acknowledgements

The authors gratefully thank Zoë Holmes and Marco Cerezo for valuable discussions. T.C. and S.T. acknowledge the funding support from the NSRF via the Program Management Unit for Human Resources & Institutional Development, Research and Innovation [grant number B39G680007]. The authors acknowledge high performance computing resources including NVIDIA A100 GPUs from Chula Intelligent and Complex Systems Lab, Faculty of Science, Chulalongkorn University, Thailand. S.T. is partially supported from the Sandoz Family Foundation-Monique de Meuron program for Academic Promotion. S.T. further acknowledges the grants for development of new faculty staff, Ratchadaphiseksomphot Fund, Chulalongkorn University [grant number 3230120336 DNS_68_052_2300_012], as well as funding from National Research Council of Thailand (NRCT) [grant number N42A680126]. This Research is funded by Thailand Science research and Innovation Fund Chulalongkorn University (IND_FF_69_258_2300_062).

Author information

Authors and Affiliations

Contributions

S.T. and T.C. conceived the project. K.S., S.T., and T.C. designed the numerical experiments. K.S. developed the numerical packages and implementations. All authors contributed to writing the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Communications Physics thanks the anonymous reviewers for their contribution to the peer review of this work. [A peer review file is available].

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Srimahajariyapong, K., Thanasilp, S. & Chotibut, T. Connecting phases of matter to the flatness of the loss landscape in analog variational quantum algorithms. Commun Phys 9, 111 (2026). https://doi.org/10.1038/s42005-026-02528-4

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s42005-026-02528-4