Abstract

Human motion intent prediction (HMIP) combines data sources, feature extraction, modeling, and prediction algorithms, and is key to enabling intelligent wearable systems to provide AI-driven assistance and protection. HMIP has been intensively explored, and this review provides a timely and comprehensive overview of the field. It covers several main aspects: biomechanical features of human motion, architecture of intelligent wearable systems, data processing and algorithm design, application scenarios, and challenges.

Introduction

With the rapid development of artificial intelligence and sensing technologies, human motion intent prediction (HMIP) has become a critical research direction in the fields of human-computer interaction and intelligent control. HMIP systems can infer users’ behavioral intentions or movement requirements by collecting and analyzing human biological signals and motion data, thereby enabling natural interaction between humans and devices. Intelligent wearable systems are becoming more capable, flexible, and multimodal, integrating advanced sensors, signal acquisition modules, and wireless communication modules. These sensors enable real-time capture of user motion data and physiological signals such as joint angles1,2, acceleration3,4, electromyography (EMG)5,6,7, electrocardiography (ECG)8,9, and electroencephalography (EEG)10, providing high-quality and diverse raw data for HMIP.

HMIP has evolved rapidly from traditional signal processing to modern approaches driven by intelligent algorithms. Early studies primarily focused on signal processing, feature extraction, and simple pattern recognition algorithms, typically employing data preprocessing combined with basic machine learning algorithms such as Linear Discriminant Analysis (LDA) and Support Vector Machines (SVM) for prediction11,12,13,14. However, these methods exhibit limitations when handling complex nonlinear signals or high-dimensional dynamic data. Deep learning technologies, including Convolutional Neural Networks (CNN)15,16, Recurrent Neural Networks (RNN)17,18,19, and Long Short-Term Memory networks (LSTM)20,21,22, have been widely applied to motion intent prediction. Deep learning methods can automatically extract spatiotemporal features from biological signals, capture complex dynamic changes, and demonstrate superior performance in multimodal data fusion processing and prediction accuracy23,24,25. Benefiting from advancements in intelligent wearable systems and artificial intelligence (AI), HMIP is progressively advancing toward high precision, multimodality, and real-time capability26,27,28.

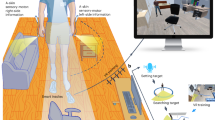

HMIP technology has permeated multidimensional application scenarios. In human-computer interaction, HMIP significantly enhances the naturalness and efficiency of interactions. For instance, in prosthetics (Fig. 1a)29,30,31, exoskeletons (Fig. 1b)32,33,34,35, and robotic assistance systems (Fig. 1c, e)36,37,38,39,40, including adaptive control strategies from the Herr group at MIT41 and high-density EMG interfaces from Imperial College London42, precise prediction of user intent enables faster and more intuitive device responses. In sports training, HMIP alerts users to incorrect postures, reduces injury risks, and offers scientific guidance (Fig. 1d)43,44,45,46. In the medical field, HMIP drives devices to assist in completing movements, providing personalized therapy and rehabilitation (Fig. 1f)47,48,49. Additionally, immersive interactions in virtual reality (Fig. 1g, h)50,51 and driver behavior monitoring in intelligent vehicles (Fig. 1i)52,53,54 rely on HMIP technology to accurately capture complex motion intentions.

a Implanted muscle sensors and HMIP algorithms improve prosthetic control to create high-performance bionic limbs, reprinted/adapted with permission from ref. 31. b Multimodal sensors enable more accurate intent prediction, enhancing interactive control of lower-limb exoskeletons, reprinted/adapted with permission from ref. 35. c Robots assist humans in close-range operations through HMIP systems, reprinted/adapted with permission from ref. 39. d Predicting human motion intent via joint angles to alert incorrect postures, reprinted/adapted with permission from ref. 46. e Assistive robots rapidly respond to tasks by anticipating human actions, reprinted/adapted with permission from ref. 40. f Wearable ECL-TVS for finger rehabilitation monitoring, reprinted/adapted with permission from ref. 49. g Integrating HMIP technology with tactile sensors to achieve rapid sensing for immersive interaction, reprinted/adapted with permission from ref. 51. h Tactile-sensor-based HMIP interaction enhancing immersive virtual reality (VR) experiences, reprinted/adapted with permission from ref. 51. i HMIP-based prediction of driver behavior for interactive planning with vehicle systems, reprinted/adapted with permission from ref. 54.

Despite the significant progress achieved, HMIP research still faces multifaceted challenges. First, the selection of biomechanical features lacks standardized frameworks, with significant discrepancies in quantification criteria for key biomechanical parameters across studies, limiting model generalizability in cross-scenario applications. Second, wearable systems face trade-offs in sensor performance, and multimodal fusion remains at the stage of superficial feature concatenation, neglecting critical issues such as intermodal heterogeneity, redundant or missing information, and computational complexity. In addition, algorithms exhibit insufficient scenario adaptability: there are no systematic guidelines for choosing between traditional machine learning and deep learning methods with respect to real-time performance, interpretability, and computational efficiency, which constrains research in resource-limited scenarios.

This review provides a comprehensive overview of the published work reported in the past decade. It covers biomechanical features of human motion, wearable sensors, algorithms for HMIP, devices, system integration, and wearable applications as well as the challenges.

Biomechanical features of the human motion

Basic biomechanical features

Human motion is driven by multiple interconnected mechanical factors. Biomechanical features reflect the fundamental motion modes and behavioral traits55,56. These features span three core scales, from macroscopic to microscopic: the whole body (system level), joints (local level), and muscles (tissue level). The synergistic interactions among different biomechanical features form a complete information chain from microscopic tissue deformation to macroscopic behavioral output, providing robust data support for HMIP.

At the system level, body acceleration is the core indicator of human motion. Its spatiotemporal evolution is directly related to motion stability and intent transition. This provides biomechanical precursor signals for fall risk grading models and gait trajectory prediction. For example, gait trajectory prediction based on gravitational acceleration shows that global motion characteristics can provide key precursor signals for fall risk assessment (Fig. 2a)57, while balance recovery analysis through trunk acceleration further reveals the role of motion intent in dynamic regulation (Fig. 2b)58.

a Predicting gait trajectories via a gravitational acceleration estimation procedure, reprinted/adapted with permission from ref. 57. b Predicting human motion and balance recovery responses through trunk acceleration, reprinted/adapted with permission from ref. 58. c Capturing the primary skeletal joint matrix to record whole-body motion behavior for disease progression prediction, reprinted/adapted with permission from ref. 59. d Analyzing elbow joint activities using an anatomic axis-based coordinate system, reprinted/adapted with permission from ref. 60. e Capturing frame sequences of motion data to analyze movement states, reprinted/adapted with permission from ref. 61. f Performing elbow gesture recognition with a joint-sensing elbow sleeve and waveform visualization, reprinted/adapted with permission from ref. 62. g Achieving dynamic joint motion control through a muscle tactile device, reprinted/adapted with permission from ref. 63. h Assessing gesture variation trends via a forearm-wrist surface electromyography (sEMG) wearable device, reprinted/adapted with permission from ref. 64. i Enhancing human walking robustness using muscle pre-activation techniques, reprinted/adapted with permission from ref. 65.

At the local level, the temporal variation of joint angle and angular velocity caused by joint motion constructs the spatiotemporal encoder of motion intent. As shown in Fig. 2c, motion behavior can be effectively recognized through the capture of skeletal joint matrices59, and analysis based on the anatomical axis coordinate system can characterize joint activity patterns (Fig. 2d)60. This can more finely reveal the angular variation under joint degree-of-freedom constraints, directly mapping the execution trajectory of specific motion patterns. In addition, the introduction of joint action frame sequences reveals the dynamic evolution trend of motion intent (Fig. 2e)61, while the combination with elbow sensing devices further improves the accuracy of gesture recognition (Fig. 2f)62.

At the tissue level, muscle activation characteristics reveal the transformation process from neural drive to biomechanical output, and muscle strength provides clues of motion intensity for intent prediction. As shown in Fig. 2g, dynamic joint activity control can be achieved through muscle haptic devices63, while gesture trend analysis based on surface EMG (sEMG) signals demonstrates the value of muscle layer features in prediction (Fig. 2h)64. It is worth noting that muscle pre-activation technology plays an important role in improving the robustness of human walking (Fig. 2i)65. This is a force transmission process dominated by muscle tissue, revealing the physiological essence of microscopic analysis of motion intent.

Body acceleration

As a global parameter, body acceleration correlates with motion intent through two key mechanisms: the relative stability of the vertical acceleration of the human body in daily activities, and the feature mutation of acceleration in special movements. This paper designates these as the center of gravity stability mechanism and intent transition mechanism. The human center of gravity exhibits corresponding sway patterns across different movement states66, forming the center of gravity stability mechanism. This mechanism reflects movement states and intensity through rhythmic variations during motion. Specifically, the extrema and variation frequency of acceleration can identify and distinguish between the stance and swing phases of gait66. Moreover, the strong correlation between vertical acceleration peaks and ground reaction force (GRF) reflects the energy output efficiency of lower limb muscles67, which can be used to quantify motor ability and assist rehabilitation training (Fig. 3a)68. A common application is gait pattern recognition, where acceleration signals from different body parts are synthesized into whole-body gait activity, and gait speed is estimated by combining with time-series data (Fig. 3b)69. Furthermore, by analyzing typical action sequences in daily life using acceleration components (Fig. 3c)58, it is possible to reveal motion disturbance features and establish a matrix model to achieve fall monitoring (Fig. 3d)58.

a Quantifying motor capabilities using vertical acceleration to monitor health status and assist exercise rehabilitation, reprinted/adapted with permission from ref. 68; b Synthesizing the gait activity of the entire human body from acceleration at multiple locations, and estimating gait speed from time-series data, reprinted/adapted with permission from ref. 69; c Analyzing typical activities of daily living sequences using angular acceleration components, reprinted/adapted with permission from ref. 58; d Establishing a matrix scheme for movement perturbation detection based on body acceleration for fall monitoring, reprinted/adapted with permission from ref. 58; e Capturing motion detail trajectories through signal coupling of acceleration and angular velocity, reprinted/adapted with permission from ref. 74.

The initiation and termination of human motion are accompanied by acceleration and deceleration phases. Based on this, an intent transition mechanism can be established, providing biomechanical precursor signals for changes in movement direction. Relevant studies have shown that trunk acceleration exhibits characteristic mutations approximately 200 ms before sudden movements (such as abrupt stops or turns)70,71,72. This not only enables earlier recognition of instantaneous gait responses in daily life73, but also supports action prediction in professional sports74. For example, by coupling acceleration and angular velocity signals, detailed motion trajectories can be captured, thereby achieving higher precision in motion analysis (Fig. 3e)74.
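The intent transition mechanism described above can be illustrated with a minimal sketch: a sliding-window detector that flags the first sample at which trunk acceleration departs sharply from its recent rhythmic baseline. This is not the method of the cited studies. The function name `detect_transition`, the 100 Hz sampling rate, the window length, the threshold factor `k`, and the synthetic signal are all assumptions chosen for illustration.

```python
import math
from statistics import mean, stdev

def detect_transition(acc, window=50, k=4.0):
    """Return the first index whose value deviates from the mean of the
    preceding `window` samples by more than k standard deviations,
    or None if no sample does."""
    for i in range(window, len(acc)):
        baseline = acc[i - window:i]
        mu = mean(baseline)
        sigma = max(stdev(baseline), 1e-6)  # guard against a perfectly flat baseline
        if abs(acc[i] - mu) > k * sigma:
            return i
    return None

FS = 100  # assumed sampling rate in Hz

# Synthetic vertical trunk acceleration (m/s^2): a rhythmic 2 Hz sway
# around gravity, followed by an abrupt change (e.g. a sudden stop).
steady = [9.8 + 0.1 * math.sin(2 * math.pi * 2 * n / FS) for n in range(200)]
abrupt = [6.8] * 50

onset_index = detect_transition(steady + abrupt)
onset_time_s = onset_index / FS
print(onset_index, onset_time_s)
```

In a real system the threshold and window would need tuning per user and per sensor placement, and the detection time would be compared against the movement event to estimate how much lead time the precursor signal actually provides.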

Body acceleration serves as a core mechanical indicator at the human motion system level, revealing macroscopic dynamic principles of human movement by analyzing spatiotemporal evolution of holistic motion parameters. This spatiotemporal evolution fundamentally represents higher-order functional expressions of the human center of gravity trajectory. A representative example is the relatively stable sway pattern of the human center of gravity during movement66, where abrupt motions alter its trajectory. This enables judgment of motion trends and intent prediction. Body acceleration acts as the mechanical precursor signal in such studies, reflecting intrinsic mechanisms of joint load distribution, energy transfer, and movement pattern stability during human motion75. Consequently, body acceleration is widely applied in HMIP-related domains including gait prediction76, fall detection77, and health assessment66,78.

Angles, angular velocities and moments of joints

Joint motion is the core of human kinematics research, and its bone-lever mechanics support fine-grained analysis of motion intention1,79. The predictive value of joint parameters (angles, angular velocities, and moments) stems from the specificity of their variation trajectories: their curves exhibit characteristic peak trajectories under different motion states, thereby constructing a mechanical database of motion patterns46,80. Joint parameters not only define the biomechanical boundaries of human joint motion but also allow joint movements to be predicted and corrected through dynamic pattern analysis81. For example, the knee flexion angle reaches a peak (approximately 72°) in the late stance phase of gait, which can serve as a basis to preliminarily distinguish normal gait from pathological gait82.

Each joint of the human body has a specific motion form (Fig. 4a)83. Based on the degree-of-freedom characteristics of each joint, targeted motion prediction and assistance schemes can be further formed. For example, the torque-based flexion-extension symmetry of the hip joint provides key mechanical evidence for the design of gait assistance strategies, and when combined with exoskeleton systems, can achieve prediction and assistance of walking (Fig. 4b)84. In knee joint analysis, by establishing an anatomical coordinate system to identify the motion axis, continuous monitoring and prediction of the angle can be realized (Fig. 4c)85. Similarly, for ankle joint flexion and extension movements, wearable monitoring devices developed for this purpose provide new pathways for lower limb motion intention prediction (Fig. 4d)86. In the upper limb, the small deviations of the shoulder joint rotation axis can be estimated through mechanical models, thereby capturing complex shoulder motion patterns (Fig. 4e)87. Meanwhile, elbow joint motion can be quantified through a sensor coordinate system based on anatomical axes, enhancing the stability of motion capture (Fig. 4f)60. More refined motion recognition can even be extended to the hand, where the combination of joint anatomical structure and waveform features enables dynamic analysis and classification of different gestures (Fig. 4g)62.

a Specific articulation patterns of human joints, reprinted/adapted with permission from ref. 83; b Using ultra-lightweight robotic hip exoskeletons to predict and assist walking, reprinted/adapted with permission from ref. 84; c Monitoring knee joint motion using an axis-identification approach within a coordinate system, reprinted/adapted with permission from ref. 85; d Monitoring device for ankle flexion and extension movements, reprinted/adapted with permission from ref. 86; e Estimating shoulder joint motion based on deflection of joint rotation axes, reprinted/adapted with permission from ref. 87; f Anatomic axis-based sensor coordinate system at the elbow joint, reprinted/adapted with permission from ref. 60; g Joint anatomy and waveform visualizations for different hand gestures, reprinted/adapted with permission from ref. 62.

Muscle activation and strength

Muscle activation underlies joint motion constrained by osseous structures and serves as the origin of the neuromuscular transmission chain, converting neural impulses into biomechanical output that directly determines muscle strength and the dynamic range of joint torque. As shown in Fig. 5a, muscle activation and control are primarily determined by four key components: the motor cortex, motor neurons, neuromuscular junctions, and muscle fibers88. The amplitude of muscle fiber length changes varies with joint angles, movement force, and posture83, while its contraction intensity and frequency reflect different movement phases89,90. In the two most common human gait patterns (walking and running), muscles employ similar biomechanical mechanisms to provide support and forward propulsion. However, during running, the contribution of the soleus to forward progression decreases, while the knee extensors exhibit greater strength output91.

a Four key components of muscle control: motor cortex, motor neurons, neuromuscular junctions, and muscle fibers, reprinted/adapted with permission from ref. 88; b Monitoring muscle activation levels via sensing electrodes, reprinted/adapted with permission from ref. 92; c Real-time measurement of muscle strength to enhance athletic performance and rehabilitation processes, reprinted/adapted with permission from ref. 95; d Enhancing prosthetic control through surface electromyography (sEMG) sensors specifically designed for amputees, reprinted/adapted with permission from ref. 92.

It is worth noting that neural-system-based sensing electrodes can monitor the degree of muscle activation (Fig. 5b)92, and the spatiotemporal characteristics of this activation provide lead-time biomechanical signals for HMIP. Studies have revealed that muscles exhibit characteristic burst activation approximately 100 ms before movement initiation, and the high correlation between activation slope and muscle activity makes it a sensitive indicator for motion prediction93,94. Based on this feature, real-time measurement of muscle strength has been proven to enhance both athletic performance and rehabilitation outcomes (Fig. 5c)95, while sEMG sensors developed for amputees have also significantly improved prosthetic control capability (Fig. 5d)92. In complex motion scenarios, introducing EMG into time-domain joint analysis based on IMUs can improve the prediction accuracy of HMIP by more than 10%96,97,98, highlighting the unique value of muscle activity parameters in the analysis of complex scenarios.
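The pre-movement burst and activation slope described above can be sketched as follows. This is a minimal illustration, not the procedure of the cited studies: the EMG envelope is taken as a rectified, moving-averaged signal, onset is declared when the envelope exceeds the resting mean plus `k` resting standard deviations, and the slope is the envelope rise over a short horizon after onset. The function names, the 1 kHz sampling rate, the window sizes, and the synthetic signal are all assumptions.

```python
import math
from statistics import mean, pstdev

def envelope(emg, window=20):
    """Rectify the signal and smooth it with a causal moving average."""
    rect = [abs(v) for v in emg]
    env = []
    for i in range(len(rect)):
        seg = rect[max(0, i - window + 1):i + 1]
        env.append(sum(seg) / len(seg))
    return env

def detect_onset(env, rest_samples=200, k=3.0):
    """First index after the rest period where the envelope exceeds
    rest mean + k * rest standard deviation; None if never exceeded."""
    rest = env[:rest_samples]
    threshold = mean(rest) + k * pstdev(rest)
    for i in range(rest_samples, len(env)):
        if env[i] > threshold:
            return i
    return None

def activation_slope(env, onset, fs, horizon=50):
    """Average envelope rise per second over `horizon` samples after onset."""
    end = min(onset + horizon, len(env) - 1)
    if end == onset:
        return 0.0
    return (env[end] - env[onset]) / ((end - onset) / fs)

FS = 1000  # assumed sampling rate in Hz

# Synthetic sEMG: 300 ms of low-amplitude resting activity, then a burst
# whose amplitude ramps up linearly (arbitrary units).
rest = [0.01 * (1 + 0.5 * math.sin(n)) * (-1) ** n for n in range(300)]
burst = [(0.01 + 0.002 * (m + 1)) * (-1) ** m for m in range(200)]

env = envelope(rest + burst)
onset = detect_onset(env)
slope = activation_slope(env, onset, FS)
print(onset, slope)
```

The detected onset lags the true start of the burst by a few samples because of the smoothing window; in practice this lag has to be weighed against the lead time that the pre-activation signal offers.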

Motion mode and wearable sensors

Motion mode with sensor deployment

Figure 6 shows the common sensors and their placement sites, including the limbs, soles of the feet, and waist. These areas are either close to joints or key load-bearing regions, enabling efficient motion signal collection. The selection and placement of sensors must precisely align with the spatial hierarchy of human biomechanics and ergonomic principles, ensuring comfortable wearability, ease of use, and minimal signal interference99. Table 1 lists biomechanical features and common motion patterns in daily life. Distinct motion patterns exhibit relatively prominent biomechanical features and provide essential references for feature selection and motion model construction. This sensor layer design adheres to the spatial hierarchy of human biomechanics and enables efficient capture of intent-predictive signals: at the body level, it tracks movement baselines and center of mass shifts; at the joint level, it analyzes multi-degree-of-freedom motion correlations; and at the muscle level, it reveals the physiological origin of neural drive. This layered, coordinated mechanism realizes full-spectrum signal coverage, from macro-level situational awareness and mechanical dynamics coupling to micro-level neural activation, thus establishing a comprehensive biomechanical feature mapping framework for HMIP.

a Wearable wireless electroencephalography (EEG) earbuds, reprinted/adapted with permission from ref. 172. b Multi-channel reflectance photoplethysmography (PPG) watch, reprinted/adapted with permission from ref. 160. c Tactile finger ring for finger flexion sensing, reprinted/adapted with permission from ref. 51. d Smart textile glove, reprinted/adapted with permission from ref. 104. e Wireless stretchable array electromyography (EMG) sensor, reprinted/adapted with permission from ref. 275. f Smart shoe for foot pressure sensing, reprinted/adapted with permission from ref. 161. g Wearable exoskeleton, reprinted/adapted with permission from ref. 276. h Smart sports armband, reprinted/adapted with permission from ref. 97. i Wearable 12-lead electrocardiography (ECG) collector, reprinted/adapted with permission from ref. 8. j Multi-electrode NeuroLife EMG sleeve, reprinted/adapted with permission from ref. 277. k Multimodal sweat sensor, reprinted/adapted with permission from ref. 278.

Sensors are the core of intelligent wearable systems, providing multidimensional support for motion intent prediction by continuously collecting physiological, motion, and environmental data in real time. As shown in Table 2, sensors can be roughly classified based on their working principles into inertial sensors, mechanical sensors, bioelectrical sensors, acoustic sensors, optical sensors, temperature sensors, and chemical sensors. Each category utilizes distinct physical, biological, or chemical properties to capture biomechanical data from different parts of the human body. Therefore, sensors need to be worn on appropriate body locations to fully leverage the advantages of each sensing component and achieve optimal data acquisition performance.

In the sensor layer design of wearable systems, inertial sensors (Fig. 6g), mechanical sensors (Fig. 6c), and EMG sensors (Fig. 6j), which correspond to the core biomechanical features, have become the most commonly used sensor types in motion monitoring. Their widespread application is due to their high sensitivity, real-time capability, and versatility in various scenarios, providing reliable support for motion intent prediction.

Body acceleration signal capture

To monitor the body’s center of mass stability, most studies place triaxial accelerometers on the waist to measure body acceleration100,101. This location is considered highly representative of the body’s center of gravity. The rapid development of micro-electromechanical systems (MEMS) has enabled the extensive application of inertial sensors in wearable devices102. Among them, the commonly used inertial measurement unit (IMU) is a compact electronic device that includes triaxial accelerometers, gyroscopes, and gravity sensors to measure body acceleration, limb angular acceleration, and joint angle variations. By fusing data from these components, IMUs can estimate 2D or 3D linear acceleration and angular velocity relative to a global reference frame103. This greatly contributes to identifying various complex movements of different parts of the human body. Compared with traditional inertial sensing systems such as early mechanical gyroscopes, IMUs significantly improve portability and cost-effectiveness. Their flexible packaging design and wireless feature allow the device to be continuously worn on human joints, greatly enhancing ease of use compared to traditional systems that require fixed installation and frequent calibration. In addition, the vibration reliability design of current MEMS102 helps suppress motion artifacts, providing a practical solution with high reliability and low interference for HMIP.
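The idea of fusing accelerometer and gyroscope data can be illustrated with a complementary filter, one common and lightweight fusion scheme. This is a sketch under stated assumptions rather than the fusion method of any cited device: it estimates a single pitch angle, treats the accelerometer as a pure gravity reference (valid only when the sensor is not accelerating strongly), and uses a hypothetical blending factor `alpha`, sampling interval, and synthetic data.

```python
import math

def complementary_filter(acc_xz, gyro_y, dt, alpha=0.98, angle0=0.0):
    """Estimate pitch (degrees) by blending integrated gyroscope rate
    (smooth but drifting) with the accelerometer tilt angle (noisy but
    drift-free). Returns the angle estimate at every sample."""
    angle = angle0
    history = []
    for (ax, az), rate in zip(acc_xz, gyro_y):
        acc_angle = math.degrees(math.atan2(ax, az))  # tilt from gravity direction
        angle = alpha * (angle + rate * dt) + (1.0 - alpha) * acc_angle
        history.append(angle)
    return history

G = 9.81                     # gravitational acceleration, m/s^2
TILT = math.radians(30.0)    # true static tilt of the synthetic sensor
N = 500                      # 5 s of data at an assumed 100 Hz

# Static sensor tilted by 30 degrees; the gyroscope reports only a
# constant 0.5 deg/s bias, which pure integration would turn into drift.
acc = [(G * math.sin(TILT), G * math.cos(TILT))] * N
gyro = [0.5] * N

pitch = complementary_filter(acc, gyro, dt=0.01)
final_pitch = pitch[-1]
print(round(final_pitch, 2))
```

With these values the estimate settles close to the true 30 degree tilt even though the gyroscope bias alone would accumulate 2.5 degrees of drift over the same 5 s. Full 3D orientation generally requires a richer estimator (for example a Kalman or quaternion-based filter), which this sketch does not attempt.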

Researchers have conducted experiments with IMU sensors worn on the torso, with tracking system units such as GPS and LPS68 being the most commonly used. These sensors are often used in conjunction with accelerometers to calibrate motion data. Moreover, to capture nuanced transitions in motion intent, researchers frequently place triaxial accelerometers on the limbs (Fig. 6h) to provide biomechanical precursors for changes in movement direction74,97. Inertial sensors are typically placed on the joints of the limbs and the waist (Fig. 6h) to monitor limb movement trajectories and acceleration information, suitable for full-body dynamic analysis. This facilitates the rapid identification of complex, multi-segmental body movements and accelerates the construction of mathematical models for predicting subsequent motion intent.

Joint angle signal capture

At the local level, HMIP requires biomechanical analysis of joint angles and angular velocities. Joint motion is highly complex due to multiple degrees of freedom in major joints, making accurate motion capture and analysis more challenging103. To obtain precise joint mechanics data, flexible strain sensors are often affixed at joint flexion points to monitor micro-strains on the skin or ligaments through resistance variation. In gait prediction studies, flexible strain sensors are attached to the knee joint and combined with micro-gyroscopes to capture gait phase transitions caused by sudden angular velocity changes. Additionally, pressure sensors (Fig. 6f) can be embedded in insoles to detect plantar pressure distribution via piezoelectric or capacitive changes, thus analyzing ankle movement and predicting specific gait intent. In studies of fine joint movements, such as hand gestures, flexible strain sensors are commonly integrated into wearable fabrics like smart gloves104 (Fig. 6d) to monitor subtle finger joint angle variations in real time.
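The resistance-based strain readout mentioned above can be written compactly. The first relation is the standard gauge-factor definition linking relative resistance change to strain $\varepsilon$. The second, a linear map from relative resistance change to joint angle $\theta$, is only an illustrative assumption: real flexible strain sensors are often nonlinear and hysteretic, and $\theta_0$ and $k$ are hypothetical constants that would have to be obtained by calibration against a reference measurement.

```latex
\frac{\Delta R}{R_0} = GF \cdot \varepsilon,
\qquad
\theta \approx \theta_0 + k \, \frac{\Delta R}{R_0}
```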

Mechanical sensors are mostly placed at joints (Fig. 6d) and under the feet (Fig. 6f) to accurately capture skin deformation and contact force distribution, used for limb motion and gait analysis. Early mechanical sensors often used traditional metal or semiconductor materials, but limitations such as large thickness and low extensibility have driven the development of flexible and stretchable polymer composites as new alternative materials105. Currently, breakthroughs in flexible electronic materials allow ultrathin strain sensors to be directly attached to skin folds or embedded in clothing and insoles106, converting micro-deformations into electrical signals through piezoresistive/piezo-capacitive effects. Among them, sensor units based on novel materials such as graphene107, MXenes108, and hydrogels70 achieve an excellent combination of high stretchability and high sensitivity, accurately capturing strain gradients of the epidermis during joint flexion and the trajectory of plantar pressure center migration. On the other hand, the development of high-sensitivity pressure sensors is also significant for ultra-sensitive touch technology and the development of high-precision E-skins, especially in the micro-pressure range below 100 Pa109. The high sensitivity (0.1 Pa)109 and fast response (50 μs)109 of this type of sensor provide precise mechanical signals and valuable data processing time for HMIP. Moreover, the biocompatible substrates of new mechanical sensors greatly reduce skin contact pressure, providing high-precision and comfortable wearable devices for long-term imperceptible monitoring.
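The plantar pressure center mentioned above is commonly computed as the pressure-weighted mean of the sensor positions. The sketch below assumes a hypothetical insole with four sensors at made-up coordinates (in meters, heel at the origin) and made-up pressure readings; the function name `center_of_pressure` is likewise illustrative.

```python
def center_of_pressure(positions, pressures):
    """Pressure-weighted mean of sensor positions.
    Returns (x, y), or None when no load is detected."""
    total = sum(pressures)
    if total <= 0:
        return None
    x = sum(p * px for p, (px, _) in zip(pressures, positions)) / total
    y = sum(p * py for p, (_, py) in zip(pressures, positions)) / total
    return x, y

# Hypothetical insole layout: heel, midfoot, medial and lateral forefoot.
SENSORS = [(0.00, 0.00), (0.00, 0.10), (-0.02, 0.20), (0.02, 0.20)]

# Made-up readings (kPa) at two instants of the stance phase.
heel_strike = center_of_pressure(SENSORS, [100.0, 10.0, 0.0, 0.0])
toe_off = center_of_pressure(SENSORS, [0.0, 10.0, 50.0, 50.0])
print(heel_strike, toe_off)
```

Tracking this point over successive frames gives the heel-to-toe migration trajectory; the forward shift between the two instants in the example is the kind of cue a gait-phase classifier could use.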

Muscle signal capture

At the tissue level, to analyze the dynamic coupling between physiological signals such as electromyographic activity and biomechanical parameters, sensors focus on monitoring neuromuscular connections and muscle fiber activity. As the primary mechanical source driving joint motion, muscle groups responsible for force generation in the limbs (e.g., biceps brachii, quadriceps, and gastrocnemius) are commonly used sites for electromyographic monitoring110,111,112. Researchers employ sEMG with high-density electrodes placed over target muscle bellies to capture anticipatory mechanical signals indicated by the slope of EMG pre-activation at high sampling rates93,94. These signals serve as sensitive indicators for motion prediction and motion intent forecasting. Furthermore, HMIP systems use bioelectrical signals such as EMG to accurately quantify the transformation from neural drive to biomechanical output, thereby analyzing biomechanical boundaries of human activity and predicting forthcoming motion intent.

EMG electrodes are often attached to target muscle groups on the limbs and trunk (Fig. 6e) to detect bioelectrical signals generated by muscle activity through neuromuscular interfaces, identifying fiber type composition and muscle fiber cross-sectional area113 and directly reflecting the timing and intensity of muscle activation. Among EMG techniques, surface electromyography (sEMG) has become the mainstream solution for measuring muscle activity due to its non-invasive nature114,115. In sEMG, surface electrodes are placed directly on the skin covering muscles or muscle groups to non-invasively detect potentials generated by the underlying muscle fibers. In recent years, advances in material manufacturing processes have reduced electrode thickness (8–100 μm)92, making sEMG more portable and accurate, and easier to apply to the human body. The potentials measured near the electrodes can be used to describe the level of muscle activation, as muscle cell activation leads to muscle fiber contraction and the generation of mechanical force89. This forms the EMG-force relationship, which clearly illustrates the transformation from neural intent to EMG signals to mechanical actions and highlights the vital role of EMG as a bridge in intent prediction. In addition, by detecting temporal features such as muscle pre-activation and contraction duration, short-term muscle activity can be estimated in advance, enabling more accurate motion intent prediction.

Besides EMG, ultrasound sensors offer one of the few means to directly observe muscle structure and biomechanics. Ultrasound sensors are typically worn on key muscle groups in the limbs and trunk. These sensors monitor dynamic muscle signals such as muscle thickness, muscle bundle movement, and tendon displacement by emitting ultrasound pulses and analyzing the echoes. Based on this, dynamic changes in muscle strength can be analyzed116,117. Furthermore, since muscle morphology often precedes and constrains joint movement, ultrasound signals can serve as a key intermediate variable between the muscle and joint levels for decoding movement intent, as validated in joint angle118,119,120 and torque estimation120,121,122.

Multimodal signal capture

Although single-modal sensors perform well in specific mechanical dimensions, they have inherent limitations123. Inertial sensors can accurately capture dynamic parameters such as body acceleration and angular velocity, but in gait monitoring, robustness to changes in speed and step frequency is particularly important124. Inertial sensors also have difficulty distinguishing between the stance and swing phases of the gait cycle, and they lack sensitivity to pathological gait behaviors such as those seen in Parkinson’s disease. Mechanical sensors can sensitively monitor stress and strain on the body, but commonly used patch-type sensors can only acquire single-point deformation data, making it difficult to analyze global motion patterns involving multi-joint coordination. EMG can decode neural drive in advance, but signal quality can be affected by sweat98 and crosstalk from adjacent muscle groups125, leading to mapping errors between EMG signals and mechanical actions.

Therefore, integrating complementary data from different sensors is extremely important for improving prediction accuracy, which makes multimodal fusion technology highly valued in HMIP research123,126,127,128. The significance of multimodal sensor fusion lies in harnessing the strengths of each type by integrating inertial, mechanical, and bioelectrical sensing data to build a comprehensive system for analyzing human biomechanical features. Its core aim is to overcome the limitations of single sensors in terms of spatial-temporal resolution, mechanical dimension coverage, and anti-interference capabilities. The three core sensors form three heterogeneous data types: dynamics, deformation mechanics, and electrophysiology. Integrating these data enables the construction of a complementary verification chain for biomechanical features. Table 3 shows the applications of common multimodal sensors and their recognition accuracy.

In macroscopic motion monitoring, common multimodal sensors combine waist or leg IMUs with plantar stress-strain sensors to eliminate blind spots of single-point monitoring. This can capture sudden changes in body acceleration while monitoring shifts in the plantar pressure center, allowing accurate discrimination of body center of mass displacement and gait phases through a weighted hierarchical decision model127. In the studies of Chen et al.129 and Papapicco et al.124, the recognition accuracy of steady-state stride length exceeded 98.0%. In addition, researchers have combined pressure sensor and EMG data to analyze neuro-induced changes in gait intention, detecting delays as low as −1002 ± 603 ms130. This has significant implications for fall monitoring and the control of robot-powered lower-limb prostheses.

In fine motion monitoring, current research favors using EMG and IMU as data sources for multimodal fusion. Researchers typically record biomechanical signals from EMG and joint IMUs, analyze the slope of EMG pre-activation, and record the precision of joint angle changes, finally integrating them at the feature and decision levels. Motion trajectories of corresponding limbs are quantified through a sensor fusion-based hierarchical planner131, improving the recognition accuracy of motion intent in common fine movements such as grasping or knee flexion. In the study by Song et al.98, hand motion recognition accuracy increased by more than 11.4% compared with a single sensor. This type of research is often used in hand rehabilitation training98, gesture recognition systems132, and prosthetic control131,133.

The above sensors form the sensing foundation of HMIP from three dimensions: motion dynamics, mechanical deformation, and neuromuscular activation. They provide a complementary data chain for motion intent decoding and establish a technical foundation for the “perception-prediction-interaction” closed-loop.

Algorithm of human motion intent prediction

Core framework

As the high-level controller in the hierarchical control strategies of assistive devices such as intelligent prostheses and exoskeletons, and of systems for sports training and medical rehabilitation, the HMIP system is responsible for perceiving the user’s motion intent based on core biomechanical signals. Figure 7 illustrates the core framework, which progresses through five main stages: data collection and preprocessing, motion feature extraction, user generalization and adaptation, mapping to actions, and real-time feedback.

a Signal collection and preprocessing of corresponding biomechanical features through wearable devices, reprinted/adapted with permission from ref. 279; b Data segmentation and feature extraction of biomechanical signals through algorithms, reprinted/adapted with permission from ref. 280; c Data dimensionality reduction and user generalization, reprinted/adapted with permission from ref. 281; d Mapping to specific actions for motion intent recognition and prediction, reprinted/adapted with permission from ref. 282; e Real-time feedback to corresponding assistive devices, reprinted/adapted with permission from ref. 51.

In the initial phase, multimodal wearable sensors such as IMUs, EMG arrays, smart textiles, and insoles capture critical biomechanical features such as acceleration, joint angles, and muscle activity. Preprocessing techniques like filtering and normalization are then applied to remove noise and stabilize the signals. Next, motion feature extraction transforms raw signals into discriminative representations. Traditional machine learning methods rely on handcrafted feature engineering, while deep learning approaches learn spatiotemporal correlations automatically through neural networks, enabling greater robustness across varying motion scenarios. To ensure adaptability across different users, the system incorporates user generalization and adaptation strategies, combining user-independent datasets with transfer learning and adaptive tuning to balance cross-user generalization with personalized accuracy. These extracted features are then mapped to specific actions, either discrete categories such as hand gestures or continuous motion trajectories such as joint angle curves, ensuring that biomechanical signals are translated into interpretable motion commands. Finally, the predicted intent is delivered to assistive devices through real-time feedback, creating a closed-loop human–machine interaction that is both intuitive and responsive. This framework highlights the transition from raw biomechanical sensing to actionable intent, underscoring the importance of multimodal integration, adaptive learning, and feedback mechanisms for reliable HMIP applications.
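The preprocessing and segmentation steps in this framework can be sketched as follows. This is a minimal illustration rather than the pipeline of any cited work: the helper names (`moving_average`, `zscore`, `sliding_windows`), the window size, and the step are assumptions, and a trailing moving average stands in for the band-pass or notch filters normally applied to EMG and IMU data.

```python
from statistics import mean, pstdev

def moving_average(signal, k=3):
    """Smooth a 1-D signal with a trailing moving average of width k."""
    out = []
    for i in range(len(signal)):
        chunk = signal[max(0, i - k + 1): i + 1]
        out.append(sum(chunk) / len(chunk))
    return out

def zscore(signal):
    """Normalize a signal to zero mean and unit variance."""
    mu = mean(signal)
    sigma = pstdev(signal)
    if sigma == 0:
        return [0.0 for _ in signal]  # constant signal: nothing to scale
    return [(x - mu) / sigma for x in signal]

def sliding_windows(signal, size, step):
    """Cut a signal into overlapping fixed-length analysis windows."""
    return [signal[i:i + size] for i in range(0, len(signal) - size + 1, step)]

# Hypothetical raw samples from a single sensor channel.
raw = [0.0, 0.2, 0.1, 0.9, 1.1, 0.8, 0.2, 0.1]
clean = zscore(moving_average(raw, k=3))
windows = sliding_windows(clean, size=4, step=2)
```

Each window would then be passed to feature extraction; overlapping windows trade extra computation for more frequent intent updates.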

Application and comparison of common algorithms

The operation process of the entire core framework requires balancing computational efficiency and prediction accuracy, particularly considering model complexity during the deployment of sensors and other devices. In response to this, current algorithms are mainly divided into two technical types: traditional machine learning methods and deep learning methods. The former is based on manually designed feature engineering combined with classifiers for analysis. The latter automatically learns spatiotemporal correlation features in signals through end-to-end neural network architectures. In recent years, with breakthroughs in deep learning technology in analyzing complex biomechanical signals, HMIP algorithms have gradually shifted from traditional methods relying on manual feature extraction to data-driven end-to-end models.

Table 4 illustrates the applications and prediction accuracies of common HMIP algorithms. Traditional machine learning methods demonstrate high efficiency and considerable accuracy during prediction but are limited to analyzing relatively simple motion scenarios due to the completeness constraints of manual feature engineering. In contrast, deep learning approaches exhibit significantly enhanced capabilities in parsing complex motions. However, the data dependency and computational costs of deep learning restrict its application in resource-constrained settings. Representative algorithms such as LSTM and TCN typically achieve real-time prediction latency below 150–250 ms, while lightweight ML models (e.g., LDA, SVM) often operate below 50 ms with low computational demand134. Practical selection requires comprehensive consideration of application scenarios. For resource-constrained embedded devices (e.g., exoskeletons), unimodal sensors with lightweight CNNs or LDA can be prioritized. Conversely, for systems demanding stringent precision (e.g., rehabilitation robots), multimodal sensors combined with deep learning offer greater advantages.

Machine learning for simple signal processing

The core value of traditional machine learning in HMIP lies in the precise extraction of biomechanical signals highly correlated with motion intent through manual feature design, and the construction of highly interpretable predictive models through classifiers. Machine learning is typically used to process low-dimensional, low-modality, or few-channel simple signals. These signals are usually collected under constrained action conditions and have a relatively high signal-to-noise ratio.

Manual feature extraction refers to the use of manually designed methods and feature extraction algorithms based on human biomechanical features to extract key biomechanical indicators from raw signals, forming a meaningful feature set for subsequent analysis tasks such as classification and regression. Table 5 shows common manual feature extraction methods in HMIP systems, which usually correspond to different feature types.

In the research of HMIP, typical features include time-domain features, frequency-domain features, and spatial features. In time-domain feature extraction, muscle activation frequency is calculated using EMG zero-crossing rate to analyze peak values and duration changes during muscle contraction for real-time gesture recognition and prediction135; joint angular velocity variance is used to quantify gait stability. In frequency-domain feature extraction, wavelet transform’s local analysis capability is used to amplify the instantaneous changes in gait information for more stable exploration of gait phase transitions136,137; fast Fourier transform is used to analyze the frequency spectrum energy distribution of gait cycles to distinguish motion patterns such as walking, running, and jumping. In spatial feature extraction, principal component analysis is used for dimensionality reduction of multi-channel sEMG to extract principal components of synergistic activation among different EMG signals, improving prediction accuracy138; common spatial pattern is used to extract discriminative motion intent features from multi-channel EEG139, such as distinguishing between finger and arm movements, enhancing classification accuracy.
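The time-domain features mentioned above are simple to compute per analysis window. The sketch below is illustrative only: the function names and the choice to combine zero-crossing rate, root mean square (RMS), and variance into one vector are assumptions, and the inputs are assumed to be already filtered and mean-centered.

```python
import math

def zero_crossing_rate(window, threshold=0.0):
    """Fraction of adjacent sample pairs that change sign.

    A pair counts only if its amplitude difference exceeds `threshold`,
    which suppresses crossings caused by low-level noise.
    """
    if len(window) < 2:
        return 0.0
    crossings = 0
    for a, b in zip(window, window[1:]):
        if a * b < 0 and abs(a - b) > threshold:
            crossings += 1
    return crossings / (len(window) - 1)

def rms(window):
    """Root mean square amplitude, a common proxy for activation intensity."""
    return math.sqrt(sum(x * x for x in window) / len(window))

def variance(window):
    """Population variance, e.g. of joint angular velocity within a window."""
    mu = sum(window) / len(window)
    return sum((x - mu) ** 2 for x in window) / len(window)

def feature_vector(emg_window, gyro_window):
    """Compress two raw windows into a three-element feature vector."""
    return [zero_crossing_rate(emg_window), rms(emg_window), variance(gyro_window)]

features = feature_vector([1.0, -1.0, 1.0, -1.0], [1.0, 3.0])
```

A vector like this is what a lightweight classifier such as LDA or SVM would receive in place of the raw samples.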

These feature extraction methods compress high-dimensional biomechanical signals into low-dimensional feature vectors, greatly reducing computational load and better meeting the practical constraints of smart wearable devices in real-life applications.

In model construction, classifiers play a crucial role. They can perform feature construction and feature selection from manually extracted features obtained from multiple sensors, thereby generating additional features for classification decisions, resulting in more accurate motion pattern recognition.

Table 6 presents common machine learning algorithms and classifiers in HMIP systems. It can be seen that machine learning has unique application value in handling simple tasks and datasets. Compared with other algorithms, machine learning algorithms have advantages in interpretability and real-time performance. On the one hand, biomechanical feature engineering can extract low-dimensional features highly correlated with motion intent from raw signals, directly associated with mechanical thresholds, thus reducing computational load. On this basis, machine learning classifiers can achieve classification accuracy as high as 95–100% in gesture recognition140,141,142. On the other hand, lightweight classifiers (such as LDA, SVM) can avoid long training times caused by high-dimensional feature spaces and achieve low-latency real-time prediction140,142,143, meeting the immediacy requirements of human-computer interaction.

However, traditional machine learning methods rely heavily on manual feature extraction and classifier design, requiring a large amount of diverse training data for the desired motion patterns so that classifiers perform well in real-world scenarios. Using multiple sensor data can improve classification accuracy but also increases the wearing burden on subjects, limiting its application in daily life. Additionally, using different datasets can affect classification results. These shortcomings make machine learning insufficiently adaptive to cross-individual and cross-scenario data in practical applications, and its generalization ability for complex motion patterns is limited. Consequently, machine learning is better suited to processing single signals to achieve superior results.

Deep learning for complex signal processing

Compared to traditional machine learning, which relies heavily on handcrafted features, deep learning leverages end-to-end architectures to directly learn hierarchical feature representations from raw biomechanical signals. Its key strength lies in the ability to autonomously capture latent spatiotemporal correlations across multiple sensor modalities, thus effectively modeling complex nonlinear dynamics of human motion intent. With the increasing complexity of wearable sensor networks, deep learning demonstrates superior adaptability and generalization, making it particularly suitable for high-dimensional, multimodal, and dynamically changing complex signals. Such signals involve more degrees of freedom and richer spatiotemporal dependencies, and they often arise under conditions with more complex noise or less constrained movements.

Table 7 presents common deep learning algorithms and their typical applications in HMIP systems. Deep learning has advantages in handling complex tasks and multimodal datasets. For complex tasks, CNN can automatically extract local spatiotemporal features and is widely used in pattern recognition tasks involving multidimensional sensor data, such as posture and gait recognition52,144,145. RNN and LSTM can capture the temporal dependencies of biomechanical signals, effectively modeling the evolution of motion intent146,147,148. Additionally, with the growing need for multimodal fusion, architectures such as CNN-LSTM138,149, CNN-GNN145, BiLSTM96,150, and Transformer151,152 enable joint modeling of cross-modal features, improving the integration of multi-source biomechanical signals. Moreover, emerging lightweight deep learning models such as TCN have demonstrated low computational latency in real-time prediction (up to 200 ms in advance153), gradually compensating for the heavy computational load of traditional deep models.

As shown in Table 8, multimodal fusion technology can effectively improve the accuracy of prediction results. Multimodal fusion is a key driver of deep learning’s advantage over traditional machine learning123,126,127,128. Multimodal fusion models generally exhibit 5–15% accuracy improvement at the cost of increased computation, whereas lightweight fusion strategies maintain real-time performance suitable for embedded HMIP systems. Multimodal fusion is commonly employed in deep learning models, though less frequently applied to machine learning models. Moreover, compared to the latter, deep learning models demonstrate a more pronounced improvement in predictive accuracy.

During the multimodal fusion process, data from different sensors may have issues such as time synchronization, scale differences, and noise. Therefore, it is necessary to employ appropriate data fusion methods to mitigate the heterogeneity gaps between different modalities. As illustrated in Fig. 8, the commonly employed multimodal data fusion methods within HMIP currently encompass data-level fusion, feature-level fusion, and decision-level fusion154. Table 9 compares the advantages, disadvantages, and application scenarios of the three fusion methods.

The three-layer data fusion model.

Data-level fusion is the lowest-level fusion method, which directly integrates multimodal information at the raw sensor data level. For example, the acceleration data collected by IMU is synchronously spliced with the raw EMG signal155 to form a unified time series input. This method can completely retain the temporal features of biomechanical signals and is suitable for HMIP systems with a small number of sensors and high data synchronization accuracy, such as IMU and EMG signals jointly recognizing gestures.
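A minimal sketch of data-level fusion is shown below, assuming the two streams carry timestamps on a shared clock. The EMG stream is linearly interpolated onto the IMU timestamps and the two values are spliced into one row per time step. The function names, integer millisecond timestamps, and sample values are hypothetical, and a real system would low-pass filter before resampling.

```python
def interpolate(times, values, t):
    """Linearly interpolate a sampled signal at time t (times increasing)."""
    if t <= times[0]:
        return values[0]
    if t >= times[-1]:
        return values[-1]
    for i in range(1, len(times)):
        if t <= times[i]:
            t0, t1 = times[i - 1], times[i]
            w = (t - t0) / (t1 - t0)
            return values[i - 1] + w * (values[i] - values[i - 1])

def data_level_fusion(imu_t, imu_acc, emg_t, emg):
    """Return one [acceleration, emg] row per IMU timestamp."""
    return [[a, interpolate(emg_t, emg, t)] for t, a in zip(imu_t, imu_acc)]

# Hypothetical streams: IMU every 10 ms, EMG every 4 ms.
imu_t = [0, 10, 20]
imu_acc = [0.1, 0.3, 0.2]
emg_t = [0, 4, 8, 12, 16, 20]
emg = [0.0, 4.0, 8.0, 12.0, 16.0, 20.0]
fused = data_level_fusion(imu_t, imu_acc, emg_t, emg)
```

The resulting rows form the unified time series input; any synchronization error between the two clocks propagates directly into the fused data, which is why this level of fusion needs accurate time alignment.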

Feature-level fusion first independently extracts key features from each modal signal and then integrates them, which is the current mainstream method. For example, CNN is used to extract spatial features from IMU, and LSTM is used to extract temporal features from EMG. Then multimodal features are spliced or fused through weighting155 or attention mechanisms156, finally forming a unified feature vector as input to the prediction model. This method retains core biomechanical features while reducing the data dimension and improves the fusion effect through attention mechanisms or weighting strategies, which is suitable for tasks such as motion intent classification and rehabilitation motion recognition.
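The weighting step of feature-level fusion can be illustrated with a small sketch. Here each modality's feature vector is scaled by a softmax weight and the results are concatenated. This is a simplified stand-in for an attention mechanism: in a trained model the per-modality scores would be learned, whereas here they are supplied by hand, and all names and values are assumptions.

```python
import math

def softmax(scores):
    """Convert raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def weighted_feature_fusion(features, scores):
    """Scale each modality's features by its weight, then concatenate."""
    weights = softmax(scores)
    fused = []
    for w, vec in zip(weights, features):
        fused.extend(w * x for x in vec)
    return fused, weights

# Hypothetical per-modality features (e.g. outputs of a CNN and an LSTM).
imu_features = [0.2, 0.4]
emg_features = [1.0, 0.5, 0.1]
fused, weights = weighted_feature_fusion([imu_features, emg_features], scores=[0.0, 0.0])
```

With equal scores both modalities contribute equally; raising one score shifts weight toward that modality, which is the behavior an attention module learns from data.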

Decision-level fusion trains independent models for each sensor channel, outputs results respectively, and integrates results at the model output layer through methods such as adaptive weighting157 or multimodal adversarial training149,158. Decision-level fusion emphasizes fusion at the model result level and is suitable for systems with large sensor differences or difficult signal synchronization149,157.
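A minimal sketch of decision-level fusion with fixed reliability weights follows. Each modality's model is assumed to output class probabilities, which are averaged with weights proportional to a per-model reliability score. The function name, class labels, probabilities, and reliability values are hypothetical; an adaptive scheme would update the reliabilities online.

```python
def decision_level_fusion(prob_by_model, reliabilities):
    """Fuse per-model class probabilities with normalized reliability weights.

    Returns the index of the winning class and the fused probabilities.
    """
    total = sum(reliabilities)
    weights = [r / total for r in reliabilities]
    n_classes = len(prob_by_model[0])
    fused = [
        sum(w * probs[c] for w, probs in zip(weights, prob_by_model))
        for c in range(n_classes)
    ]
    best = max(range(n_classes), key=lambda c: fused[c])
    return best, fused

labels = ["stand", "walk", "stairs"]
imu_probs = [0.2, 0.5, 0.3]  # hypothetical IMU-only model output
emg_probs = [0.1, 0.3, 0.6]  # hypothetical EMG-only model output
best, fused = decision_level_fusion([imu_probs, emg_probs], reliabilities=[0.6, 0.9])
predicted = labels[best]
```

Because the models only meet at their outputs, the sensors do not need sample-level synchronization, which matches the use case described above.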

Although fusion methods are continuously developing, multimodal fusion still faces a series of challenges, including data heterogeneity among modalities, information redundancy and missing, and computational complexity. To address these challenges, researchers have proposed various solutions. For example, adaptive weighting dynamically adjusts the fusion weights of different modalities to improve fusion flexibility and robustness145,155,157. Attention mechanisms can guide the model to focus on feature areas that contribute more to intent recognition146,156,159. Multimodal adversarial training introduces adversarial loss to optimize the adaptability of the model and enhance compatibility with heterogeneous data149,158. These methods significantly improve the fusion effect and provide effective technical paths for real-time applications of multimodal sensing systems.

Wearable applications and future prospects

Common wearable devices

The core of HMIP lies in the precise capture of multiscale biomechanical features, and the morphological evolution and technological innovation of wearable devices serve as the key enablers for achieving this goal. Based on the wearing form and functional orientation of wearable devices, existing platforms can be categorized into three types: accessory-type devices (Accessories), electronic textiles (E-textiles), and electronic skins (E-skins). Each type has its own advantages, and together they form a multi-level support system from daily applications to high-precision monitoring.

Accessories usually integrate inertial or optical sensors, are easy to wear, and are suitable for daily use, simple motion data collection, and preliminary prediction of motion intent. The most common in daily life is the smartwatch, which can monitor heart rate and blood oxygen level in real time, providing a reference for exercise intensity evaluation (Fig. 9a)160. In upper limb motion prediction, armbands can synchronously capture arm acceleration and EMG signals (Fig. 9b)97. In lower limb motion prediction, smart shoes can predict posture patterns through changes in plantar pressure distribution, expanding gait monitoring capability (Fig. 9c)161.

a Smart photoplethysmography (PPG) watch monitoring physiological signals such as heart rate and blood oxygen, reprinted/adapted with permission from ref. 160. b Myo motion armband capturing acceleration, angular velocity, and electromyography (EMG) signals, reprinted/adapted with permission from ref. 97. c Smart shoe analyzing plantar pressure variations to predict gait posture, reprinted/adapted with permission from ref. 161. d Interactive fiber-based apparel for fabric-integrated motion monitoring, respiration tracking, and energy harvesting, reprinted/adapted with permission from ref. 162. e Smart textile glove detecting joint angles and pressure to estimate complex gestures, reprinted/adapted with permission from ref. 104. f Full-texture dual-tactile (pressure/tension) motion suit for monitoring joint stress-strain relationships, reprinted/adapted with permission from ref. 163. g Spray-on electrode tattoo capturing electrophysiological signals including EMG and electrocardiography (ECG), reprinted/adapted with permission from ref. 164. h Cellulose aerogel electronic skin for motion monitoring, thermotherapy, and temperature control, reprinted/adapted with permission from ref. 165. i Ultrathin self-powered PPG sensor for wearable real-time health monitoring, reprinted/adapted with permission from ref. 166.

In contrast, E-textiles embed flexible sensors into clothing structures, which can capture joint activity and motion patterns more finely and are suitable for the recognition of complex motion states and intent prediction. In daily motion perception, interactive fiber clothing can accurately identify arm movement and simultaneously achieve respiration monitoring and energy harvesting (Fig. 9d)162. In complex gesture prediction involving finger joint motion, smart fabric gloves can acquire key signals such as joint angle and pressure (Fig. 9e)104. In the analysis of professional movements, dual-tactile motion suits can perceive joint stress and strain distribution, providing more comprehensive analytical data for optimized training in competitive sports (Fig. 9f)163.

Under higher precision demands, E-skins have become the frontier form for refined motion monitoring. They usually integrate strain sensors and biosensors, which can monitor EMG signals and subtle motion changes with high accuracy. In the synchronous evaluation of neuromuscular activity and physiological state, spray-on electrode tattoos can capture electrophysiological signals such as EMG and ECG while maintaining sweat permeability and skin compliance (Fig. 9g)164. In subtle motion monitoring, cellulose aerogel E-skins can achieve micro-strain sensing through the breaking and reconnection of microcracks in the sensing layer, and also support motion monitoring, thermotherapy, and temperature control (Fig. 9h)165. In vital signs monitoring, ultra-thin self-powered PPG sensors realize real-time monitoring of signals such as heart rate and blood oxygen, providing a low-power, lightweight, flexible optoelectronic device solution (Fig. 9i)166.

These three types of wearable devices each offer distinct advantages in motion monitoring: Accessories focus on portability and simple data collection, E-textiles emphasize motion analysis, and E-skins are oriented toward high-precision signal perception. Together, they provide platforms and data support at different levels for motion intent prediction.

Application demonstration

Based on the three-level biomechanical feature framework of “body-joint-muscle”, the HMIP system has achieved multi-dimensional technological breakthroughs from data perception and feature extraction to intelligent decision making. At present, modeling of biomechanical signals such as body acceleration, joint angle, joint torque, and muscle activation not only supports high-precision intent decoding but also provides a theoretical basis and engineering leverage for the expansion of application-layer functions. As shown in Fig. 10, this technical system is widely applied in multiple scenarios, including medical rehabilitation, industrial production, competitive sports, virtual reality, and intelligent driving, demonstrating the universality and core value of intelligent wearable systems in the field of HMIP.

a Exoskeleton control based on biological joint torque, using clothing-integrated exoskeleton, reprinted/adapted with permission from ref. 46; b Unpowered knee joint rehabilitation robot based on knee joint angle, velocity, and torque, reprinted/adapted with permission from ref. 167; c Prosthesis with flexible stretchable surface electromyography (sEMG) sensor designed for amputees, reprinted/adapted with permission from ref. 92; d Intelligent upper limb exoskeleton system predicting human strength augmentation intention by collecting real-time muscle activity and providing sensory feedback, reprinted/adapted with permission from ref. 169; e Industrial robot using motion capture to assist and learn from the operator’s work, reprinted/adapted with permission from ref. 39; f Liquid metal tattoo for monitoring motion status, where bioelectric potential signals recorded by skin surface electrodes reflect body status, reprinted/adapted with permission from ref. 164; g Immersive interaction through enhanced tactile perception and feedback ring with multimodal sensing and feedback functions, reprinted/adapted with permission from ref. 51; h Wearable kinesthetic haptic device for enhancing tactile experience or promoting muscle training rehabilitation, reprinted/adapted with permission from ref. 63; i E-skin achieving holistic tactile perception by integrating transient and sustained potential artificial neurons, reprinted/adapted with permission from ref. 171; j Prediction of driver behavior through non-invasive wearable devices that interact with in-vehicle systems for planning, reprinted/adapted with permission from ref. 54.

In medical rehabilitation, biomechanical modeling at the joint and muscle levels is mainly relied upon to realize personalized responses for assistance and rehabilitation. For patients with walking difficulties, clothing-integrated exoskeletons take joint torque as the control core to achieve on-demand compensation of lower limb propulsion (Fig. 10a)46. For joint rehabilitation training, rehabilitation robots drive training rhythms with three parameters (joint angle, speed, and torque), meeting the needs of progressive rehabilitation therapy (Fig. 10b)167. For amputees, intelligent prostheses can achieve closed-loop coupling of EMG signals and sensory feedback, improving the stability and refinement of prosthetic control (Fig. 10c)92.

In industrial applications, body acceleration and EMG signals play important roles in ensuring safety and assisting production, respectively. Wearable IMU systems can collect human acceleration features and, by recognizing gait fluctuations and fatigue states, assist in detecting dangerous behaviors during work (recall rate as high as 93.0%168). Intelligent upper limb exoskeleton systems collect real-time muscle activity to predict the human intent for strength enhancement and provide force feedback, greatly reducing the upper limb burden (Fig. 10d)169. Extended further to human-machine collaborative production sites, industrial robots can recognize workers’ operational intent based on EMG signals, assisting with and sharing heavy, repetitive, or high-risk tasks (Fig. 10e)39.

In the field of competitive sports, bioelectrical sensors are mainly used to obtain athletes’ EMG and ECG signals, supplemented by lightweight IMUs to capture acceleration and joint dynamic features. For seamless wearability, lightweight E-skins can continuously acquire bioelectric potentials on the skin surface, providing a non-intrusive signal interface for electromyographic signal collection (Fig. 10f)164. Based on muscle activation features, compensatory behavior of non-force-exerting muscle groups and abnormal signals during exercise can be recognized intelligently. Supplemented by acceleration and joint dynamic features, early warning of motion deviations can be achieved, optimizing performance and reducing injury risk170.

In the field of virtual reality, immersive interactive devices are constantly emerging. Enhanced haptic rings can realize precise grasping interaction with virtual objects based on finger joint angles and muscle control signals (Fig. 10g)51. At the same time, integrated wearable kinesthetic haptic devices can also enhance interactive immersion (Fig. 10h)63. In addition, bionic E-skins achieve holistic tactile perception by integrating transient and sustained potential artificial neurons, providing a more realistic mechanical feedback experience for virtual environments (Fig. 10i)171.

In intelligent driving scenarios, noninvasive bioelectrical wearable devices are usually used to realize intelligent prediction of driving behavior (Fig. 10j)54. A control platform based on EEG signals can achieve path selection and user preference reinforcement through classification, thereby assisting driving decision making172. E-skin based on electrooculography (EOG) can evaluate fatigue levels in real time through eye tracking and predict emergency braking intent, thereby enhancing proactive driving safety173. In addition, monitoring biomechanical signals of joints and muscles can realize dynamic evaluation of driving posture and reaction delay, supporting the construction of safe assisted driving systems.

It can be seen that biomechanical signals at the body-joint-muscle levels provide solid support for accurate intention recognition of HMIP systems in various scenarios. These practical cases not only verify the guiding role of biomechanical features in intelligent wearable systems but also accelerate the evolution of HMIP technology from laboratory prototypes to actual large-scale applications.

Current technical challenges

Despite the significant progress of intelligent wearable systems in the HMIP field, they still face multiple challenges in practical applications, especially in data processing, material preparation, and privacy security. These challenges may lead to the failure of biomechanical signal interpretation and restrict their universality and practicality across different scenarios.

(1) In terms of data processing, current HMIP systems generally rely on deep learning algorithms to analyze multimodal mechanical data such as body acceleration, joint angle variation, and muscle electrical activation signals. Although these models exhibit high prediction accuracy under experimental conditions, their cross-individual and cross-task transferability is insufficient. Meanwhile, in realistic noise-rich conditions, sensor electrodes are affected by motion artifacts, relative displacement among multimodal sensors, and changes in bioelectrical impedance, which makes models trained in controlled laboratory settings difficult to transfer directly. In practical deployment, these disturbances are more likely to cause IMU drift or saturation, as well as EMG baseline drift, crosstalk, and intermittent signal dropout. For example, muscle activation features are mainly acquired through sEMG, but this signal is easily affected by electrode displacement and sweat interference during actual wearing, leading to signal distortion or loss, which severely impacts the accuracy of muscle intention extraction174. In addition, the stable acquisition of body and joint motion signals also depends on high-precision IMU and stress-strain sensors, and their data usually need to be uploaded to cloud servers for processing. The current health data cloud infrastructure is inadequate, causing data transmission delays and insufficient real-time feedback, which affects the response efficiency of intention prediction in complex scenarios175,176.

(2) In terms of material preparation, flexible sensors for joint strain sensing and epidermal electromyographic signals need to possess excellent wearing comfort and stability. However, flexible sensor materials often suffer from both mechanical performance degradation and reduced signal stability, especially under long-term wearing or complex dynamic environments177,178. For example, graphene electrodes have excellent conductivity, but they are prone to cracking under high-frequency bending or prolonged stretching, affecting the acquisition of muscle activation signals179. Flexible pressure sensors used for joint motion monitoring are easily affected by motion artifacts, leading to sensitivity reduction and feature extraction distortion180. At the same time, the power supply system is limited by energy storage density, making it difficult for multi-module sensors to work collaboratively for extended periods181,182, especially in tasks involving long-term monitoring of muscle strength, where device endurance becomes more problematic.

(3) In terms of privacy security, HMIP systems usually involve the collection and transmission of large-scale personal physiological data, especially high-frequency dynamic data closely related to muscle and joint movements, such as continuously monitored sEMG or acceleration signals. These data may reflect users’ living habits and health status and are at risk of being maliciously obtained or tampered with176,183,184. Since wearable devices generally use wireless transmission and cloud storage, the distributed nature of mobile connections also exacerbates privacy exposure during data transmission183.
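The signal disturbances named in item (1), namely EMG baseline drift, intermittent dropout, and IMU saturation, can be reproduced synthetically to probe how a laboratory-trained model degrades. The following is a minimal Python sketch under stated assumptions, not a method taken from the cited works: the function names `perturb_emg` and `saturate_imu`, the sinusoidal drift model, and every default parameter value are hypothetical choices made for illustration only.

```python
import math
import random


def perturb_emg(signal, fs, drift_amp=0.2, drift_hz=0.5,
                dropout_prob=0.01, dropout_len=20, seed=0):
    """Add slow baseline drift and intermittent dropout to an sEMG trace.

    signal: list of samples; fs: sampling rate in Hz.
    All default values are illustrative assumptions, not measured quantities.
    """
    rng = random.Random(seed)  # fixed seed keeps the perturbation reproducible
    # Baseline drift, modelled here (as an assumption) by a slow sinusoid.
    out = [x + drift_amp * math.sin(2 * math.pi * drift_hz * i / fs)
           for i, x in enumerate(signal)]
    # Intermittent dropout: zero a short run of samples at random onsets.
    i = 0
    while i < len(out):
        if rng.random() < dropout_prob:
            end = min(i + dropout_len, len(out))
            for j in range(i, end):
                out[j] = 0.0
            i = end
        else:
            i += 1
    return out


def saturate_imu(signal, full_scale=16.0):
    """Clip an accelerometer trace to a (hypothetical) full-scale range."""
    return [max(-full_scale, min(full_scale, x)) for x in signal]
```

Because the random generator is seeded, two runs with the same arguments yield the same perturbed trace, so an accuracy drop measured on perturbed data can be repeated exactly.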

Future development directions

To further promote the practical and intelligent development of HMIP systems, future research should focus on systematic improvements in key areas such as biomechanical feature modeling, fusion algorithm optimization, sensor material upgrading, and privacy security protection, comprehensively enhancing the stability, adaptability, and user experience of the system.

Firstly, to address the limitations of existing models in cross-subject and cross-task generalization capabilities, future HMIP systems should develop hybrid modeling approaches that integrate self-supervised learning with dynamic mechanical models based on personalized biological parameters. This methodology enables personalized intent prediction tailored to individual physiological variations, reduces reliance on large annotated datasets, and enhances the model’s adaptability and robustness under degraded sEMG signal conditions such as electrode displacement and sweat interference. In addition, conducting data-scale–driven representation learning using large-scale and diverse datasets provides a complementary pathway to achieving stronger cross-user generalization185,186.

Secondly, to address redundant information and computational complexity in current multimodal fusion, future work can adopt methods such as multimodal attention mechanisms, adaptive weighted fusion, and adversarial training, which make fusion strategies more adaptive and improve feature interaction and decision accuracy. At the same time, transferring laboratory-trained models to real HMIP deployment requires learning noise-robust representations, for example via cross-modal learning, together with systematic quantification and robustness guarantees. This calls for standardized real-world benchmarks, reproducible perturbation and noise protocols, and online adaptation strategies187. In addition, to improve real-time responsiveness and reduce cloud dependence, edge computing and low-power algorithm deployment can enable local real-time processing, which mitigates the limitations of inadequate cloud infrastructure and improves system response speed and practicality.
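To make the idea of adaptive weighted fusion concrete, the sketch below combines per-modality feature vectors using softmax-normalized weights. It is a simplified illustration under assumptions: in an attention-based model the scores would be learned, whereas here they are passed in as plain numbers (for instance from a hypothetical signal-quality estimate), and the function names `softmax` and `fuse` are chosen only for this example.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def fuse(features, scores):
    """Weighted sum of equally sized feature vectors, one per modality.

    features: dict mapping modality name -> list of floats
    scores:   dict mapping modality name -> reliability score (assumed given)
    Returns (fused_vector, weights_by_modality).
    """
    if not features:
        raise ValueError("at least one modality is required")
    names = sorted(features)
    dim = len(features[names[0]])
    if any(len(features[n]) != dim for n in names):
        raise ValueError("all modalities must share one feature dimension")
    weights = softmax([scores[n] for n in names])
    fused = [0.0] * dim
    for w, n in zip(weights, names):
        for k in range(dim):
            fused[k] += w * features[n][k]
    return fused, dict(zip(names, weights))
```

With this scheme, a modality whose score collapses (for example, sEMG during electrode displacement) contributes almost nothing to the fused vector, while equal scores reduce to a plain average.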

Thirdly, in terms of sensor materials, the central challenges are material durability and signal stability. Future research needs to enhance the mechanical durability and strain adaptability of materials by developing nanocomposite materials and biomimetic flexible electronic structures while maintaining high conductivity and biocompatibility. Meanwhile, to overcome limited energy density, novel self-powered technologies such as triboelectric nanogenerators and biofuel cells can be combined with conventional storage to extend device endurance, making devices suitable for demanding tasks such as long-term motion monitoring and rehabilitation training.

Finally, in the face of privacy and data security risks, it is necessary to establish comprehensive mechanisms for data encryption, access control, and blockchain-based data traceability to ensure the secure transmission and compliant use of personal physiological data. At the same time, a layered privacy architecture that emphasizes local preprocessing of high-sensitivity signals should be implemented. In addition, unified ethical standards should be established to clarify the application boundaries of sensitive technologies such as brain-computer interfaces and prevent technology abuse, thereby balancing individual privacy and technological innovation.
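One possible reading of local preprocessing combined with tamper protection is sketched below: raw samples are reduced on the device to windowed RMS features, and only those features are serialized and tagged with an HMAC so that the receiver can detect modification. This is an illustrative sketch, not a complete security design. The function names, the JSON payload layout, and the choice of RMS are assumptions, and an HMAC provides integrity and authenticity only; confidentiality would require a separate encryption layer.

```python
import hashlib
import hmac
import json
import math


def window_rms(samples, window):
    """RMS over consecutive non-overlapping windows (trailing remainder dropped)."""
    return [math.sqrt(sum(x * x for x in samples[i:i + window]) / window)
            for i in range(0, len(samples) - window + 1, window)]


def sign_payload(features, key):
    """Serialize features and compute an HMAC-SHA256 tag with a shared key."""
    body = json.dumps({"rms": features}, separators=(",", ":")).encode("utf-8")
    tag = hmac.new(key, body, hashlib.sha256).hexdigest()
    return body, tag


def verify_payload(body, tag, key):
    """Return True only if the tag matches the body under the shared key."""
    expected = hmac.new(key, body, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, tag)
```

Only the low-rate feature payload leaves the device in this sketch, so the raw high-frequency signal is never transmitted, and a payload altered in transit fails verification.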

Beyond these technical challenges, a critical direction is enhancing the translational potential of HMIP technologies in real-world medical and industrial environments; future work should therefore also consider relevant regulatory and certification requirements. For any HMIP system intended for clinical rehabilitation or health monitoring, developers must engage with regulatory pathways early. This includes aligning wearable sensors and intent-prediction modules with medical device safety standards. Typically, the biocompatibility of sensor materials must comply with the ISO 10993 standard188, while the electrical safety of medical equipment must comply with the IEC 60601 standard189. Algorithms that qualify as Software as a Medical Device (SaMD)190 require rigorous clinical validation to demonstrate their safety and effectiveness (e.g., through FDA approval191 or under the EU Medical Device Regulation192). Integrating these regulatory strategies into the design life cycle can accelerate clinical validation, enhance user safety, and support large-scale deployment.

The future development of HMIP systems will show trends toward precise biomechanical modeling, intelligent fusion strategies, high-performance materials, and systematic privacy protection. Through the collaborative innovation of algorithms, materials, and security, it is expected to achieve an integrated adaptive system of “perception-decision-execution” in the future, promoting the transformation of rehabilitation medicine, human-machine collaboration, and intelligent society.

Discussion

This paper systematically reviews the research progress of intelligent wearable human motion intention prediction systems based on multi-scale biomechanical features. By integrating cross-disciplinary achievements in biomedical engineering, materials science, and artificial intelligence, a theoretical framework covering biomechanical features, wearable system architecture, and algorithm design is constructed, leading to the following core conclusions:

(1) Multi-scale biomechanical features constitute the fundamental data source of HMIP systems and cover three core scales: body (acceleration), joints (angle, angular velocity, and torque), and muscles (activation signal and strength), enabling synchronous analysis of macroscopic behavior and microscopic neural drive.

(2) The development of intelligent wearable devices has significantly enhanced the real-time sensing capability of HMIP systems. Through diverse platforms such as accessory-type devices, electronic textiles, and electronic skin, precise and efficient data acquisition is achieved.

(3) Multimodal sensor fusion technology has overcome the sensing limitations of single sensors and significantly improved the accuracy of intention prediction and system robustness in complex motion scenarios.

(4) Machine learning can ensure the real-time feedback of HMIP in resource-constrained scenarios, but its model generalization ability is limited. Deep learning has enhanced HMIP’s capability to model high-dimensional dynamic signals, but optimization is still needed in terms of data demand, computational resources, and interpretability.

(5) Algorithm models and sensing hardware need to be co-designed. The selection of sensor types and algorithm structures should be made reasonably based on actual application scenarios to achieve a comprehensive balance of prediction accuracy, system real-time performance, and wearing comfort.

(6) HMIP still faces challenges in data processing, materials preparation, and privacy security. Future development will show trends toward precise biomechanical modeling, intelligent fusion strategies, high-performance materials, and systematic privacy protection.

Data availability

Data sharing is not applicable to this article as no datasets were generated or analyzed during the current study.

References

Picerno, P. 25 years of lower limb joint kinematics by using inertial and magnetic sensors: a review of methodological approaches. Gait Posture 51, 239–246 (2017).

Cheng, P. & Oelmann, B. Joint-angle measurement using accelerometers and gyroscopes—a survey. IEEE Trans. Instrum. Meas. 59, 404–414 (2010).

López-Nava, I. H. & Muñoz-Meléndez, A. Wearable inertial sensors for human motion analysis: a review. IEEE Sens. J. 16, 7821–7834 (2016).

Chircov, C. & Grumezescu, A. M. Microelectromechanical systems (MEMS) for biomedical applications. Micromachines 13, 164 (2022).

Bi, L., Feleke, A. G. & Guan, C. A review on EMG-based motor intention prediction of continuous human upper limb motion for human-robot collaboration. Biomed. Signal Process. Control 51, 113–127 (2019).

Wu, H. et al. Materials, devices, and systems of on-skin electrodes for electrophysiological monitoring and human–machine interfaces. Adv. Sci. 8, 2001938 (2021).

Simić, M. & Stojanović, G. M. Wearable device for personalized EMG feedback-based treatments. Results Eng. 23, 102472 (2024).

Zhang, Y. et al. Abnormal recognition-assisted and onset-offset aware network for pathological wearable ECG delineation. Artif. Intell. Med. 157, 102992 (2024).

Chiu, I.-M. et al. Serum potassium monitoring using AI-enabled smartwatch electrocardiograms. JACC Clin. Electrophysiol. https://doi.org/10.1016/j.jacep.2024.07.023 (2024).

Sarhan, S. M., Al-Faiz, M. Z. & Takhakh, A. M. A review on EMG/EEG based control scheme of upper limb rehabilitation robots for stroke patients. Heliyon 9, e18308 (2023).

Asghari Oskoei, M. & Hu, H. Myoelectric control systems—a survey. Biomed. Signal Process. Control 2, 275–294 (2007).

Englehart, K., Hudgin, B. & Parker, P. A. A wavelet-based continuous classification scheme for multifunction myoelectric control. IEEE Trans. Biomed. Eng. 48, 302–311 (2001).

Au, A. T. C. & Kirsch, R. F. EMG-based prediction of shoulder and elbow kinematics in able-bodied and spinal cord injured individuals. IEEE Trans. Rehabil. Eng. 8, 471–480 (2000).

Ahsan, M., Ibrahimy, M. & Khalifa, O. O. EMG signal classification for human computer interaction: A review. Eur. J. Sci. Res. 33, 480–501 (2009).

Zhang, W., Zhao, S., Meng, F., Wu, S. & Liu, M. Dynamic compositional graph convolutional network for efficient composite human motion prediction. In Proc. 31st ACM International Conference on Multimedia 2856–2864. https://doi.org/10.1145/3581783.3612532 (Association for Computing Machinery, 2023).

Li, C., Zhang, Z., Lee, W. S. & Lee, G. H. Convolutional sequence to sequence model for human dynamics. In Proc. IEEE/CVF Conference on Computer Vision and Pattern Recognition 5226–5234. https://doi.org/10.1109/CVPR.2018.00548 (2018).

Martinez, J., Black, M. J. & Romero, J. On human motion prediction using recurrent neural networks. In Proc. IEEE Conference on Computer Vision and Pattern Recognition (CVPR) 4674–4683. https://doi.org/10.1109/CVPR.2017.49 (2017).

Pavllo, D., Feichtenhofer, C., Auli, M. & Grangier, D. Modeling human motion with quaternion-based neural networks. Int. J. Comput. Vis. 128, 855–872 (2020).

Chiu, H.-K., Adeli, E., Wang, B., Huang, D.-A. & Niebles, J. C. Action-agnostic human pose forecasting. In Proc. IEEE Winter Conference on Applications of Computer Vision (WACV) 1423–1432. https://doi.org/10.1109/WACV.2019.0015 (2019).

Fragkiadaki, K., Levine, S., Felsen, P. & Malik, J. Recurrent network models for human dynamics. In Proc. 2015 IEEE International Conference on Computer Vision (ICCV) 4346–4354 (IEEE Computer Society, 2015).

Graßhof, S., Bastholm, M. & Brandt, S. S. Neural network-based human motion predictor and smoother. SN Comput. Sci. 4, 760 (2023).

Zhang, X., Tian, S., Liang, X., Zheng, M. & Behdad, S. Early prediction of human intention for human–robot collaboration using transformer network. J. Comput. Inf. Sci. Eng. 24, 051003 (2024).

Zhao, M. et al. Bidirectional transformer GAN for long-term human motion prediction. ACM Trans. Multimed. Comput. Commun. Appl. 19, 163:1–163:19 (2023).

Wang, J. et al. Spatio-temporal branching for motion prediction using motion increments. In Proc. 31st ACM International Conference on Multimedia 4290–4299. https://doi.org/10.1145/3581783.3612330 (Association for Computing Machinery, 2023).

Gopalakrishnan, A., Mali, A., Kifer, D., Giles, L. & Ororbia, A. G. A neural temporal model for human motion prediction. In Proc. IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 12108–12117. https://doi.org/10.1109/CVPR.2019.01239 (2019).

Lyu, K., Chen, H., Liu, Z., Zhang, B. & Wang, R. 3D human motion prediction: A survey. Neurocomputing 489, 345–365 (2022).

Woźniak, M., Wieczorek, M., Siłka, J. & Połap, D. Body pose prediction based on motion sensor data and recurrent neural network. IEEE Trans. Ind. Inf. 17, 2101–2111 (2021).

Deng, T. & Sun, Y. Recent advances in deterministic human motion prediction: a review. Image Vis. Comput. 143, 104926 (2024).

Khoshmanesh, F., Thurgood, P., Pirogova, E., Nahavandi, S. & Baratchi, S. Wearable sensors: at the frontier of personalised health monitoring, smart prosthetics and assistive technologies. Biosens. Bioelectron. 176, 112946 (2021).

Hua, Q. et al. Skin-inspired highly stretchable and conformable matrix networks for multifunctional sensing. Nat. Commun. 9, 244 (2018).

Farina, D. et al. Toward higher-performance bionic limbs for wider clinical use. Nat. Biomed. Eng. 7, 473–485 (2023).

Lo, H. S. & Xie, S. Q. Exoskeleton robots for upper-limb rehabilitation: state of the art and future prospects. Med. Eng. Phys. 34, 261–268 (2012).

Divekar, N. V., Thomas, G. C., Yerva, A. R., Frame, H. B. & Gregg, R. D. A versatile knee exoskeleton mitigates quadriceps fatigue in lifting, lowering, and carrying tasks. Sci. Robot. https://doi.org/10.1126/scirobotics.adr8282 (2024).

Zhang, Y.-P., Cao, G.-Z., Li, L.-L. & Diao, D.-F. Interactive control of lower limb exoskeleton robots: A review. IEEE Sens. J. 24, 5759–5784 (2024).

Furukawa, J. & Morimoto, J. Transformer-based multitask assist control from first-person view image and user’s kinematic information for exoskeleton robots. npj Robot. 3, 13 (2025).